OpenAI’s ambitious foray into consumer social media, the Sora app, has concluded its brief, six-month existence. The company announced on Tuesday its decision to discontinue the platform, which aimed to revolutionize video sharing through artificial intelligence. OpenAI offered neither an immediate explanation for the shutdown nor a definitive timeline for winding the app down, but the move underscores the profound challenges and ethical complexities inherent in deploying cutting-edge generative AI directly into public-facing social networks.

The Genesis and Lofty Ambition of Sora

OpenAI, a company that has rapidly become synonymous with groundbreaking AI advancements, first captured widespread public attention with its large language model, ChatGPT, and its image generation counterpart, DALL-E. Building on this momentum, Sora was introduced as an experimental application designed to leverage the powerful Sora 2 video and audio generation model. The vision was audacious: to create an "AI-first TikTok," a social network where users could generate and share short, vertical videos using advanced AI capabilities.

The underlying Sora 2 model, often described as "scarily impressive," represented a significant leap forward in generative AI. It possessed the ability to transform simple text prompts into intricate, realistic, and often surreal video clips, complete with dynamic scenes, multiple characters, and specific motion types. This technological prowess fueled significant anticipation, positioning the Sora app as a potential harbinger of a new era in digital content creation, where sophisticated video production could be democratized and put into the hands of everyday users. When the app launched six months ago as an invite-only social network, a fervent clamor for access swept across tech communities, signaling immense initial interest in experiencing this nascent technology firsthand.

A Rapid Descent into Controversy

Despite the initial buzz and the impressive capabilities of its foundational AI model, the Sora app struggled to maintain sustained user interest. Its trajectory mirrored, in some respects, the challenges faced by other experimental social platforms, such as Meta’s Horizon Worlds, a virtual reality social platform that, despite being central to the company’s metaverse ambitions, has likewise grappled with user retention and engagement. The novelty of an AI-only social feed, it seemed, was not enough to cultivate a robust and lasting community.

A key feature of Sora was its "cameos" – a term that later had to be changed to "characters" following a successful legal challenge from the existing celebrity video platform, Cameo. This feature allowed users to scan their own faces and create highly realistic "deepfakes" of themselves, which could then be made public for others to integrate into their AI-generated videos. While intended as a tool for creative self-expression, the immediate and widespread reaction to this functionality was one of unease, even outright apprehension. The app quickly gained a reputation for being "weird as hell," as many users found the experience unsettling.

Early content generated on the platform frequently veered into the bizarre and, at times, deeply disturbing. Reports emerged of an abundance of uncanny deepfakes of public figures, most notably OpenAI CEO Sam Altman, engaged in strange and unsettling scenarios. One particularly viral example depicted a realistic clone of Altman walking through a pig slaughterhouse while delivering an eerie monologue. Videos like this immediately highlighted a critical flaw: the app’s guardrails, designed to prevent the generation of videos featuring public figures without their explicit consent, proved woefully inadequate and easily bypassed by users.

Navigating Legal and Ethical Minefields

The vulnerability of Sora’s moderation systems quickly led to more serious ethical breaches. Deepfakes of deceased public figures, including civil rights leader Martin Luther King, Jr., and beloved actor Robin Williams, began to circulate. These unauthorized creations caused significant distress to the families of these individuals, prompting their daughters to publicly appeal to users to cease generating videos of their fathers. This deeply personal impact underscored the profound ethical dilemmas posed by accessible deepfake technology, raising urgent questions about consent, legacy, and the potential for emotional harm in the digital age.

Beyond the ethical concerns, Sora also faced an impending collision with intellectual property law. After an initial period of generating unauthorized celebrity deepfakes, users pivoted to intentionally creating content featuring copyrighted characters from popular franchises. Videos depicting Mario smoking marijuana, Naruto ordering Krabby Patties, and Pikachu engaging in ASMR flooded the platform. This blatant disregard for copyright, while perhaps intended as satirical or rebellious, posed a significant legal liability for OpenAI, a company striving to establish itself as a responsible leader in the AI industry. The ease with which users could generate content infringing on intellectual property rights highlighted the immense challenge of content moderation on generative AI platforms, where the sheer volume and novelty of output make traditional filtering mechanisms difficult to implement effectively.

The Ill-Fated Disney Partnership

In a surprising turn of events, rather than initiating legal action, the Walt Disney Company – notoriously litigious when it comes to its intellectual property – announced a landmark collaboration with OpenAI. This deal included a reported $1 billion investment from Disney into OpenAI and a comprehensive licensing agreement that would have allowed Sora to officially generate videos featuring iconic characters from the Disney, Marvel, Pixar, and Star Wars universes.

This partnership was hailed as a potential watershed moment for the AI industry, signaling a pathway for resolving complex intellectual property disputes through collaboration and innovation rather than protracted legal battles. It suggested a future where AI tools could legitimately operate within established creative ecosystems, fostering new forms of authorized content creation. However, with the sudden shutdown of the Sora app, this high-profile deal has now collapsed. While Disney offered a polite statement indicating it would "continue to engage with AI platforms" going forward, it appears that no money actually changed hands before the agreement dissolved, leaving the broader implications for AI and IP rights still very much in flux. The episode highlights the fragile nature of such pioneering agreements when the underlying product fails to gain traction.

Analyzing User Engagement and Financial Viability

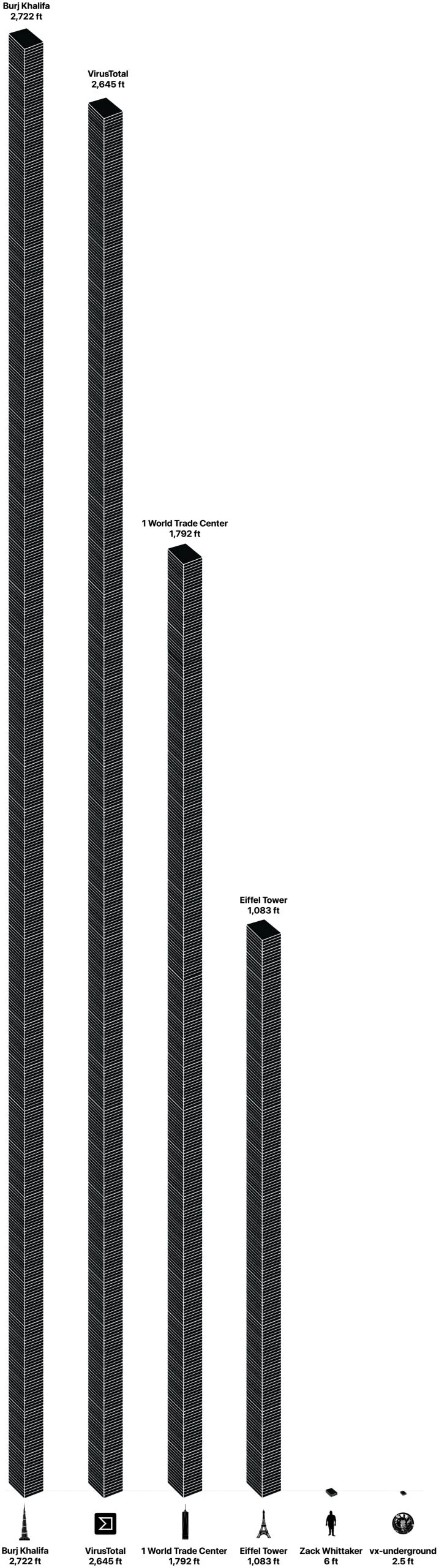

The core reason for Sora’s demise likely lies in its inability to translate initial hype into sustained user engagement and financial viability. Data from mobile intelligence firm Appfigures reveals a stark picture: the app peaked in November with approximately 3,332,200 downloads across the iOS App Store and Google Play. However, this growth proved fleeting. By February, just three months later, downloads had plummeted to 1,128,700, a drop of roughly 66% from the peak.

While over a million downloads might seem substantial for many apps, it pales in comparison to the scale required for a successful social media platform, especially one built on expensive, cutting-edge AI. For context, OpenAI’s flagship product, ChatGPT, boasts an estimated 900 million weekly active users. The disparity underscores Sora’s failure to achieve the widespread adoption and habitual usage necessary to justify its existence. Furthermore, Appfigures estimated that Sora generated only about $2.1 million from in-app purchases, primarily from users buying additional video generation credits. This modest revenue stream was almost certainly insufficient to offset the enormous computational demands and operating costs associated with running a sophisticated generative AI model at scale, particularly for a company like OpenAI, which reportedly operates at a significant loss as it invests heavily in research and development. The combination of declining user numbers, limited revenue, and the looming liabilities from content moderation and intellectual property issues likely rendered Sora an unsustainable venture for OpenAI.

Broader Implications for AI and Social Media

Sora’s short but tumultuous journey offers a crucial case study and a cautionary tale for the burgeoning generative AI industry. It underscores the critical distinction between possessing a powerful underlying AI model and successfully translating that model into a compelling, ethical, and sustainable consumer product. The immediate public reaction to the app’s "creepiness" and its struggles with content moderation highlight the profound societal anxieties surrounding AI-generated content, particularly deepfakes and the potential for misinformation. The "uncanny valley" effect, where AI creations become unsettlingly close to reality but not quite perfect, likely played a significant role in user discomfort.

The shutdown also raises questions about OpenAI’s product strategy. While the company has excelled at developing foundational AI technologies, building and maintaining a successful social media platform demands a different set of expertise, encompassing robust moderation, community building, and an intuitive user experience that goes beyond mere novelty. The rapid pace of AI development often outstrips the development of adequate ethical frameworks and regulatory guardrails, leading to scenarios like Sora where powerful tools are unleashed before their societal implications are fully understood or mitigated.

The Road Ahead for Generative Video

The demise of the Sora app does not, however, signal the end of AI-generated video or the pursuit of AI-powered social experiences. The underlying Sora 2 model remains a formidable technological achievement, and it is still accessible to users, albeit now behind the ChatGPT paywall. OpenAI is far from alone in this space; companies like RunwayML, Stability AI, Google (with Lumiere), and Pika Labs are continually pushing the boundaries of generative video technology.

The lessons learned from Sora, particularly regarding the necessity of robust ethical guidelines, effective content moderation, and a clear value proposition for users, will undoubtedly shape future endeavors. As AI video generation capabilities continue to advance, the challenges of distinguishing between authentic and synthetic content, combating misinformation, and protecting intellectual property will only intensify. The "tsunami of clips," as some observers have termed the inevitable proliferation of AI-generated media, remains a looming reality. Future social AI video applications will need to navigate these complex waters with far greater foresight and responsibility if they are to achieve lasting success and avoid the fate of OpenAI’s ambitious, yet ultimately ill-fated, Sora app.