The ambitious expansion of the artificial intelligence sector and the pervasive influence of social media platforms are increasingly colliding with the practical demands of the real world. This collision takes various forms, from local communities resisting the physical footprint of technological infrastructure to legal systems holding digital behemoths accountable for their societal impact. Recent developments point to a significant shift: the era of largely unchecked technological expansion may be drawing to a close.

This emerging tension marks a pivotal moment. The initial hype surrounding transformative technologies like AI, and the long-established dominance of social media platforms, are being tested against the expectations of communities, the boundaries of legal frameworks, and the complex realities of ethical deployment. The narrative is shifting from one of boundless innovation to one tempered by accountability, sustainability, and a deeper consideration of societal well-being.

The Land Grab for Data Centers: Local Communities Push Back

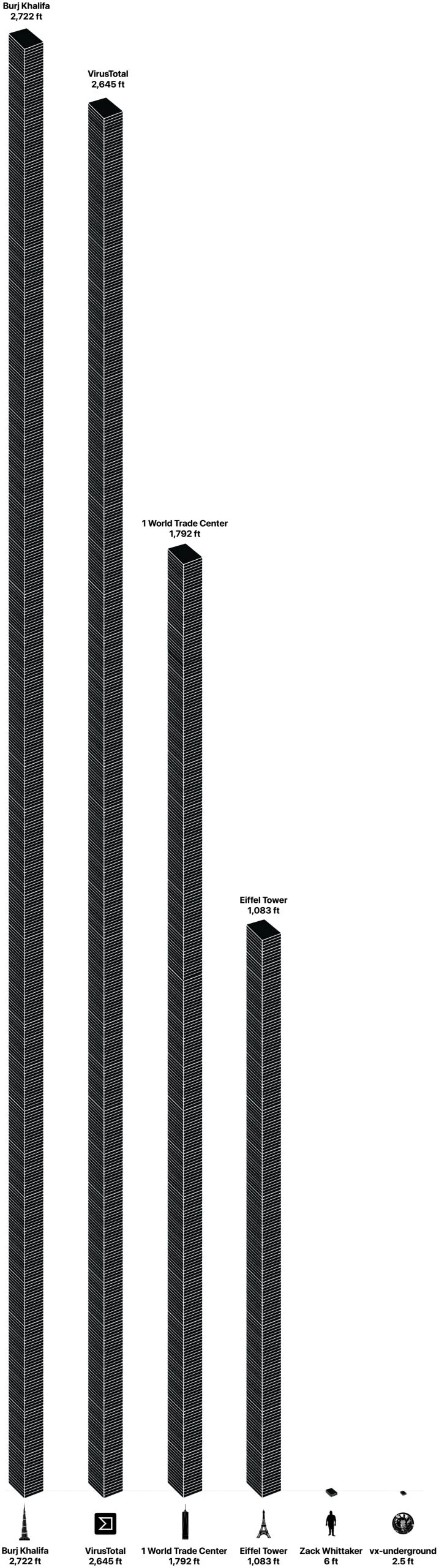

The digital revolution, and the current explosion in artificial intelligence in particular, relies heavily on a physical foundation: massive data centers. These facilities are the engine rooms of the internet, housing the servers, storage, and networking equipment needed to process vast amounts of data, train sophisticated AI models, and power cloud services. Their proliferation, however, comes at a significant cost, and local communities often bear much of it.

A poignant example recently emerged from Kentucky, where an 82-year-old woman reportedly declined a $26 million offer from an AI company that wanted to build a data center on her family farm. Her refusal is more than a personal decision; it symbolizes a growing resistance movement. Although the company may still pursue rezoning of an adjacent 2,000 acres, the episode illuminates a fundamental clash between corporate expansion and individual or communal values.

Data centers are notoriously resource-intensive. They require large tracts of land, often in rural or semi-rural areas, to accommodate their sprawling infrastructure. Their electricity demand is enormous and frequently requires new substations and transmission capacity. In many regions that demand is met partly by fossil-fuel generation, which adds to carbon emissions. Many facilities also rely on evaporative cooling, which consumes large quantities of water and can strain local supplies, particularly in regions already grappling with scarcity. Noise from cooling equipment and the visual impact of massive, windowless buildings further disrupt the quality of life for nearby residents.

Historically, communities have resisted large industrial projects that threaten their way of life, environmental integrity, or property values. From manufacturing plants to landfills, the pattern of local opposition to perceived external impositions is well established. The current wave of data center development is simply the latest iteration of this dynamic. For many residents, the financial incentives offered by tech companies, however substantial, do not outweigh concerns about environmental degradation, altered landscapes, increased traffic, or the loss of agricultural land and heritage. This resistance forces tech companies to weigh not just economic feasibility but also their social license to operate. Securing that license in turn demands greater transparency, community engagement, and sustainable practices in infrastructure development.

OpenAI’s Sora: A Vision Grounded

In the realm of generative AI, where innovation often outpaces practical deployment, OpenAI’s Sora project served as a prime example of both immense promise and inherent challenges. Unveiled with much fanfare, Sora was touted as a groundbreaking text-to-video model capable of generating highly realistic and imaginative video clips from simple text prompts. Its demonstrations showcased striking fidelity, intricate scenes, and dynamic camera movements, and they quickly sparked widespread excitement across creative industries and the general public. The tool seemed poised to democratize video creation, allowing anyone to bring complex visual narratives to life without extensive technical skills or resources.

However, the journey from impressive demonstration to scalable, responsible product is fraught with obstacles. Reports of Sora’s shutdown, or at least a significant re-evaluation of its public release strategy, underscore the gap between research breakthroughs and real-world application. The exact reasons for shutting down its public-facing app have not been made public, but several factors commonly weigh on such decisions in the nascent field of generative video.

Technical hurdles are paramount. Generating consistent, high-fidelity video over longer durations, maintaining object permanence, and ensuring plausible physics within generated scenes are all difficult computational problems. The processing power and storage required to run such a service at scale are enormous, which translates into very high operating costs.

Beyond the technical challenges, ethical considerations loom large. The ability to create hyper-realistic synthetic media raises profound concerns about misinformation, deepfakes, and their potential weaponization. The implications for intellectual property, copyright infringement (given that such models are trained on vast datasets of existing media), and the displacement of human artists and creators are also the subject of intense debate. Deploying such a powerful tool responsibly requires robust safety protocols, content moderation, and clear provenance tracking, all of which are difficult to implement at scale.

Sora’s trajectory is not unique. The generative AI space is highly competitive, with companies like Google (with Imagen Video), RunwayML, and others actively developing similar technologies. The reported decision by OpenAI to halt Sora’s public app could signal a strategic pivot, a recognition that the technology is not yet mature enough for widespread, unsupervised use, or a prioritization of other projects. This pause serves as a crucial reminder that the "hype cycle" often outpaces the practical, ethical, and economic realities of deploying cutting-edge AI, pushing developers to reconsider the balance between rapid innovation and responsible product development.

Meta’s Legal Reckoning: The Price of Engagement

While AI grapples with its physical and ethical footprint, established tech giants like Meta are confronting a different kind of reality check: legal accountability for the societal impact of their core products. A landmark jury finding of negligence against Meta (and, reportedly, YouTube) in a social media addiction trial represents a significant turning point in the ongoing debate about platform responsibility.

For years, social media platforms have faced increasing scrutiny over their design choices, particularly concerning their potential to foster addictive behaviors and contribute to mental health issues, especially among younger users. The "attention economy," where platforms optimize for maximum engagement through algorithmic feeds, notifications, and gamified interactions, has been widely criticized for prioritizing screen time over user well-being. Researchers and mental health professionals have published numerous studies linking excessive social media use to increased rates of anxiety, depression, body image issues, and cyberbullying.

In the U.S., platform liability has long been constrained by Section 230 of the Communications Decency Act, which largely shields platforms from liability for content posted by their users. These new lawsuits, however, shift the focus from content liability to product design and corporate negligence. Plaintiffs argue that platforms intentionally design features known to be addictive or harmful, fail to adequately warn users about these risks, or neglect to implement safeguards, particularly for minors. Commentators often draw parallels with historical litigation against the tobacco and opioid industries, in which companies were eventually held accountable for the design and marketing of products known to be harmful.

A jury finding of negligence against a company of Meta’s stature sends a powerful message. It signals that the legal system is increasingly willing to scrutinize the internal design choices and business practices of social media companies. The ruling could open the floodgates for similar lawsuits, potentially leading to substantial financial penalties, forced changes in platform design, or stricter regulatory oversight. Its social and cultural impact could also be considerable. It empowers advocacy groups, provides a precedent for future cases, and intensifies public discourse around digital well-being, parental control, and the ethical obligations of tech companies. For Meta, whose business model is predicated on maximizing user engagement, this legal reckoning poses a fundamental challenge to existing strategies and could force a significant re-evaluation of its product development philosophy.

The Broader Landscape: AI Hype Meets Reality

These seemingly disparate events form part of a larger pattern: a Kentucky landowner’s refusal to sell her farm for an AI data center, the reported re-evaluation of a cutting-edge generative AI tool, and a negligence verdict against a social media giant. Together they illustrate a crucial inflection point where the technological ideal is meeting societal and practical realities.

The pervasive "AI hype cycle" has often painted a picture of seamless integration and boundless innovation. However, the reality is far more complex. The physical infrastructure required to power AI demands vast resources, leading to environmental concerns and community pushback. The ethical implications of powerful AI models necessitate cautious deployment and robust safeguards. The long-term societal effects of digital platforms, once overlooked in the pursuit of growth, are now becoming central to legal and regulatory debates.

This period marks a maturation for the tech industry. Investors, once focused primarily on user growth and disruptive potential, are increasingly weighing sustainability, regulatory risk, and ethical governance. The "move fast and break things" ethos, once a Silicon Valley mantra, is being challenged by a growing demand for responsible innovation. Regulators around the world are accelerating efforts to govern AI, and landmark legislation such as the EU AI Act is setting precedents for safety, transparency, and human oversight.

The cumulative effect is a demand for greater transparency, accountability, and a more human-centric approach to technological development. The public’s perception of tech companies is evolving, with an increasing skepticism about promises of utopian futures and a greater focus on tangible harms and benefits.

Looking Ahead: A New Era of Scrutiny

The confluence of these challenges suggests that the tech industry is entering a new era characterized by heightened scrutiny and a more balanced relationship with society. Future technological advancements, particularly in AI, will likely require a more proactive engagement with communities, a deeper consideration of environmental impacts, and a rigorous adherence to ethical guidelines from the outset of development.

For social media platforms, the legal precedents now being set may compel a re-evaluation of product design, potentially shifting the focus from maximizing engagement at all costs to prioritizing user well-being and digital citizenship. This might involve greater investment in features that promote healthier online habits, more robust age verification and parental controls, and a fundamental rethinking of algorithmic incentives.

The path forward for tech giants involves navigating a complex interplay of innovation, regulation, ethics, and public trust. The industry's ability to adapt to these evolving demands will define its next chapter. It must build technology that is not only powerful but also sustainable, responsible, and genuinely beneficial to society, after decades spent reshaping the world. The recent setbacks and challenges are not necessarily signs of failure. They are better understood as crucial checkpoints on the journey toward a more integrated and accountable technological future.