In an era defined by the accelerating pace of artificial intelligence, the quest for specialized computing power has become a strategic imperative for technology giants. Following Amazon CEO Andy Jassy’s announcement of a landmark $50 billion investment deal with OpenAI, the spotlight intensified on the core technology enabling this colossal commitment: Amazon Web Services’ (AWS) custom-designed Trainium chip. This proprietary silicon, developed within Amazon’s advanced chip development lab, represents a significant thrust into the fiercely competitive AI hardware market, signaling Amazon’s ambition to democratize high-performance AI infrastructure and potentially disrupt the long-standing dominance of incumbents like Nvidia.

The tour of this critical facility, granted to a select few, offered a rare glimpse into the engineering prowess and strategic vision underpinning AWS’s AI future. Guided by Kristopher King, the lab’s director, and Mark Carroll, director of engineering, along with public relations representative Doron Aronson, the visit illuminated not only the technical achievements of Trainium but also its profound implications for the broader AI ecosystem. This move is not merely about creating another chip; it is about establishing an end-to-end, vertically integrated AI stack that promises both performance and cost efficiency, fundamentally reshaping how AI models are trained and deployed at scale.

The Dawn of Custom Silicon in AI

The landscape of artificial intelligence has undergone a dramatic transformation in recent years, largely driven by the emergence of large language models (LLMs) and generative AI applications. These sophisticated models require an astronomical amount of computational power, both for their initial "training" — the process of feeding vast datasets to the model to learn patterns — and for "inference" — the subsequent application of the trained model to generate responses or make predictions. Historically, general-purpose graphics processing units (GPUs), primarily from Nvidia, became the de facto standard for AI workloads due to their parallel processing capabilities. Nvidia’s CUDA software platform further cemented this position, creating a formidable ecosystem that made switching to alternative hardware a daunting, costly, and time-consuming prospect for developers.

However, the insatiable demand for AI compute, coupled with the high cost and occasional supply bottlenecks of premium GPUs, spurred major cloud providers and AI developers to seek alternatives. Companies like Google pioneered their own Tensor Processing Units (TPUs), while Microsoft has also invested in custom AI silicon. Amazon’s entry into this fray with its custom chip portfolio, including the Graviton CPU, Inferentia for inference, and now Trainium for both training and inference, is a direct response to this market need. It reflects a broader industry trend where hyperscalers are taking greater control over their hardware stack to optimize performance, reduce operational costs, and secure their supply chains.

Trainium’s Technical Evolution and Strategic Significance

Trainium was initially conceived with a primary focus on accelerating the training of machine learning models, a critical but expensive phase in AI development. However, as AI applications proliferated, the bottleneck shifted significantly towards inference. Running AI models to generate real-time responses for millions of users became the most demanding and costly part of the AI lifecycle. Recognizing this, Amazon’s engineering team re-tuned and optimized Trainium to excel in inference workloads as well. This adaptability is a key factor in its appeal to major AI labs.

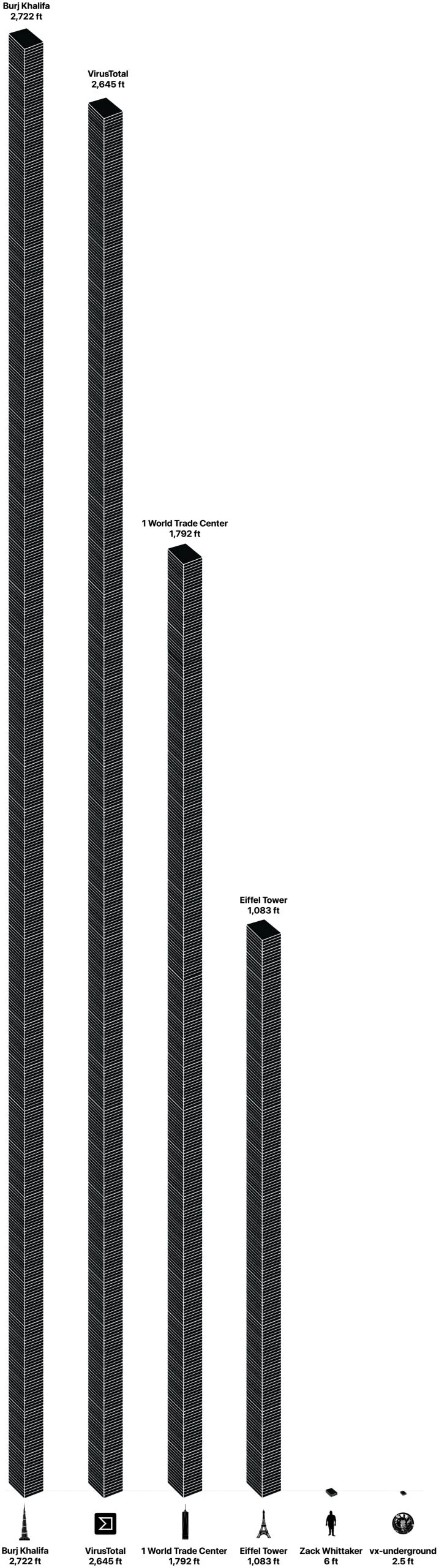

The current iteration, Trainium3, released in December, showcases significant advancements. AWS claims that its new chips, when running on the specialized Trn3 UltraServers, can offer comparable performance to traditional cloud servers at up to 50% lower operational cost. This cost-efficiency is paramount in an industry where computational expenses can quickly escalate to billions of dollars for cutting-edge models.

A crucial innovation lies in the accompanying Neuron switches. As Carroll explained, these switches enable every Trainium3 chip to communicate directly with every other chip in a mesh configuration. This all-to-all networking reduces latency, allowing faster data transfer and processing across the massive clusters required for modern AI. Carroll emphasized that the combination of Trainium3 and Neuron switches is "breaking all kinds of records," particularly in "price per power," a metric that bears directly on customers’ costs. When trillions of tokens are processed daily, such efficiencies add up to substantial savings.
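To get a feel for what all-to-all connectivity means at scale, here is a rough back-of-the-envelope sketch. If each of n chips can reach every other chip directly, there are n(n-1)/2 distinct chip pairs, each one hop apart. For contrast, the sketch also shows the worst-case hop count if the same chips were wired as a simple bidirectional ring. The cluster sizes are hypothetical (the article gives no per-server chip count), and the real fabric routes traffic through Neuron switches, not dedicated point-to-point wires.

```python
def chip_pairs(n_chips: int) -> int:
    """Distinct chip pairs in an all-to-all fabric of n_chips chips."""
    if n_chips < 0:
        raise ValueError("n_chips must be non-negative")
    return n_chips * (n_chips - 1) // 2


def ring_worst_case_hops(n_chips: int) -> int:
    """Worst-case hops between two chips on a bidirectional ring."""
    if n_chips < 0:
        raise ValueError("n_chips must be non-negative")
    return n_chips // 2


# Hypothetical cluster sizes, chosen only for illustration.
for n in (16, 64, 128):
    print(f"{n} chips: {chip_pairs(n)} direct pairs, "
          f"ring worst case {ring_worst_case_hops(n)} hops")
```

The pair count grows quadratically, which is one reason this kind of connectivity is typically delivered through switches instead of individual cables.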

Another notable engineering change in the Trainium3 generation is the move to liquid cooling. While earlier versions relied on air cooling, liquid cooling addresses the immense heat generated by high-density AI compute. It offers significant energy advantages, contributing to lower operating costs and a reduced environmental footprint, in line with growing corporate sustainability goals. Managing thermal output efficiently allows for more powerful chips and denser server configurations, pushing the boundaries of what’s possible within data center infrastructure.

Challenging the Incumbent: Nvidia’s Realm

Amazon’s strategic push into custom silicon directly challenges Nvidia’s near-monopoly in the AI chip market. For years, developers building AI applications were largely locked into Nvidia’s CUDA programming model, making it difficult to port their existing code to alternative hardware. This "switching cost" has been a significant barrier to competition.

However, Amazon’s chip team has made a concerted effort to mitigate this. They proudly announced that Trainium now supports PyTorch, one of the most popular open-source frameworks for building AI models. This compatibility is a game-changer. According to Carroll, transitioning a PyTorch-based application to Trainium can be as simple as "basically a one-line change, and then recompile, and then run on Trainium." This ease of migration significantly lowers the barrier for developers to experiment with and adopt Trainium, directly eroding Nvidia’s ecosystem advantage. Many open-source models hosted on platforms like Hugging Face, built with PyTorch, can now theoretically run efficiently on Trainium.
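Carroll does not say which line changes, so the following is only a sketch of what such a migration could look like. It assumes Trainium is reached through the PyTorch/XLA backend (the `torch_xla` package), which is an assumption and not something the lab confirmed; the names `DEVICE_NAME` and `forward_pass` are hypothetical. The model code is device-agnostic, and the device selection is the single line that differs between targets.

```python
import importlib.util

# The "one line": choose the target device. Assumption: Trainium is exposed
# through PyTorch/XLA, so we use it when torch_xla is installed, else the CPU.
DEVICE_NAME = "xla" if importlib.util.find_spec("torch_xla") else "cpu"


def forward_pass(device_name: str = DEVICE_NAME):
    """Run one forward pass of a tiny linear model on the chosen device."""
    import torch  # imported lazily so the device choice above needs no torch

    if device_name == "xla":
        # Assumption: the XLA device maps to a Trainium chip on this host.
        import torch_xla.core.xla_model as xm
        device = xm.xla_device()
    else:
        device = torch.device(device_name)

    model = torch.nn.Linear(4, 2).to(device)  # unchanged model code
    batch = torch.randn(8, 4, device=device)  # unchanged input code
    return model(batch)                       # output has shape (8, 2)
```

Calling `forward_pass("cpu")` exercises the same model code on a CPU, which is the point of the pattern: only the device selection changes between targets. The recompile step Carroll mentions is not shown here.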

Furthermore, Amazon is not relying solely on its own chip development. The company recently announced a partnership with Cerebras Systems to integrate Cerebras’s inference chips on servers running alongside Trainium. This collaboration points to a hybrid strategy: pairing Trainium with specialized hardware from other chipmakers to offer customers a broader portfolio of high-performance, low-latency AI options.

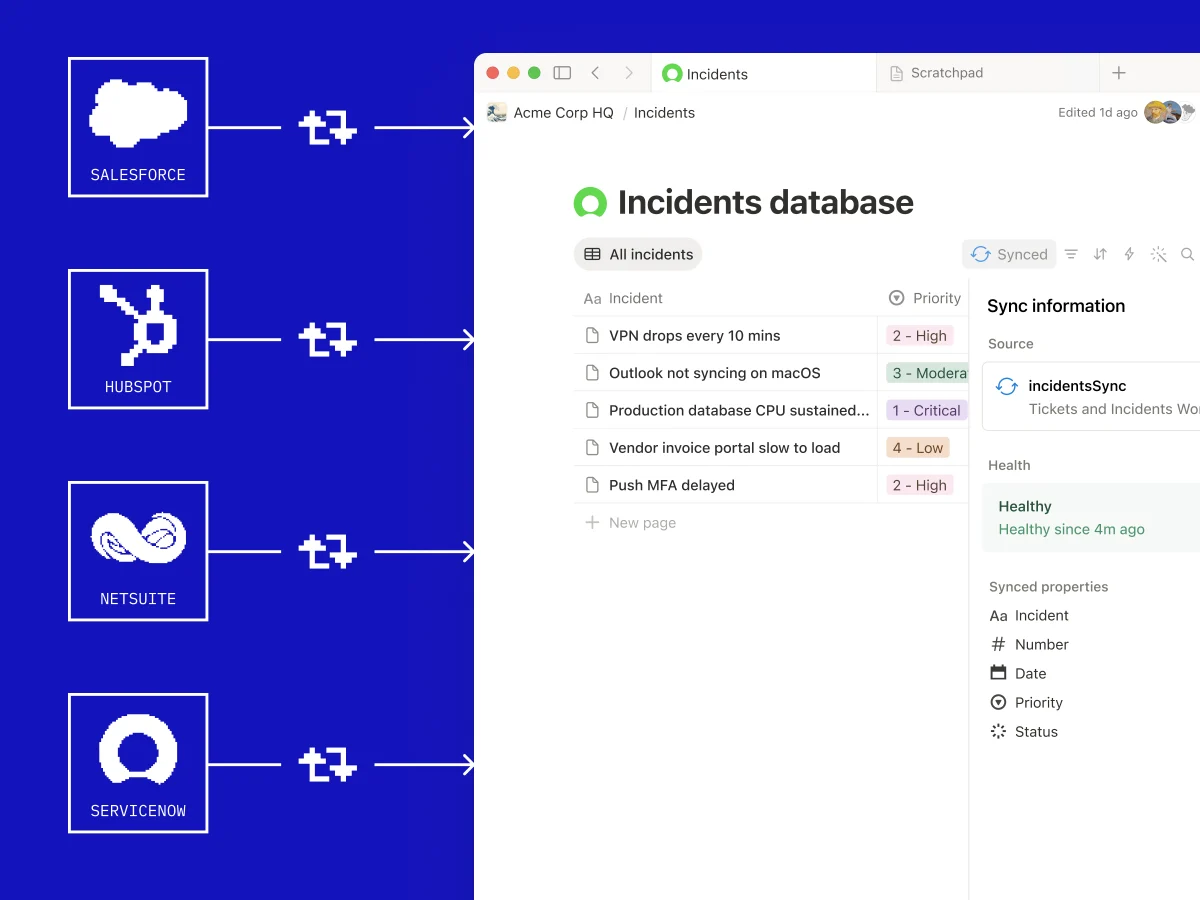

Beyond the chips themselves, Amazon’s vertical integration extends to the entire server infrastructure. The team designs not just the chips but also the servers that host them, including networking components, the Nitro virtualization technology (a hardware-software combination that allows multiple instances of software to run efficiently on a single server), state-of-the-art liquid cooling systems, and the server sleds that house all this gear. This holistic approach ensures optimal performance, reliability, and cost control across the entire stack, a classic Amazon playbook: identify a core competency, build an in-house alternative, and then offer it as a service.

Validation from Industry Titans

The efficacy of Trainium is not merely an internal AWS claim; it has garnered significant validation from some of the leading players in the AI and tech industries.

Anthropic, a prominent AI research company and creator of the Claude large language model, has been a cornerstone customer for AWS since its early days. Anthropic later added Microsoft as a cloud partner, yet AWS has remained a critical platform. The scale of Anthropic’s reliance on Trainium is staggering: over one million Trainium2 chips are currently deployed to run Claude, primarily within "Project Rainier," one of the world’s largest AI compute clusters, which went live in late 2025 with 500,000 chips. This real-world, high-stakes deployment by a leading AI lab is a powerful testament to Trainium’s capabilities.

The recent $50 billion deal with OpenAI further solidified Trainium’s standing. As part of this agreement, AWS committed to supplying OpenAI with 2 gigawatts of Trainium computing capacity. The agreement makes AWS the exclusive provider for OpenAI’s new AI agent builder, Frontier, a product that could significantly shape the future of AI applications. The magnitude of this commitment, especially given that Anthropic and Amazon’s own Bedrock service are already consuming Trainium chips rapidly, underscores Amazon’s confidence in its custom silicon and its ability to scale. A recent report in the Financial Times suggested that Microsoft might view OpenAI’s deal with Amazon as a potential violation of its own partnership agreement, which highlights the intense strategic competition surrounding AI infrastructure. Regardless of any potential disputes, the OpenAI deal positions Trainium as a foundational technology for a future where AI agents become ubiquitous.

Even Apple, a notoriously secretive company, offered a rare public endorsement in 2024. At an AWS re:Invent conference, Apple’s director of AI publicly lauded AWS’s custom chips, specifically mentioning Graviton (a low-power, ARM-based server CPU and the team’s first breakout chip) and Inferentia (designed for inference), and acknowledging Trainium, which was then a newer offering. Such praise from a company known for its stringent internal development and high performance standards speaks volumes about the quality and impact of Amazon’s custom silicon.

Inside the Innovation Hub: The Austin Lab

The birthplace of Trainium is Amazon’s custom chip-designing unit, rooted in the acquisition of Israeli chip designer Annapurna Labs in January 2015 for approximately $350 million. This acquisition, now over a decade old, provided Amazon with a seasoned team of engineers whose expertise forms the bedrock of its custom silicon strategy. The Annapurna legacy is still evident, with its logo prominently displayed throughout the facility.

Located in Austin’s upscale "The Domain" district, often dubbed "Austin’s Silicon Valley," the lab itself is a blend of corporate professionalism and raw engineering grit. While the offices feature standard cubicles and meeting rooms, the true heart of innovation lies in a noisy, industrial space at the back of a high floor. This lab, roughly the size of two large conference rooms, hums with the whirring of equipment fans, a stark contrast to the quiet corporate environment. Here, engineers, dressed casually, work to bring cutting-edge silicon to life.

This is not a manufacturing facility, so the pristine "white hazmat suits" of chip fabrication plants are absent. Trainium3, for instance, is a state-of-the-art 3-nanometer chip produced by TSMC, a global leader in advanced semiconductor manufacturing. Other chips in Amazon’s portfolio are produced by Marvell. The Austin lab is where the critical "bring-up" process occurs.

As King explained, a silicon "bring-up" is an intense, all-night event when a newly manufactured chip is activated for the very first time. After 18 months of design and development, this is the moment of truth, verifying whether the chip functions as intended. It’s rarely problem-free. King recounted an anecdote from the Trainium3 bring-up: on the prototype, initially designed for air cooling, the dimensions of the heat sink attachment were slightly off. Unfazed, the team quickly procured a grinder and manually adjusted the metal in a conference room to avoid disrupting the "pizza party atmosphere" of the bring-up. This impromptu problem-solving epitomizes the agile and dedicated culture within the lab.

The lab is equipped with both custom-made and commercial tools for rigorous testing and analysis. It even houses a welding station where skilled engineers like Isaac Guevara perform incredibly delicate work, micro-welding integrated circuit components under a microscope, a testament to the specialized expertise required.

The pride of the lab is a display of server "sleds," the trays that house Trainium AI chips, Graviton CPU chips, and other supporting components. These sleds, stacked together with custom-designed networking components, form the high-performance systems that power AI workloads for customers like Anthropic and OpenAI.

The Testing Grounds: A Private Data Center

Beyond the design lab, Amazon maintains a private data center for quality assurance and testing purposes. Located a short drive away in a co-location facility, this center does not run customer workloads but is dedicated exclusively to validating the performance and reliability of Amazon’s custom hardware before mass deployment. The environment is challenging: earplugs are mandatory because of the deafening roar of the cooling systems, and the air carries the distinct, acrid smell of heated metal.

Within this facility, rows upon rows of servers hum, integrating Amazon’s full suite of custom chips: Graviton CPUs, liquid-cooled Trainium3, and Nitro virtualization technology. The liquid cooling system operates on a closed loop, recirculating coolant to enhance energy efficiency and reduce environmental impact. Hardware development engineers, such as David Martinez-Darrow, are constantly at work, performing maintenance and ensuring the optimal functioning of these critical systems.

A Future Built on Custom Silicon

The intense scrutiny and high expectations surrounding Amazon’s custom chip division are palpable. CEO Andy Jassy frequently highlights the team’s achievements, proudly declaring Trainium a "multibillion-dollar business for AWS" and one of the AWS technologies he is most excited about. This executive-level endorsement underscores the strategic importance of custom silicon to Amazon’s long-term vision.

The engineers in Austin, already working on the next iteration, Trainium4, feel this pressure keenly. The "bring-up" events, which involve 24/7 work for weeks, are crucial for rapidly identifying and rectifying issues, ensuring chips can be mass-produced and deployed efficiently into data centers. As Carroll noted, "It’s very important that we get as fast as possible to prove that it’s actually going to work. So far, we’ve been doing really well."

Amazon’s investment in Trainium and its broader custom silicon strategy represents more than just a foray into chip design; it’s a foundational pillar for its future in cloud computing and AI. By offering a high-performance, cost-effective, and increasingly easy-to-adopt alternative to traditional AI hardware, Amazon is not just participating in the AI revolution; it’s actively shaping its infrastructure, driving competition, and expanding access to the immense computational power necessary for the next generation of artificial intelligence.