The Trump administration has unveiled a comprehensive legislative framework for artificial intelligence, proposing a single national policy for the United States. This initiative aims to consolidate regulatory authority within the federal government, potentially curtailing the growing efforts by individual states to govern the development and deployment of AI technologies. The framework’s proponents argue that a unified federal approach is essential to foster innovation and maintain American leadership in the global AI race, while critics express concern that it could undermine vital state-level protections and accountability mechanisms.

The Drive for a Unified National AI Strategy

At the core of the administration’s proposal is a belief that a patchwork of disparate state regulations would stifle progress and create undue burdens for AI developers. A White House statement accompanying the framework emphasized that its success hinges on uniform application across the nation, warning that conflicting state laws could impede American innovation and its competitive edge in the rapidly evolving AI landscape. This perspective aligns with a broader push by the administration to streamline regulatory environments and remove what it perceives as unnecessary barriers to technological advancement, a philosophy often championed by "accelerationists" within the tech sector.

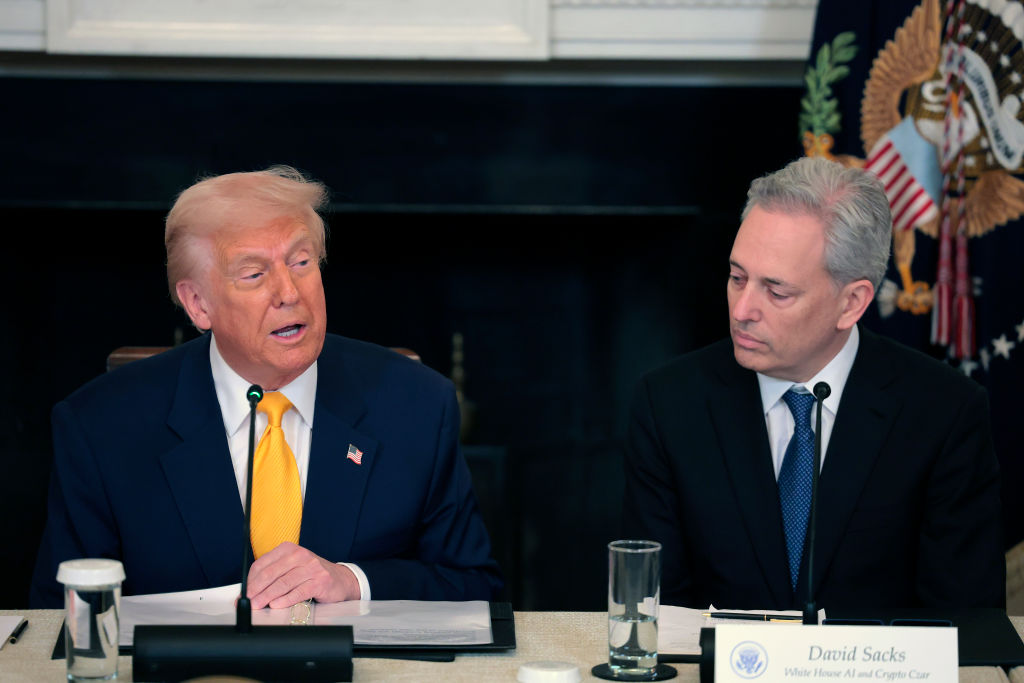

This framework follows an executive order issued three months earlier, which directed federal agencies to scrutinize and challenge state AI laws deemed "onerous." That order also tasked the Commerce Department with compiling a list of such state regulations, a list that could affect states’ eligibility for federal funding, including critical broadband grants. While that list has yet to be published, the new legislative framework signals a more concrete vision for a centralized regulatory architecture, echoing the administration’s prior AI strategies, which have consistently favored growth and innovation over stringent guardrails. David Sacks, a prominent venture capitalist and the White House AI czar, has been identified as a key proponent of this light-touch regulatory philosophy.

Federalism and the Preemption Debate

The concept of federal preemption, where federal law supersedes state law, is a long-standing principle in the American legal system. Historically, it has been invoked in areas deemed of national importance, such as interstate commerce, national security, and certain environmental regulations. In the context of AI, the administration posits that the development and use of AI is an "inherently interstate" issue, deeply intertwined with national security and foreign policy, thereby justifying federal dominance.

States, however, have increasingly stepped into regulatory voids left by federal inaction, particularly in emerging technological sectors. The absence of comprehensive federal data privacy laws, for example, prompted states like California to enact the California Consumer Privacy Act (CCPA), which has since served as a model for other states. Similarly, in the realm of AI, states have begun to act as "laboratories of democracy," experimenting with different regulatory approaches to address novel risks. New York’s RAISE Act and California’s SB-53, for instance, are state-level laws designed to mandate public documentation of safety protocols for large AI companies. These initiatives reflect a belief that state governments are often more agile and responsive to specific concerns within their jurisdictions, allowing for tailored solutions that might be overlooked in a broad national policy.

The proposed framework, while acknowledging the principle of federalism, carves out only narrow exceptions for state authority. States would largely retain jurisdiction over generally applicable laws covering fraud, child protection, and zoning, as well as over their own governmental use of AI. However, the framework explicitly draws a "hard line" against states regulating AI development itself, underscoring the federal government’s intent to maintain sole authority in this domain. This approach has sparked a significant debate about the appropriate balance of power between federal and state governments in regulating rapidly advancing technologies.

Child Safety: Shifting Responsibility

One of the most notable aspects of the framework concerns child safety in the digital realm. The proposal pivots away from placing primary accountability on AI platforms, instead emphasizing parental responsibility. This stance emerges at a time when child safety has become a central and emotionally charged issue in the broader debate surrounding AI and social media. Concerns over the impact of digital technologies on minors’ mental health, exposure to harmful content, and the potential for exploitation have prompted several states to pass aggressive laws aimed at protecting children and imposing greater obligations on tech companies.

The administration’s framework suggests that "parents are best equipped to manage their children’s digital environment and upbringing," advocating for Congress to provide parents with tools such as account controls for privacy and device usage management. While the framework does state that AI companies should implement features to "reduce the risks of sexual exploitation and harm to minors," it stops short of laying out clear, enforceable requirements. It uses qualifiers like "commercially reasonable" when discussing safeguards and avoids establishing explicit prerequisites for platforms. Critics argue that this approach places an undue burden on parents, many of whom may lack the technical expertise or resources to effectively navigate the complexities of AI-powered environments, and that it may absolve tech companies of their critical role in designing safer products by default.

This position contrasts sharply with the direction taken by some states, which have moved to regulate AI companion chatbots and other AI-powered tools aimed at children, placing more explicit responsibility on developers for the safety and well-being of young users. The debate over who bears ultimate responsibility for child safety online – parents, platforms, or government regulators – remains a contentious issue with significant social and cultural implications.

Fostering Innovation Through Light-Touch Regulation

The framework outlines seven key objectives, with a strong emphasis on fostering innovation and enabling the rapid scaling of AI technologies. It proposes a "minimally burdensome national standard," aligning with the administration’s broader strategy to remove perceived regulatory impediments to growth across various industries. This pro-growth, light-touch regulatory philosophy is seen by many in the AI industry as a welcome development, providing the clarity and predictability they believe are necessary to accelerate development and deployment without the distraction of navigating diverse state-specific compliance regimes.

Proponents such as Teresa Carlson, president of General Catalyst Institute, argue that such a framework is precisely what startups require: a clear national standard to build and scale rapidly, free from the complexities of a "patchwork of conflicting state AI laws." The argument is that an environment with fewer regulatory hurdles allows companies to allocate more resources to research and development, ultimately leading to faster advancements and preserving the United States’ competitive edge against nations like China, which are also investing heavily in AI.

However, critics argue that an overly permissive regulatory environment could lead to unforeseen harms. The absence of robust liability frameworks, independent oversight, or clear enforcement mechanisms for potential novel harms caused by AI is a significant concern. They fear that prioritizing innovation at all costs might overlook critical ethical considerations, such as algorithmic bias, privacy violations, or the proliferation of misinformation, allowing these issues to become entrenched before effective remedies can be implemented.

Liability, Copyright, and Free Speech in the AI Era

A crucial element of the framework is its stance on developer liability. It explicitly seeks to prevent states from "penalizing AI developers for a third party’s unlawful conduct involving their models," effectively proposing a liability shield for developers. This provision evokes parallels with Section 230 of the Communications Decency Act, which largely protects online platforms from liability for content posted by their users. The debate over platform liability for user-generated content has been ongoing for years, and extending similar protections to AI developers raises new questions about accountability when AI models generate harmful or illegal outputs. Critics like Brendan Steinhauser, CEO of The Alliance for Secure AI, contend that this approach offers "no path to accountability for AI developers for the harms caused by their products," thereby shifting the burden of addressing AI-generated harm away from the creators of the technology.

On copyright, the framework attempts to strike a balance, acknowledging the need to protect creators while allowing AI systems to be trained on existing works, citing the principle of "fair use." This language mirrors the arguments made by AI companies currently facing a growing number of copyright lawsuits from artists, writers, and other creators whose works have been used as training data for generative AI models without explicit permission or compensation. The interpretation and application of "fair use" in the context of AI training data remain a hotly contested legal and ethical issue.

Perhaps the most ideologically charged aspect of the framework concerns free speech and content moderation. The primary "guardrails" outlined by the administration focus on ensuring "AI can pursue truth and accuracy without limitation" and, crucially, preventing government-driven censorship. The framework explicitly states that "Congress should prevent the United States government from coercing technology providers, including AI providers, to ban, compel, or alter content based on partisan or ideological agendas." It further suggests providing legal redress for Americans against government agencies that attempt to censor expression or dictate information on AI platforms.

This emphasis builds upon the administration’s earlier "anti-woke AI" executive order, which pushed federal agencies to adopt "ideologically neutral" AI systems. The language reflects a broader political discourse around alleged bias in algorithms and in content moderation practices on technology platforms. However, the framework’s distinction between government coercion and standard content moderation remains ambiguous. Critics such as Samir Jain, Vice President of Policy at the Center for Democracy and Technology, point to a potential contradiction: that same executive order could itself be interpreted as coercing AI companies to align with a specific ideological agenda. This ambiguity could complicate efforts by regulators to collaborate with platforms on critical issues like misinformation, election interference, and public safety risks, potentially hindering effective governance.

The framework also arrives amid a legal challenge brought by Anthropic, an AI company, against the Department of Defense. The company alleges First Amendment infringements after being labeled a supply-chain risk, a designation it claims is retaliation for its refusal to allow military use of its AI for mass surveillance or lethal autonomous weapons decisions. The administration’s public characterization of Anthropic and its CEO as "woke" further underscores the ideological undercurrents in the AI policy debate.

The Path Forward

The Trump administration’s AI legislative framework represents a significant policy statement, signaling a clear intent to centralize AI policymaking in Washington and prioritize an innovation-first, light-touch regulatory approach. While it seeks to provide a predictable environment for AI development, it simultaneously narrows the scope for states to act as early regulators of emerging risks and places a greater onus on parents and individual users to manage the safety of their digital interactions. The proposal has been met with enthusiasm from parts of the tech industry, eager for a clear national standard, but has drawn sharp criticism from advocates concerned about accountability, child protection, and the potential for unchecked development of powerful AI technologies. As the debate over AI governance continues, this framework is likely to shape future discussions and legislative efforts at both the federal and state levels, with significant implications for technology, society, and the economy.