At its recent Android Show: I/O Edition event, Google unveiled a suite of artificial intelligence features, branded under its Gemini Intelligence umbrella, that aim to change how users interact with their Android devices. These features go beyond simple voice commands: new "agentic AI" capabilities can complete multi-step tasks across several apps, browse the web on a user’s behalf, and turn dictated speech into cleanly formatted text. Alongside these automation tools, Google is rolling out "vibe-coded" widgets, which let users generate personalized interface elements from natural-language descriptions and open up a new kind of customization for the Android ecosystem.

The Dawn of Agentic Intelligence on Android

The concept of agentic AI represents a significant leap from the reactive voice assistants that have dominated mobile interfaces for the past decade. Unlike systems that merely execute discrete commands, an agentic AI is designed to understand a user’s broader intent, plan a sequence of actions, and carry them out across multiple applications, often without step-by-step instructions. Google’s move into this territory began with earlier, more limited demonstrations.

For instance, Google previewed initial agentic capabilities at the Samsung Galaxy S26 launch earlier this year, where Gemini handled basic transactional tasks such as ordering food or booking a ride. These early integrations hinted at a future in which AI could orchestrate interactions between otherwise separate apps to streamline everyday chores. Google then extended Gemini to more complex scenarios, such as securing a front-row spot in a spin class, or locating a class syllabus in Gmail and then searching online for relevant textbooks, all from a single prompt.

The latest announcements push these boundaries further. Android users will soon be able to start a multi-step process by pressing their phone’s power button and describing the desired outcome aloud. Whatever is currently on screen provides context, helping the assistant interpret the request and act on it. In one example Google showcased, Gemini copied a grocery list from a notes app and added those items to a shopping cart in a retail app, then waited for the user’s confirmation before checking out. This kind of cross-application coordination marks a shift from simple convenience toward genuine digital assistance, with the AI navigating apps on the user’s behalf.

Enhanced Web and System Integration

Beyond in-app automation, Google is also extending Gemini’s web and system-wide capabilities. An experimental auto-browse feature, first introduced in January for limited testing, is now making its way to Android devices. It lets Gemini navigate the web on its own to complete tasks such as booking appointments or gathering information, sparing users the work of opening a browser and searching manually.

Separately, deeper Gemini integration in Chrome for Android is slated for late June. Mirroring the desktop version, it will let users summarize lengthy web pages or ask specific questions about their content without leaving Chrome. The change turns the browser from a passive window onto the web into something closer to a research assistant that can distill long pages on demand.

Privacy and personalization remain critical considerations as this integration expands. Google’s "Personal Intelligence" feature, which lets Gemini learn user-specific details over time, will now be used to fill in forms automatically. Given the sensitivity of personal data, Google emphasized that the feature is strictly opt-in: users control when and how their personal information is used and can disable the feature at any time in device settings. That kind of user control over data will be crucial for building trust as AI systems become more deeply embedded in personal workflows.

Gemini is also being built directly into Gboard, Android’s keyboard. A new multimodal feature called "Rambler" uses Gemini to rethink dictation. Unlike traditional speech-to-text systems, Rambler transcribes speech while preserving the user’s natural tone, then formats the text intelligently, even removing filler words. The aim is to make spoken input as polished as typed text, which could particularly benefit users who rely on dictation for productivity or accessibility. Building this into Gboard also positions Google to challenge third-party AI-powered dictation apps with a native, deeply integrated alternative.

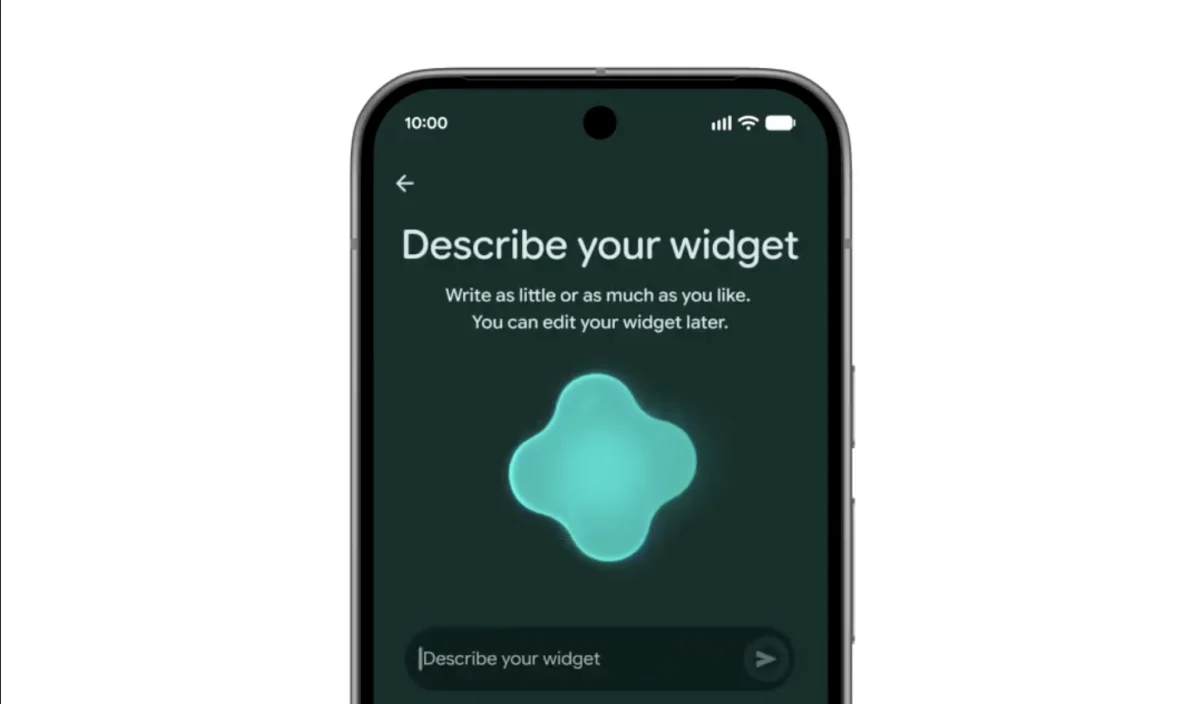

The Rise of Personalized Interfaces: "Vibe-Coded" Widgets

In parallel with the advancements in agentic AI, Google is introducing a novel approach to user interface customization through "vibe-coded" widgets. This feature empowers Android users to create highly personalized widgets using natural language prompts. Instead of selecting from a predefined library, users can simply describe the functionality and aesthetic they desire, and Gemini will generate a bespoke widget.

For example, a user could request a meal-planning widget with the query, "Suggest three high-protein meal prep recipes every week." Gemini would then create a widget tailored to this specific instruction, dynamically displaying relevant content. This concept represents a significant shift towards generative user interfaces, where the interface adapts to the user’s needs and preferences rather than the user adapting to predefined UI elements.

The idea of creating custom mini-applications or widgets through prompts is gaining traction within the tech industry. Notably, the hardware startup Nothing introduced a similar tool last year, part of a growing trend toward democratizing interface design and letting users shape their own digital environments. Google’s entry into this space, backed by Gemini, could accelerate adoption across the broader Android ecosystem. Because these personalized widgets follow Google’s Material 3 Expressive design language, they promise not only added functionality but also a more visually cohesive and enjoyable user experience.

Market Dynamics and Societal Implications

Google’s aggressive push into agentic AI and generative UI reflects a broader industry trend and a fierce competitive landscape. As large language models (LLMs) and generative AI mature, major tech players like Google, Microsoft, Apple, and OpenAI are vying for supremacy in integrating these technologies into everyday consumer products. For Google, these announcements are critical for maintaining Android’s position as a leading, innovative mobile platform against challenges from rivals like Apple, which is also expected to deepen its AI integrations.

The implications of these advancements are far-reaching. On the one hand, agentic AI promises unprecedented levels of productivity and convenience. By offloading mundane, multi-step digital tasks to an intelligent assistant, users could reclaim significant amounts of time and mental energy. The seamless flow between applications, the automated web interactions, and the refined dictation capabilities could make mobile devices more powerful tools for work, learning, and personal organization.

However, the proliferation of such powerful AI also raises important societal questions and challenges. Data privacy and security become paramount as AI agents gain access to more personal information and operate across sensitive applications like email, notes, and banking. Google’s opt-in approach to "Personal Intelligence" is a step towards addressing these concerns, but continuous vigilance and robust security measures will be essential.

Furthermore, the accuracy and reliability of these AI agents will be under constant scrutiny. Errors in multi-step tasks, misinterpretations of user intent, or biases embedded in the AI models could lead to frustration or to costlier mistakes, such as a wrong order or an unwanted booking. User trust will hinge on consistent performance and on transparency about what the agent is doing. There is also a risk of "over-reliance," where users lose some digital literacy or critical thinking if agents automate too many decisions.

The "vibe-coded" widgets, while offering creative freedom, also present questions regarding discoverability and potential fragmentation if every user designs a unique interface. However, the broader impact is likely to be positive, fostering a new wave of user-driven innovation and customization that makes Android devices feel even more personal and responsive to individual needs.

The Road Ahead

The rollout of these Gemini Intelligence features will begin this summer, initially targeting the latest Samsung Galaxy and Google Pixel devices, before expanding to other Android devices later in the year. This phased approach allows Google and its hardware partners to refine the experience and gather user feedback before a wider deployment.

Google’s vision is clear: to evolve Android from a collection of apps and services into a truly intelligent companion that anticipates needs, streamlines tasks, and adapts to the user’s unique "vibe." This ongoing transformation, driven by advancements in agentic AI and generative user interfaces, marks a pivotal moment in mobile computing, promising a future where our devices are not just tools, but proactive partners in our digital lives. The race to define the next generation of human-computer interaction is well underway, and Google’s latest offerings position Android at the forefront of this intelligent evolution.