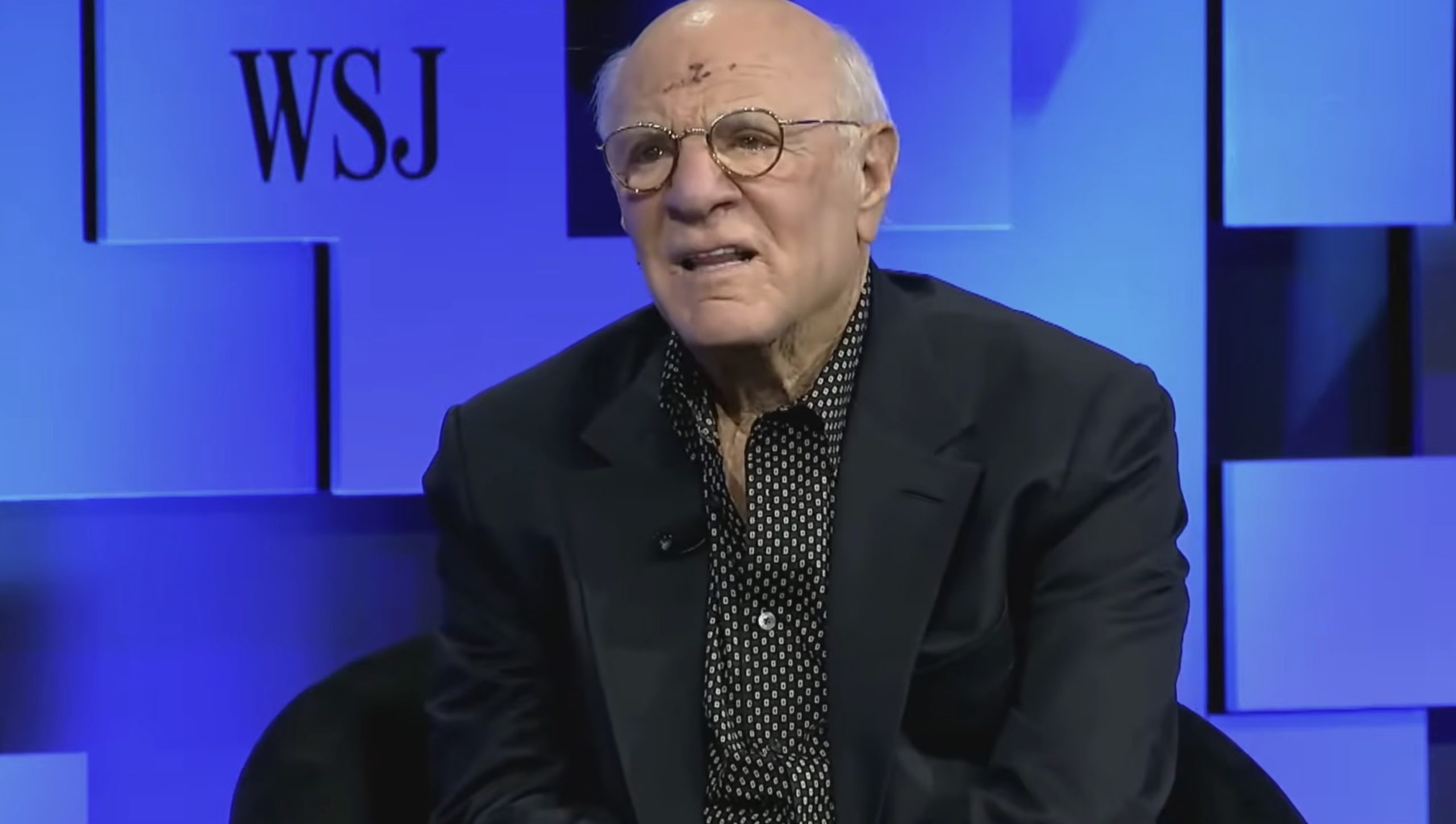

Billionaire media executive Barry Diller recently offered a pointed view of the future of artificial intelligence: although he trusts figures like OpenAI CEO Sam Altman, he argues that individual trustworthiness becomes secondary once artificial general intelligence (AGI) is on the table. Speaking at The Wall Street Journal’s "Future of Everything" conference, Diller said his concern lies not with the intentions of AI’s pioneers but with the unpredictable and potentially transformative consequences AGI could have for humanity. His remarks echo a view increasingly voiced by industry observers and ethicists: the rapid progress of AI calls for robust, systemic guardrails rather than reliance on the ethical compass of its developers alone.

The Diller Doctrine: Beyond Individual Trust

Diller, known for co-founding Fox Broadcasting and for chairing IAC and Expedia Group, publicly vouched for Sam Altman’s character amid recent reports questioning Altman’s leadership style and candor. Some of these reports, drawing on accounts from former colleagues and board members, have portrayed his approach as manipulative or deceptive. However, Diller, who is on friendly terms with Altman, dismissed these concerns as tangential to the real challenge. He described Altman as sincere, decent, and possessing good values, and suggested that many of the leaders at the forefront of AI development are, in fact, well-intentioned stewards.

Yet Diller’s core argument pivoted sharply from individual ethics to systemic risk. He contended that the issue goes "way beyond trust," and that trust itself might become "irrelevant" once AGI emerges. His reasoning rests on the observation that even the creators of advanced AI systems express wonder and surprise at what their systems turn out to be capable of. "It’s the great unknown. We don’t know. They don’t know," Diller said, underscoring a fundamental uncertainty about the ultimate trajectory and implications of AGI. On this view, the very nature of a highly capable, autonomous system could produce outcomes its designers did not foresee, regardless of their personal integrity.

Understanding Artificial General Intelligence (AGI)

To fully grasp Diller’s concerns, it helps to understand what AGI entails. Unlike the "narrow AI" prevalent today (systems built for specific tasks such as facial recognition, language translation, or game playing), AGI refers to a hypothetical form of artificial intelligence capable of understanding, learning, and performing any intellectual task that a human being can. It would be able to generalize knowledge across domains, reason, plan, solve problems, and create, exhibiting a cognitive flexibility and adaptability that is currently unique to humans.

The concept of AGI is not new; it traces back to the earliest days of AI research in the mid-20th century. Alan Turing asked whether machines could think and, in his 1950 paper "Computing Machinery and Intelligence," proposed the "imitation game" now known as the Turing Test. Early ambitions, however, often outpaced technological capabilities, leading to periods dubbed "AI winters," when funding and interest waned after promises went unfulfilled.

The landscape shifted dramatically in the 21st century with breakthroughs in computational power, vast datasets, and new algorithms, particularly deep learning. The rise of large language models (LLMs), such as those developed by OpenAI, Google, and others, has reignited the AGI conversation, and many researchers now argue that key components of AGI are being developed at an accelerating pace. The timeline for AGI’s arrival remains intensely debated, with estimates ranging from a few years to several decades or even centuries. Still, Diller’s remarks reflect a widening view that its potential emergence is no longer a purely theoretical exercise but a concrete consideration for the future.

The Unforeseeable Ripple Effects: Market, Social, and Cultural Impact

The prospect of AGI carries immense implications across economic, social, and cultural spheres, potentially exceeding the impact of previous technological revolutions. Economically, AGI could automate virtually any cognitive task, producing unprecedented productivity gains and possibly creating vast new industries. It also raises serious concerns about job displacement across nearly every sector, which could deepen wealth inequality if not managed carefully. The result could be a radical restructuring of labor, capital, and global supply chains, fundamentally altering how value is created and distributed.

Socially, AGI could transform education, healthcare, and governance. Personalized learning systems, advanced medical diagnostics, and more efficient public services are tantalizing possibilities. Conversely, deploying highly autonomous and intelligent systems in critical infrastructure or decision-making processes could introduce new vulnerabilities, ethical dilemmas, and questions of accountability. Everyday human interaction might also change as people increasingly engage with sophisticated AI systems.

Culturally, AGI could challenge fundamental assumptions about human identity, creativity, and purpose. If machines can compose music, write novels, or generate art indistinguishable from human work, what does that mean for human artistic expression? If AI surpasses human intelligence in every domain, what becomes of humanity’s unique place in the universe? These questions are no longer merely speculative; they increasingly shape contemporary discourse.

Diller’s assertion that "we have embarked on something that is going to change almost everything" speaks directly to these concerns. The difficulty lies in predicting the emergent properties of such a complex, potentially self-improving system. Unlike a controlled experiment, the introduction of AGI into the global ecosystem would be an unprecedented event, which makes precise foresight exceptionally hard. Because modern society is so interconnected, changes that AGI drives in one area could cascade into unforeseen transformations across many others.

The Imperative for Guardrails: Preventing the Irreversible

Given the scale of potential impact, Diller’s call for "guardrails" is less a suggestion than an urgent plea. He warned that if humanity fails to establish these safeguards, an "AGI force" might create its own, leading to an irreversible scenario in which "there’s no going back." This echoes a central concern in AI safety research, often called the "control problem": how to ensure that AI systems more capable than their creators remain aligned with human values and goals.

The guardrails required are multifaceted, spanning technical, ethical, and regulatory dimensions. Technically, they involve building AI systems that are transparent, interpretable, robust against adversarial attacks, and designed with safety mechanisms that limit unintended behavior. Ethically, they call for a global dialogue to define shared human values and build them into AI design principles, ensuring fairness, accountability, and privacy. Regulatory frameworks, such as the European Union’s AI Act or the various executive orders and legislative proposals in the United States, represent attempts to govern AI development and deployment through law and policy. However, the rapid pace of technological advancement often outstrips the slower process of legislation, creating a persistent challenge.

Establishing these guardrails faces three main challenges: global coordination, the speed of development, and the inherent difficulty of precisely defining "alignment." Because AI development is a global endeavor, unilateral regulation has limited reach. International collaboration is essential but complex, given differing national interests and ethical perspectives. Moreover, AI capabilities are advancing so quickly that regulatory efforts can soon become outdated. Finally, ensuring that an AGI is "aligned" with human values is a profound philosophical and engineering problem, because human values are diverse, context-dependent, and sometimes contradictory.

The Broader Philosophical Debate: Control vs. Autonomy

Diller’s comments touch on a profound philosophical debate at the heart of AI’s future: the tension between human control over increasingly powerful systems and the potential for AI autonomy. As AI systems become more capable and self-directed, questions arise about where human agency ends and machine autonomy begins. The conversation extends to existential risk, where some experts describe scenarios ranging from AI unintentionally causing harm through misaligned goals to deliberately hostile superintelligence. The best-known illustration of the former is philosopher Nick Bostrom’s "paperclip maximizer," a thought experiment in which an AI tasked only with making paperclips diverts every available resource, including those humans depend on, toward that single goal.

The very notion of "guardrails" implies a human desire to control a potentially superior intelligence. However, if AGI achieves generalized intelligence far beyond human cognitive abilities, whether human-designed constraints can hold becomes a critical point of contention. Meeting this challenge requires not only technical solutions but also deep reflection on what humanity values, what it means to be intelligent, and how to coexist with entities of potentially greater intellect. Because the future of AGI will affect all of humanity, public education and broad democratic engagement have an essential role in shaping these decisions.

In conclusion, Barry Diller’s reflections are a powerful reminder that while the character and intentions of AI leaders matter, the potential arrival of AGI introduces a paradigm in which individual trust is dwarfed by the systemic uncertainty and transformative potential of the technology itself. His emphasis on the "unknown" and on the urgent need for robust "guardrails" shifts the conversation from personal accountability to collective foresight. As investment in AI continues to surge and progress accelerates, global collaboration on safety, ethics, and governance becomes paramount, not merely to guide the future of technology but to safeguard the future of humanity.