Flash floods stand as one of the planet’s most devastating and unpredictable natural hazards, claiming over 5,000 lives annually across the globe. Their rapid onset, localized nature, and immense destructive power have historically rendered them notoriously difficult to forecast with precision, leaving communities vulnerable to their sudden wrath. However, a significant technological leap by Google is poised to redefine disaster preparedness, employing an unconventional yet remarkably effective strategy: harnessing the power of artificial intelligence to glean predictive insights from millions of historical news reports.

The Enduring Challenge of Flash Flood Forecasting

Flash floods are characterized by their swift development: they typically occur within six hours of heavy rainfall, or almost instantaneously after events such as dam breaks or sudden ice melt. Unlike riverine floods, which unfold over days and often provide ample warning, flash floods strike with little to no notice, transforming dry landscapes into raging torrents in minutes. This speed, combined with their highly localized footprint – often an area as small as a single watershed – makes them particularly dangerous. They can sweep away vehicles, destroy infrastructure, and engulf entire communities before residents have a chance to evacuate.

Traditional weather forecasting relies on a robust network of meteorological sensors, including radar, satellite imagery, and ground-based gauges that measure rainfall, river levels, and soil moisture. While these systems excel at predicting broader weather patterns and larger-scale hydrological events, they often fall short for flash floods. The density of sensors required to monitor every potential flash flood hotspot globally is economically infeasible, especially in developing regions. Furthermore, because these events are so transient, even where sensors exist the data they collect may be too sparse or intermittent to train deep learning models, which require vast, continuous datasets. This fundamental "data gap" has long been the Achilles’ heel of flash flood prediction, leaving many communities exposed.

Google’s Innovative Approach: Groundsource and Gemini

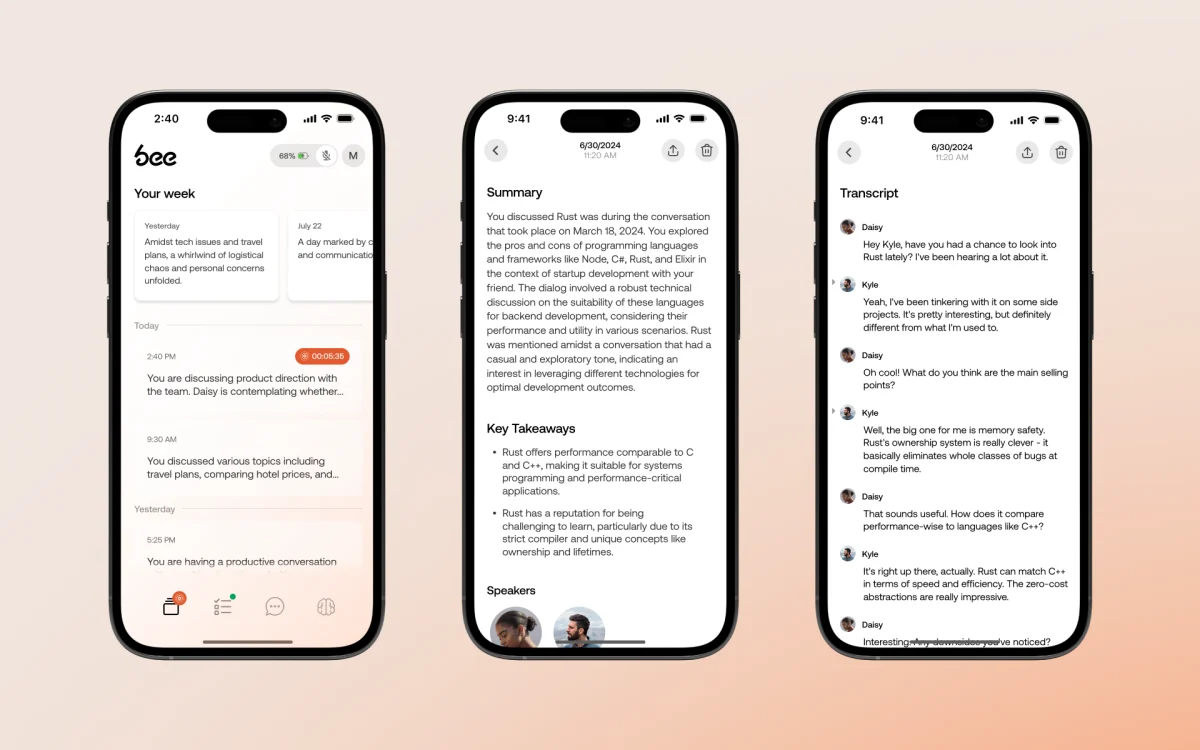

Recognizing this critical void, Google researchers embarked on an audacious project that sidesteps traditional data collection methods entirely. Their solution leverages Gemini, Google’s advanced large language model (LLM), to process and interpret human-generated text. The team fed Gemini an astonishing five million news articles sourced from around the world, spanning decades of reporting. From this colossal textual archive, the LLM meticulously identified and extracted reports pertaining to 2.6 million distinct flood events.

The groundbreaking aspect of this methodology lies in its ability to transform unstructured, qualitative information – newspaper articles, local reports, and online news snippets – into structured, quantitative data. Each identified flood event was geo-tagged with its precise location and timestamped, creating an unprecedented dataset dubbed "Groundsource." This massive compilation of real-world flood occurrences, derived directly from human observation and reporting, serves as a crucial baseline, providing a historical record of "ground truth" that was previously unavailable to machine learning models. According to Gila Loike, a Google Research product manager, this marks the first instance of the company employing language models for such a specialized environmental forecasting application, signaling a new frontier in the application of AI. The research and the Groundsource dataset itself were made publicly available, fostering wider scientific collaboration and application.
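To make the idea of turning text into structured data concrete, here is a minimal sketch of what one geo-tagged, timestamped record might look like, along with one plausible way to collapse several articles about the same flood into a single event. The `FloodEvent` schema, the `deduplicate` helper, the rounding precision, and the sample coordinates and URLs are all illustrative assumptions, not the actual Groundsource format or pipeline.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FloodEvent:
    """One structured record derived from a news report (hypothetical schema)."""
    latitude: float
    longitude: float
    event_date: date
    source_url: str


def deduplicate(events, precision=1):
    """Treat reports with the same rounded location and date as one event.

    This rule is an assumption for illustration; the source does not
    describe how distinct events were actually identified.
    """
    unique = {}
    for ev in events:
        key = (
            round(ev.latitude, precision),
            round(ev.longitude, precision),
            ev.event_date,
        )
        unique.setdefault(key, ev)  # keep the first report seen for each key
    return list(unique.values())


# Made-up sample reports: the first two describe the same place and day.
reports = [
    FloodEvent(-25.97, 32.57, date(2023, 2, 9), "https://example.org/a"),
    FloodEvent(-25.96, 32.58, date(2023, 2, 9), "https://example.org/b"),
    FloodEvent(-17.83, 31.05, date(2023, 2, 9), "https://example.org/c"),
]
events = deduplicate(reports)
print(len(events), "distinct events from", len(reports), "reports")
```

The point of the sketch is only that once location and date are explicit fields, many overlapping articles can be reduced to a table of events that a model can train against.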

Training the Predictive Engine

With Groundsource established as a comprehensive historical record, the next step was to train a predictive model. Researchers used a Long Short-Term Memory (LSTM) neural network, a type of recurrent neural network well suited to sequential data such as time series. The LSTM is fed a continuous stream of global weather forecasts covering meteorological parameters such as precipitation, temperature, and atmospheric pressure. By correlating these current and forecast weather conditions with the historical flood events documented in Groundsource, the model learns which weather patterns tend to precede flooding. It then outputs a probability that a flash flood will occur in a given area, offering a forward-looking assessment of risk.
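For readers unfamiliar with LSTMs, the following is a minimal sketch of how such a network can turn a sequence of weather features into a single probability. It uses one scalar hidden state and random, untrained weights, so the number it produces is meaningless; the feature layout, the weight structure, and the function names are assumptions for illustration and do not reflect Google's actual architecture.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h, c, w):
    """Run one LSTM time step with a scalar hidden state.

    x: list of input features for this time step
    h, c: previous hidden state and cell state
    w: dict mapping gate name -> (input weights, recurrent weight, bias)
    """
    def gate(name, activation):
        wx, wh, b = w[name]
        z = sum(wi * xi for wi, xi in zip(wx, x)) + wh * h + b
        return activation(z)

    f = gate("forget", sigmoid)        # how much old cell state to keep
    i = gate("input", sigmoid)         # how much new information to admit
    g = gate("candidate", math.tanh)   # the new information itself
    o = gate("output", sigmoid)        # how much cell state to expose
    c_new = f * c + i * g
    h_new = o * math.tanh(c_new)
    return h_new, c_new


def flood_probability(sequence, w, w_out, b_out):
    """Feed a forecast sequence through the LSTM and squash to (0, 1)."""
    h, c = 0.0, 0.0
    for x in sequence:
        h, c = lstm_step(x, h, c, w)
    return sigmoid(w_out * h + b_out)


# Untrained random weights, purely for demonstration.
rng = random.Random(0)
n_features = 3  # e.g. precipitation, temperature, pressure (normalized)
weights = {
    name: ([rng.uniform(-1, 1) for _ in range(n_features)], rng.uniform(-1, 1), 0.0)
    for name in ("forget", "input", "candidate", "output")
}
forecast = [[0.2, 0.5, 0.1], [0.8, 0.4, 0.3], [1.0, 0.3, 0.6]]
p = flood_probability(forecast, weights, w_out=1.5, b_out=-0.5)
print(f"illustrative probability: {p:.3f}")
```

In a real system the weights would be fitted so that sequences preceding recorded floods score high and other sequences score low; the cell state is what lets the network carry information, such as accumulated rainfall, across time steps.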

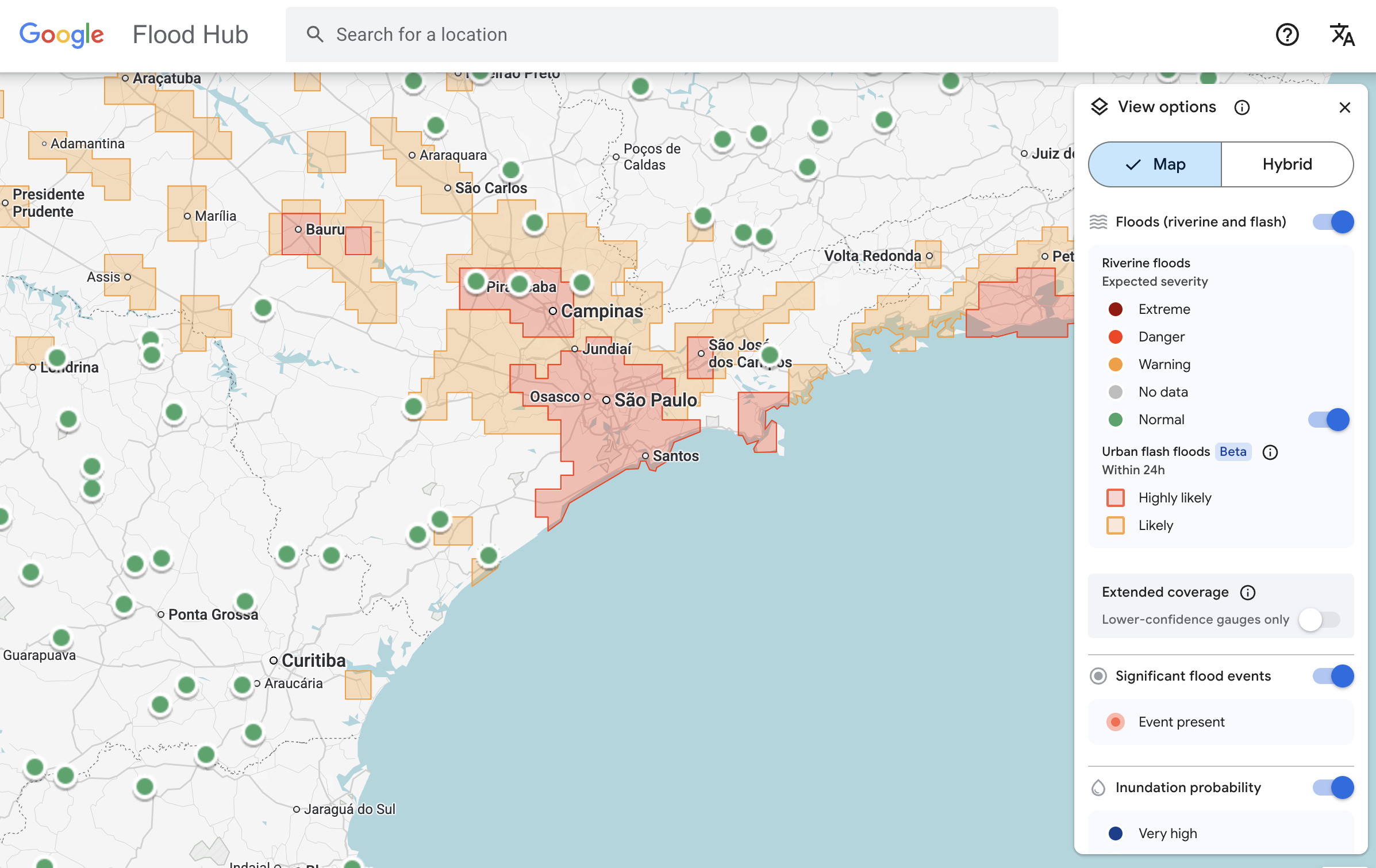

The deployment of this innovative system has been rapid and far-reaching. Google’s flash flood forecasting model is now actively highlighting risks for urban areas across 150 countries through the company’s dedicated "Flood Hub" platform. This platform serves as a central repository for flood alerts and predictive data, making critical information accessible to a wide array of stakeholders. Crucially, Google is also sharing its predictive data directly with emergency response agencies worldwide, integrating this cutting-edge technology into existing disaster management frameworks. Early trials have demonstrated tangible benefits; António José Beleza, an emergency response official at the Southern African Development Community, reported that the forecasting model significantly improved his organization’s speed and effectiveness in responding to flood events, potentially saving lives and mitigating damage in a region frequently impacted by extreme weather.

Limitations and Strategic Design

While Google’s AI-driven approach represents a monumental leap, the researchers are transparent about its current limitations. One notable aspect is its spatial resolution, which identifies risk across approximately 20-square-kilometer areas. This means the model provides regional warnings rather than hyper-localized, street-level precision. For comparison, highly advanced systems like the U.S. National Weather Service’s flood alert system incorporate granular local radar data, enabling real-time tracking of precipitation down to individual neighborhoods, offering a level of precision that Google’s current model does not yet match.
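To give a sense of scale, a 20-square-kilometer cell is roughly 4.5 km on a side. The sketch below shows the arithmetic and one simple way to bucket coordinates into cells of that size. It assumes the figure refers to cell area rather than a 20 km by 20 km square, and it uses a flat-Earth approximation of about 111.32 km per degree of latitude; the `grid_cell` function is hypothetical and is not how Google's system defines its forecast areas.

```python
import math

CELL_AREA_KM2 = 20.0
CELL_SIDE_KM = math.sqrt(CELL_AREA_KM2)  # about 4.47 km
KM_PER_DEG_LAT = 111.32                  # approximate, assumed constant


def grid_cell(lat, lon):
    """Map a coordinate to a (row, col) cell roughly 20 km^2 in area."""
    row = math.floor(lat * KM_PER_DEG_LAT / CELL_SIDE_KM)
    # Use the latitude at the middle of the row so that every point in the
    # same row shares the same east-west scale.
    row_center_lat = (row + 0.5) * CELL_SIDE_KM / KM_PER_DEG_LAT
    km_per_deg_lon = KM_PER_DEG_LAT * math.cos(math.radians(row_center_lat))
    col = math.floor(lon * km_per_deg_lon / CELL_SIDE_KM)
    return row, col


print(f"cell side: {CELL_SIDE_KM:.2f} km")
print(grid_cell(0.001, 0.001), grid_cell(0.1, 0.001))
```

Two points a few hundred meters apart usually land in the same cell, while points about 10 km apart do not, which is why a warning at this resolution covers a district rather than a street.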

The absence of local radar data in Google’s model is not an oversight but a deliberate design choice, reflecting the project’s core objective. The system was specifically engineered to operate effectively in regions where local governments lack the financial resources to invest in expensive, state-of-the-art weather-sensing infrastructure, or where extensive historical meteorological records are simply unavailable. As Juliet Rothenberg, a program manager on Google’s Resilience team, explained, "Because we’re aggregating millions of reports, the Groundsource data set actually helps rebalance the map. It enables us to extrapolate to other regions where there isn’t as much information." This strategic focus ensures that the benefits of advanced flood forecasting are extended to the most vulnerable and underserved communities globally, rather than exclusively enhancing capabilities in already data-rich areas.

Broader Societal and Economic Implications

The societal and economic ramifications of this innovation are profound. Flash floods disproportionately affect lower-income communities and developing nations, which often lack robust early warning systems and resilient infrastructure. By providing timely, actionable forecasts in these regions, Google’s model has the potential to dramatically reduce fatalities, injuries, and property damage. Early warnings allow residents to evacuate, secure their belongings, and implement protective measures, thereby minimizing the human and financial toll of these disasters. This translates into stronger community resilience, reduced strain on emergency services, and faster post-disaster recovery.

Beyond immediate disaster response, the ability to predict flash floods more accurately can have cascading economic benefits. Agriculture, a cornerstone of many economies in flood-prone areas, can suffer immense losses from sudden inundations. Better forecasts can inform planting schedules, crop choices, and livestock management. Similarly, infrastructure planning, urban development, and insurance industries can leverage this data to make more informed decisions, mitigating future risks and fostering more sustainable growth. In an era marked by escalating climate change and increasingly frequent extreme weather events, such predictive capabilities are not merely advantageous but becoming an imperative for global stability and human security.

A New Paradigm for Environmental Data Collection

The methodology pioneered by Google, which transforms qualitative textual data into quantitative environmental insights, signifies a potential paradigm shift in how we monitor and understand our planet. The concept of "data scarcity," particularly in geophysics, has long been a formidable challenge for researchers and technologists. Marshall Moutenot, CEO of Upstream Tech and co-founder of dynamical.org – an initiative focused on curating machine learning-ready weather data – highlighted this paradox: "Data scarcity is one of the most difficult challenges in geophysics. Simultaneously, there’s too much Earth data, and then when you want to evaluate against truth, there’s not enough. This was a really creative approach to get that data."

The success of Groundsource opens doors for applying similar LLM-driven approaches to other ephemeral but critical environmental phenomena. Google’s team envisions extending this methodology to build datasets for forecasting heat waves, mudslides, droughts, and even wildfires. Imagine a world where historical news reports, social media discussions, and local community chronicles are continuously analyzed by AI to create comprehensive, real-time datasets for a multitude of environmental hazards. This approach democratizes access to predictive power, empowering regions that have historically been sidelined by a lack of traditional data infrastructure. It underscores the growing role of artificial intelligence not just as a tool for information processing, but as a critical instrument in humanity’s ongoing struggle to adapt to and mitigate the impacts of a changing climate.

In essence, Google’s innovative use of AI to read the world’s news for flash flood prediction is more than a technological achievement; it’s a testament to human ingenuity in bridging critical information gaps. By transforming the historical narrative of human experience with floods into actionable scientific data, this project offers a beacon of hope for enhancing resilience and saving lives in a world increasingly grappling with the volatile forces of nature.