The long-standing quest to accurately measure developer productivity has entered a new, complex era with the widespread adoption of artificial intelligence coding agents. For decades, software engineering managers grappled with metrics ranging from the rudimentary "lines of code" (LOC) to more sophisticated agile methodologies focusing on story points and velocity. However, the advent of generative AI tools, capable of churning out vast quantities of code at unprecedented speeds, has thrown traditional measurement paradigms into disarray, revealing a critical disconnect between perceived efficiency and tangible value.

The Elusive Metric of Developer Productivity

The challenge of quantifying software development output is as old as the industry itself. Early attempts, like counting lines of code, quickly proved fallacious, often incentivizing verbosity over conciseness and quality. The focus then shifted to more abstract measures, such as function points, which aimed to quantify the functional user requirements delivered by software. With the rise of agile development in the early 2000s, metrics like story points, sprint velocity, and burndown charts became prevalent, attempting to gauge team throughput and progress in a more collaborative and iterative environment. Yet, even these metrics, while superior to LOC, often struggled to capture the nuanced contributions of individual engineers, the impact of technical debt, or the long-term maintainability of codebases.

In recent years, the industry has seen a push towards more holistic frameworks like DORA (DevOps Research and Assessment) metrics, which focus on lead time for changes, deployment frequency, mean time to recovery, and change failure rate. These metrics emphasize outcomes related to delivery speed, reliability, and stability, moving beyond mere input or immediate output. This historical context underscores a fundamental truth: what gets measured tends to improve, but measuring the wrong thing can lead to perverse incentives and a skewed understanding of true progress. The current fascination with "tokenmaxxing"—the practice of maximizing the consumption of AI processing power—appears to be the latest iteration of this measurement dilemma, albeit with a new technological twist.
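
To make the four DORA metrics named above concrete, here is a minimal sketch of how they could be derived from a list of deployment records. The `Deployment` record, its field names, and the sample data are assumptions made for illustration; they are not taken from any DORA tooling or from the vendors discussed in this article.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                   # when the change was committed
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # did it cause a production failure?
    restored_at: Optional[datetime] = None   # when service was restored, if it failed


def dora_metrics(deployments, window_days):
    """Compute the four DORA metrics over an observation window in days."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")

    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    recoveries = [d.restored_at - d.deployed_at for d in failures if d.restored_at]

    return {
        # Lead time for changes: average commit-to-production time.
        "lead_time_hours": mean(t.total_seconds() for t in lead_times) / 3600,
        # Deployment frequency: deployments per day in the window.
        "deploys_per_day": len(deployments) / window_days,
        # Change failure rate: share of deployments that caused a failure.
        "change_failure_rate": len(failures) / len(deployments),
        # Mean time to recovery: average failure-to-restoration time.
        "mean_time_to_recovery_hours": (
            mean(r.total_seconds() for r in recoveries) / 3600 if recoveries else None
        ),
    }


# Illustrative data only: two deployments in a two-day window, one of which failed.
sample = [
    Deployment(datetime(2024, 1, 1, 0), datetime(2024, 1, 1, 12)),
    Deployment(
        datetime(2024, 1, 1, 0),
        datetime(2024, 1, 2, 0),
        failed=True,
        restored_at=datetime(2024, 1, 2, 2),
    ),
]
metrics = dora_metrics(sample, window_days=2)
print(metrics)
```

None of these four numbers counts code volume or tokens consumed, which is the contrast with input-style measures that the paragraph above draws.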

The Rise of AI-Powered Coding and the "Token Economy"

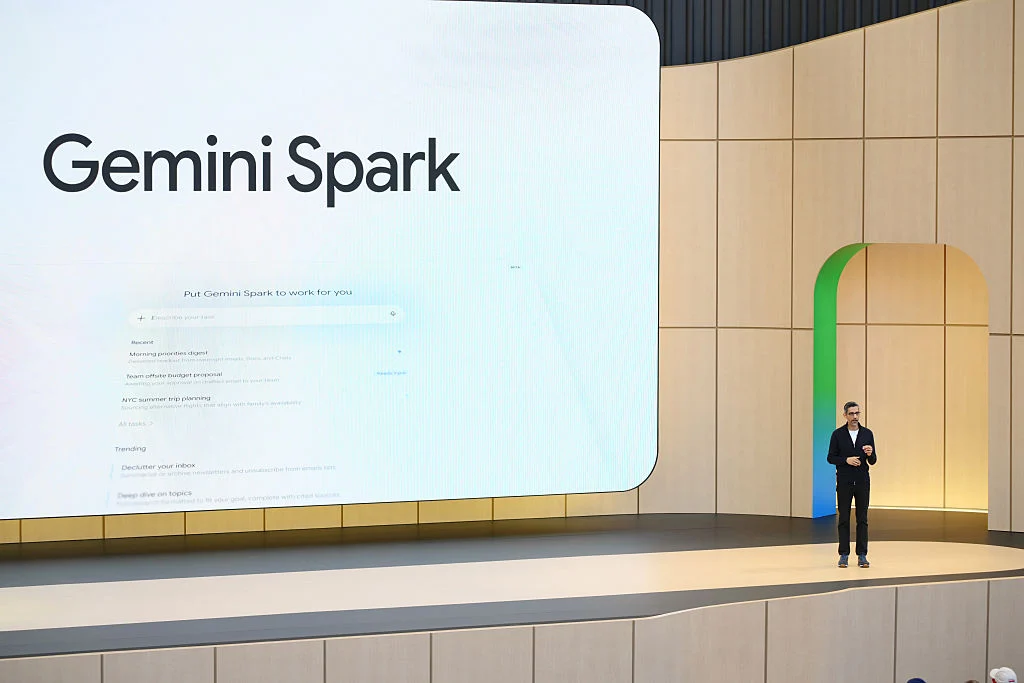

The integration of artificial intelligence into the software development lifecycle began subtly with intelligent autocomplete features and syntax checkers. However, the landscape dramatically shifted with the emergence of powerful large language models (LLMs) specifically trained on vast datasets of code. Tools like GitHub Copilot, Amazon CodeWhisperer, and Google’s Codey, along with specialized platforms such as Claude Code and Cursor, have revolutionized how developers interact with their integrated development environments (IDEs). These tools can generate entire functions, suggest code completions, refactor existing code, and even translate code between languages, all in response to natural language prompts.

The underlying mechanism for these AI models involves "tokens," the units of text or code that a model reads and generates. A "token budget" is the amount of AI processing a developer is authorized to consume, and it often correlates with the complexity or volume of code the AI is asked to generate or analyze. In the fast-paced, innovation-driven culture of Silicon Valley and beyond, a substantial token budget has, for some, become an unofficial badge of honor. It signifies access to advanced computational resources and a perceived commitment to leveraging cutting-edge AI for enhanced productivity. However, this cultural phenomenon, dubbed "tokenmaxxing," represents a dangerous simplification of productivity. It prioritizes an input, the consumption of AI resources, over the actual output that contributes to a stable, functional, and maintainable software product. Encouraging token use might boost AI adoption or benefit AI service providers, but it offers little insight into whether development teams are becoming genuinely more efficient or producing higher-quality software.

Initial Gains Versus Lingering Liabilities: The Churn Conundrum

While the initial allure of AI coding agents is undeniable, promising a future of hyper-efficient development, accumulating evidence from specialized analytics firms suggests a more nuanced reality. These companies, operating in the burgeoning "developer productivity insight" sector, are beginning to expose a significant gap between the immediate perception of productivity gains and the long-term impact on code quality and maintainability.

One of the most striking findings revolves around "code churn," which refers to the rate at which newly written code is subsequently modified, rewritten, or deleted. Initial observations often show high "acceptance rates" for AI-generated code, with engineering managers reporting that 80% to 90% of the code proposed by AI tools is approved and integrated into the codebase. This seems to indicate a substantial boost in immediate throughput.

However, firms like Waydev, which provides developer analytics and has recently re-engineered its platform to track AI-generated metadata, reveal a deeper, more problematic trend. Alex Circei, Waydev’s CEO and founder, highlights that while initial acceptance is high, engineers are frequently forced to revisit and revise that "accepted" code in the weeks following its initial integration. This subsequent churn dramatically drives down the real-world acceptance rate, sometimes to as low as 10% to 30% of the original AI-generated code. This means a significant portion of the code initially deemed acceptable ultimately requires substantial human intervention, negating much of the supposed efficiency gain.
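
The gap between initial acceptance and what survives can be expressed as simple arithmetic. The sketch below is an assumption about how such a figure might be computed; the source does not describe Waydev's actual method, and the function name and line counts are hypothetical.

```python
def effective_acceptance_rate(lines_suggested, lines_accepted, lines_churned):
    """Share of AI-suggested lines that were accepted AND survived later revision.

    lines_churned counts accepted lines that were later modified, rewritten,
    or deleted within the observation period.
    """
    if lines_suggested <= 0:
        raise ValueError("lines_suggested must be positive")
    if not 0 <= lines_churned <= lines_accepted <= lines_suggested:
        raise ValueError("expected 0 <= churned <= accepted <= suggested")
    return (lines_accepted - lines_churned) / lines_suggested


# Illustrative numbers only, chosen to fall inside the ranges quoted above.
suggested, accepted, churned = 1000, 850, 650
initial_rate = accepted / suggested
surviving_rate = effective_acceptance_rate(suggested, accepted, churned)
print(f"initial acceptance: {initial_rate:.0%}")
print(f"acceptance after churn: {surviving_rate:.0%}")
```

With these made-up inputs, an 85% initial acceptance rate falls to 20% once the churned lines are subtracted, which is the pattern Circei describes.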

Further corroborating these findings, GitClear, another prominent company in this space, published a report in January detailing how regular AI users, despite increasing their overall code output, experienced 9.4 times higher code churn than their non-AI counterparts. That churn multiple is more than double the productivity gain these tools were observed to provide, suggesting that the velocity increase comes at a substantial cost in code stability and maintainability. Similarly, Faros AI, drawing on two years of customer data for its March 2026 report, identified an 861% increase in code churn under conditions of high AI adoption, an indication that the sheer volume of generated code is often accompanied by a comparable increase in necessary revisions and refactorings.
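
The two churn figures above are stated in different forms, one as a multiple and one as a percentage increase. A small conversion helps when comparing them. The helper names below are hypothetical, and the sketch assumes "9.4 times higher" is read as a 9.4x multiple of the baseline, which the source does not spell out.

```python
def percent_increase_to_multiplier(percent_increase):
    """An N% increase means the new value is (1 + N/100) times the baseline."""
    return 1 + percent_increase / 100


def multiplier_to_percent_increase(multiplier):
    """A value that is M times the baseline has increased by (M - 1) * 100 percent."""
    return (multiplier - 1) * 100


# Figures quoted in the text; the conversions themselves are plain arithmetic.
faros_multiplier = percent_increase_to_multiplier(861)
gitclear_percent = multiplier_to_percent_increase(9.4)
print(f"861% increase is about {faros_multiplier:.2f}x the baseline")
print(f"9.4x the baseline is about a {gitclear_percent:.0f}% increase")
```

On that reading the two reports describe churn of a similar order: roughly 9.6 times the baseline in one case and 9.4 times in the other.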

Unpacking the Cost: Volume Over Value?

The implications of this high code churn extend beyond mere inconvenience; they carry significant economic and operational costs. While AI tools can rapidly generate code, this speed does not automatically translate into value. In fact, it often introduces "technical debt" at an accelerated pace. Technical debt, analogous to financial debt, represents the deferred cost of choosing an easier, faster solution now instead of a better, more robust approach that would take longer. AI-generated code, particularly when not meticulously reviewed and refined, can be overly generic, inefficient, or simply incorrect for specific architectural needs, leading to future rework, debugging, and maintenance challenges.

Jellyfish, an intelligence platform specializing in AI-integrated engineering, conducted an analysis in the first quarter of 2026 across 7,548 engineers. Their findings offered a stark illustration of the "volume over value" dilemma. Engineers with the largest token budgets did indeed produce the highest number of pull requests (proposed changes to a shared codebase). However, the productivity improvement did not scale proportionally with the increased AI resource consumption. The study found that these high-token users achieved roughly two times the throughput (in terms of pull requests) at approximately ten times the cost in tokens, which works out to roughly five times the token spend per pull request. This disparity highlights a crucial economic inefficiency: organizations are paying a premium for AI processing power that delivers a disproportionately smaller return in terms of truly valuable, stable code. This translates to wasted computational resources, increased cloud costs for AI services, and ultimately, higher operational expenditures without a corresponding increase in long-term software quality or business value.
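
The arithmetic behind that disparity is short enough to spell out. The sketch below takes only the two approximate multiples quoted above (about 2x throughput, about 10x token cost) as inputs; the function names are hypothetical and nothing here reproduces Jellyfish's underlying data.

```python
def relative_cost_per_unit(throughput_multiple, cost_multiple):
    """How many times more is spent per unit of output than at baseline."""
    if throughput_multiple <= 0 or cost_multiple <= 0:
        raise ValueError("multiples must be positive")
    return cost_multiple / throughput_multiple


def relative_output_per_unit_cost(throughput_multiple, cost_multiple):
    """Output obtained per unit of spend, relative to baseline (1.0 = unchanged)."""
    return 1 / relative_cost_per_unit(throughput_multiple, cost_multiple)


# Approximate multiples reported in the text for the highest-token users.
cost_per_pr = relative_cost_per_unit(throughput_multiple=2, cost_multiple=10)
prs_per_token = relative_output_per_unit_cost(throughput_multiple=2, cost_multiple=10)
print(f"token cost per pull request: {cost_per_pr:.1f}x baseline")
print(f"pull requests per token: {prs_per_token:.1f}x baseline")
```

Read this way, each pull request from the heaviest token users costs about five times as many tokens as a baseline pull request, or equivalently each token buys about a fifth as much throughput.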

Impact on the Engineering Workforce: A Divided Experience

The introduction of AI coding agents is also reshaping the roles and responsibilities within engineering teams, creating a bifurcated experience for developers. While many engineers express enthusiasm for these tools, appreciating the accelerated pace and the ability to offload repetitive tasks, they simultaneously grapple with the mounting burden of code review and technical debt.

A recurring observation is the differential impact on junior versus senior engineers. Junior developers, often eager to leverage new technologies and sometimes lacking the deep architectural understanding or critical code review experience of their senior counterparts, tend to accept a much higher proportion of AI-generated code. This immediate acceptance, while seemingly productive, frequently leads to a greater amount of subsequent rewriting and refactoring. Senior engineers, with their extensive experience and deeper understanding of system complexities, are typically more discerning, spending more time reviewing and correcting AI output. This suggests that while AI can democratize code generation, it also elevates the importance of human expertise in critical evaluation and strategic architectural design. The role of the developer is evolving from purely writing code to increasingly becoming a "prompt engineer," a critical reviewer, and a quality assurance specialist, ensuring that the AI’s output aligns with project requirements and long-term maintainability standards.

The Emergence of "Developer Productivity Insight" Platforms

The challenges posed by AI-driven development have spurred the growth of a new category of enterprise software: "developer productivity insight" platforms. These companies are building sophisticated intelligence layers to track the intricate dynamics of modern software development, especially in an AI-augmented environment. Their rise signals a growing recognition among large organizations that traditional metrics are insufficient for understanding the true return on investment (ROI) from AI coding agents.

Waydev, for instance, founded in 2017, proactively reworked its entire platform in response to the rapid proliferation of AI coding tools. Its new offerings aim to track metadata generated by AI agents, providing analytics on the quality, cost, and eventual churn of AI-assisted code. This allows engineering managers to gain deeper insights into both the adoption rates and the actual efficacy of these tools. The market is clearly responding to this need; Atlassian, a major player in collaboration and development tools, acquired DX, another engineering intelligence startup, for a reported $1 billion, specifically to enhance its customers’ ability to measure and understand the ROI of their coding agents. This strategic acquisition underscores the significant value placed on granular, data-driven insights into engineering performance in the AI era.

Navigating the New Era: Adaptation and Strategic Imperatives

Despite the emerging data highlighting the complexities and potential pitfalls of unbridled AI adoption in coding, there is a consensus that these tools are not a passing fad. As Alex Circei puts it, "This is a new era of software development, and you have to adapt, and you are forced to adapt as a company. It’s not like it will be a cycle that will pass." The transformational power of AI is undeniable, offering unprecedented speed and assistance, but its effective integration demands a strategic, rather than merely reactive, approach.

Organizations must move beyond simplistic metrics like token consumption or initial code acceptance rates. The imperative now is to develop a more sophisticated understanding of "developer productivity" that accounts for code quality, long-term maintainability, the reduction of technical debt, and the ultimate delivery of business value. This requires investing in robust analytics platforms, fostering a culture of critical code review, and training engineers to effectively prompt, evaluate, and refine AI-generated content. The future of software development will undoubtedly be AI-augmented, but true productivity will stem not from maximizing inputs, but from intelligently integrating these powerful tools to produce high-quality, sustainable outputs that drive genuine innovation and growth. The journey ahead involves continuous adaptation, learning, and a recalibration of what success truly means in an increasingly intelligent coding landscape.