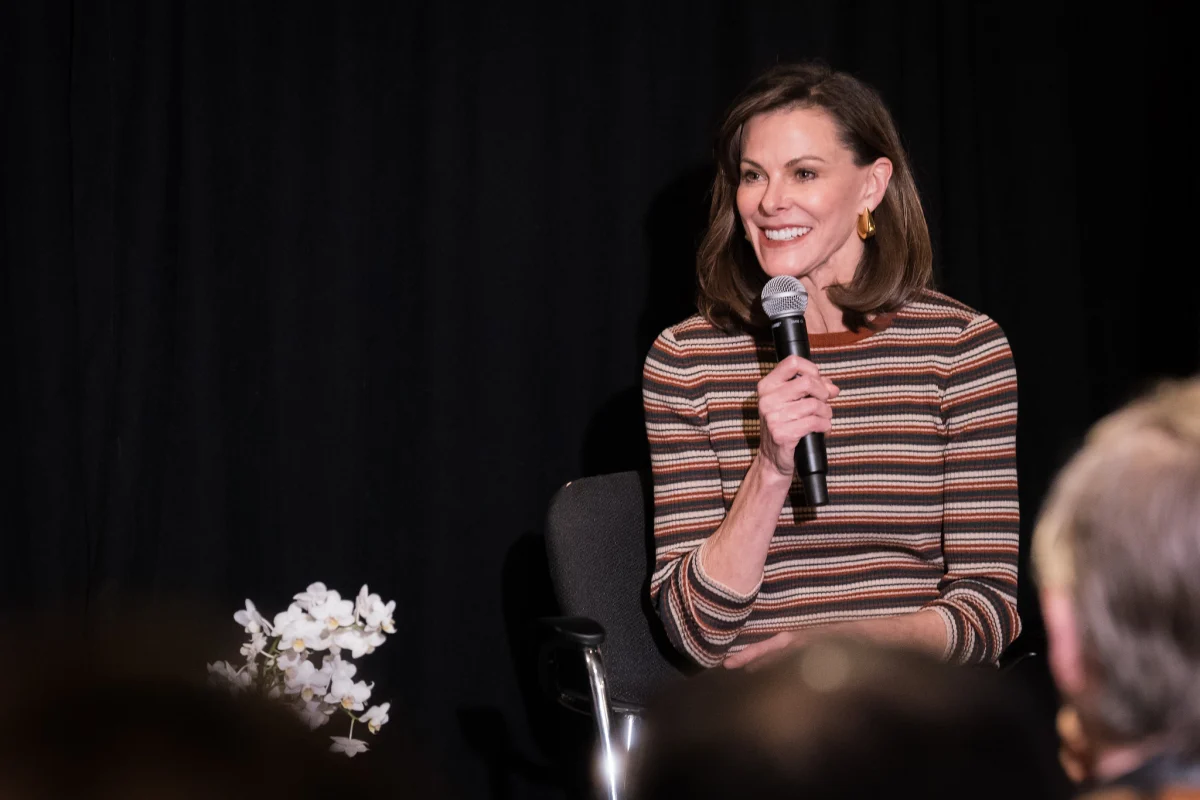

Campbell Brown has spent her career on the problem of accurate information, first as a broadcast journalist and later as Meta’s first head of news partnerships. Having seen firsthand how digital platforms can amplify misinformation, she now sees artificial intelligence bringing society to a similar turning point in how it consumes information, and she wants to keep the earlier mistakes from being repeated. Her latest venture, Forum AI, aims to make AI systems handle truth and nuance reliably, particularly on complex topics.

The Genesis of Forum AI

Forum AI, a New York-based company founded 17 months ago, grew out of a specific moment for Brown: the public release of ChatGPT while she was still at Meta. "I remember really shortly after realizing this is going to be the funnel through which all information flows. And it’s not very good," she said. The concern was personal as well as professional, since she worried about what it would mean for her own children’s intellectual development. Brown saw a gap between how quickly AI capabilities were advancing and how little attention was paid to factual integrity. Foundation model companies, she observed, were focused on technical areas such as coding and math, while news and nuanced information were treated as secondary, if they were addressed at all. She regarded that omission as a serious flaw rather than a minor oversight, and it led her to start Forum AI.

Navigating High-Stakes Topics

Forum AI evaluates how advanced AI models perform on what Brown calls "high-stakes topics": geopolitics, mental health, finance, and hiring. These are subjects without simple yes-or-no answers, where good responses depend on nuance and context. The company’s method has three steps. It recruits leading experts to design benchmarks, uses those benchmarks to train specialized AI judges, and then has the judges evaluate foundation models at scale. For geopolitics, for instance, its advisors include the academic Niall Ferguson, the journalist Fareed Zakaria, former Secretary of State Tony Blinken, former House Speaker Kevin McCarthy, and Anne Neuberger, who previously directed cybersecurity efforts in the Obama administration. The goal is roughly 90% agreement between the AI judges and the human experts, a threshold Forum AI says it has consistently met. The approach rests on the view that human expertise remains indispensable to building reliable AI, because experts catch subtleties that models often miss.
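To make the 90% figure concrete, here is a minimal sketch of how agreement between an AI judge and human experts could be measured. It is an illustration only, not Forum AI’s actual pipeline: the `Verdict` record, the label names, and the sample data are all hypothetical, and the article does not say how the company computes its agreement rate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    """One benchmark item scored by both a human expert and an AI judge (hypothetical schema)."""
    item_id: str
    expert_label: str  # e.g. "acceptable" or "flawed" (assumed label set)
    judge_label: str


def agreement_rate(verdicts: list[Verdict]) -> float:
    """Fraction of items where the AI judge's label matches the expert's label."""
    if not verdicts:
        raise ValueError("no verdicts to compare")
    matches = sum(1 for v in verdicts if v.expert_label == v.judge_label)
    return matches / len(verdicts)


def meets_target(verdicts: list[Verdict], target: float = 0.90) -> bool:
    """True when judge-expert agreement reaches the target (0.90 mirrors the article's figure)."""
    return agreement_rate(verdicts) >= target


# Invented sample: ten items, with the judge disagreeing with the expert on one.
SAMPLE = [
    Verdict(f"q{i}", "acceptable", "acceptable") for i in range(1, 10)
] + [Verdict("q10", "flawed", "acceptable")]

if __name__ == "__main__":
    print(f"agreement: {agreement_rate(SAMPLE):.0%}")
    print("meets 90% target:", meets_target(SAMPLE))
```

Raw percent agreement is the simplest reading of "consensus." A chance-corrected statistic such as Cohen's kappa would be a stricter alternative when one label dominates the benchmark.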

Lessons from the Social Media Era

Brown’s approach at Forum AI is shaped by her time at Meta, where she saw what happens when a platform optimizes for something other than truth. She acknowledges that the effort to combat misinformation while she oversaw news partnerships at Facebook ran into significant challenges. The fact-checking initiatives she helped establish, for instance, no longer exist in their original form. The lesson she drew is that optimizing for engagement may help platform growth and advertising revenue, but it often leaves users less informed and fragments the information landscape. The erosion of trust in traditional media, made worse by the viral spread of unverified content on social platforms, forms the backdrop to her current mission. The Cambridge Analytica scandal, the spread of "fake news" during elections, and the ongoing debates over content moderation all show what can happen when technology outpaces ethical oversight and a commitment to accuracy. Brown is determined that AI not repeat these mistakes. She sees the stakes as higher still, because AI can generate and disseminate information at unprecedented scale, influencing everything from individual decisions to geopolitical stability.

The Current State of AI Accuracy

Forum AI’s initial evaluations of leading AI models have been sobering. Brown cited models such as Gemini drawing on Chinese Communist Party websites as sources, even for topics unrelated to China, which points to bias or flawed data ingestion. Nearly all the models tested also showed a left-leaning political bias. Beyond overt bias, the assessments found subtler problems: missing context, overlooked perspectives, and "straw-man" arguments presented without acknowledgment or counter-perspectives. Taken together, the findings describe systems that are linguistically impressive but often fall short of the nuanced understanding and balanced presentation expected of journalistic or expert analysis. "There’s a long way to go," Brown said, before adding: "But I also think that there are some very easy fixes that would vastly improve the outcomes." In her view the challenges are significant but surmountable, provided the industry shifts its focus from sheer output to accuracy and context.

The Enterprise Imperative and Market Dynamics

Brown hopes AI can break the cycle of prioritizing engagement over truth that plagued social media. It is not yet clear whether AI will end up catering to user preferences or striving for accuracy, she says, but the enterprise sector may prove an unexpected ally. Businesses increasingly rely on AI for credit decisions, loan approvals, insurance assessments, and hiring, where liability is real and accuracy is paramount. Companies deploying AI in these areas will demand systems that are rigorously tested and demonstrably "right," not merely engaging or plausible. That demand is the basis of Forum AI’s business model. Turning interest in compliance into consistent revenue is still a challenge, however. Brown notes that much of the current market is content with perfunctory "checkbox audits" and standardized benchmarks that she considers inadequate for real evaluation. The existing compliance landscape, in her words, is "a joke." She points to New York City’s pioneering hiring bias law, which mandated AI audits; an investigation by the state comptroller found that more than half of the audited systems contained violations that had gone undetected. The example shows the gap between regulatory intent and practical implementation, and the need for domain-specific evaluation that goes beyond superficial checks.

The Quest for Trust and Rigorous Evaluation

Real evaluation, Brown argues, requires deep domain expertise to handle not only predictable scenarios but also the "edge cases" that lead to unforeseen complications and legal liability. That scrutiny takes time and specialized knowledge: "smart generalists aren’t going to cut it." The investment is substantial, but Brown believes this rigor is what will build genuine trust in AI. Forum AI raised $3 million last autumn in a round led by Lerer Hippeau. It aims to close the gap between the AI industry’s often utopian view of itself and what everyday users actually experience. Big tech leaders talk about AI curing diseases or radically reshaping employment, while the average person using a chatbot often encounters "slop and wrong answers." That contrast feeds consumer skepticism, and trust in AI systems remains low. Brown describes a "totally different conversation" taking place in Silicon Valley than among consumers, and argues that the industry needs to bring its aspirations in line with the practical work of delivering accurate, reliable information. Ultimately, Forum AI’s mission goes beyond technical refinement: it wants to anchor AI in truth and accountability, so that as the technology reshapes the world it earns public confidence rather than eroding it.