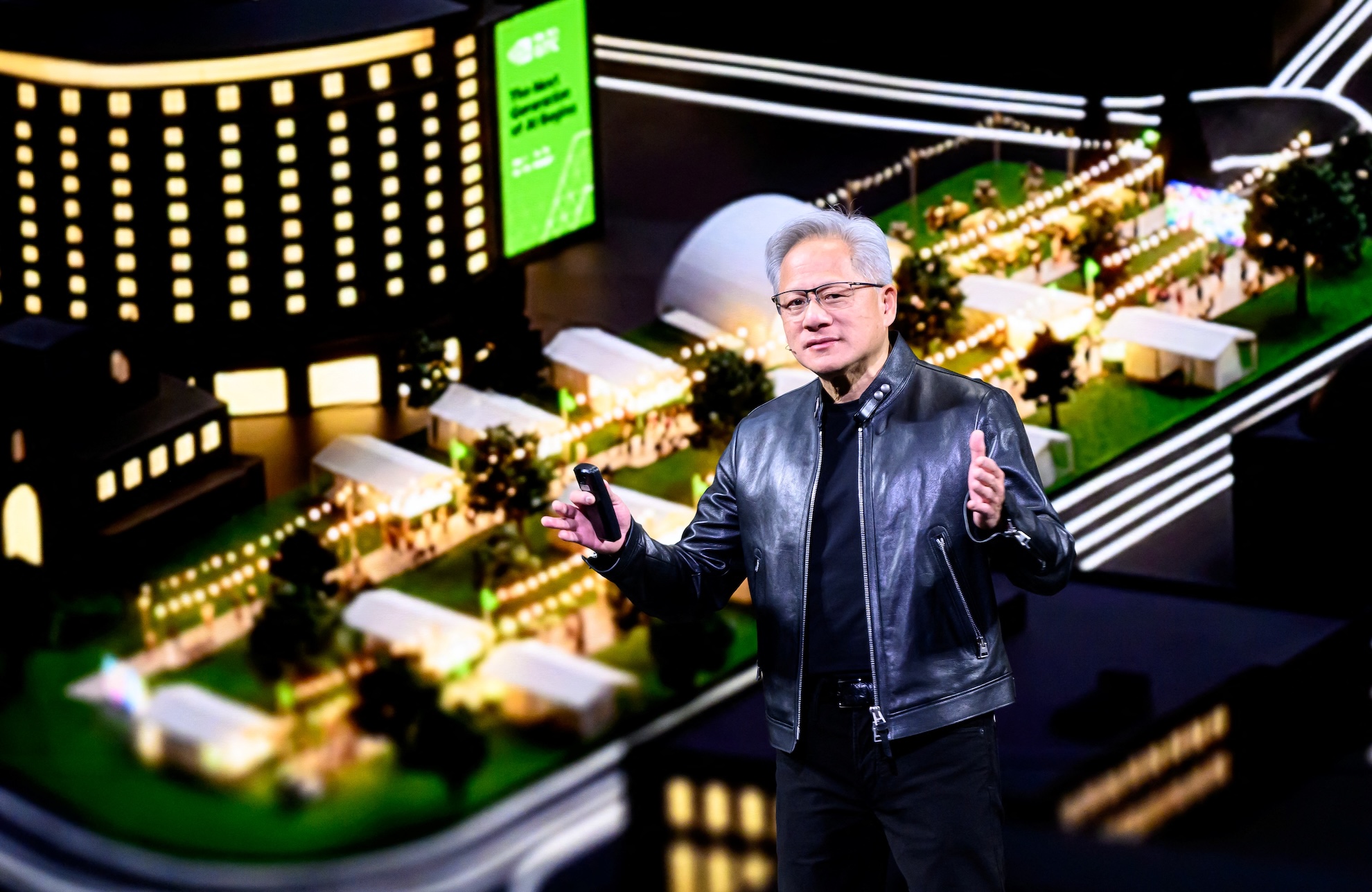

Nvidia CEO Jensen Huang used the company’s annual GTC Conference in San Jose, California, on March 16, 2026, to unveil a financial projection that underscores how quickly the artificial intelligence buildout is accelerating. Amid a run of technical specifications and product announcements, Huang said he expects $1 trillion in orders for Nvidia’s Blackwell and Vera Rubin computing chips through 2027, a forecast with significant implications for the semiconductor industry and the broader digital economy. The revised outlook doubles an earlier projection of $500 billion in demand for the same architectures through 2026, pointing to a sharp rise in global appetite for advanced AI infrastructure.

The Epicenter of AI Innovation: GTC and Nvidia’s Vision

The GPU Technology Conference (GTC) is Nvidia’s premier platform for showcasing its latest products and setting the agenda for accelerated computing and artificial intelligence. Over the years, the event has evolved from a niche gathering for graphics developers into a pivotal summit for AI researchers, industry leaders, and tech enthusiasts worldwide. Jensen Huang, Nvidia’s co-founder and CEO, has consistently used the GTC stage to argue that graphics processing units (GPUs) are fundamentally reshaping computing, having grown from a specialized gaming component into the foundational engine of modern AI. His pronouncements carry significant weight, often influencing market sentiment and strategic direction across the tech sector.

Nvidia’s path to dominance in AI hardware began decades ago with high-performance graphics cards for gaming. The company recognized, however, that the parallel processing capabilities inherent in its GPU architecture were well suited to the computationally intensive demands of deep learning and other machine learning algorithms. This strategic pivot, championed by Huang, transformed Nvidia from a graphics chip manufacturer into the leading provider of "picks and shovels" for the AI gold rush. The company’s CUDA platform, a parallel computing platform and programming model, further solidified its ecosystem by enabling developers to harness GPUs for a wide range of scientific and commercial applications, creating a formidable moat against competitors.

Unpacking the Power of Blackwell and Vera Rubin

Huang’s latest projection rests on Nvidia’s Blackwell architecture and its successor, Vera Rubin. The Blackwell platform, designed to succeed the highly successful Hopper architecture, represents a significant leap in GPU technology, tailored to the escalating demands of training and deploying increasingly complex AI models. These chips are engineered for performance, energy efficiency, and scalability, all crucial as AI models grow to billions or even trillions of parameters.

The Vera Rubin architecture, first announced in 2024 and officially entering production in January 2026, takes this further. Huang has consistently described Rubin as the cutting edge in AI hardware, designed to outperform its Blackwell predecessor by substantial margins. According to Nvidia’s specifications, Rubin is 3.5 times faster than Blackwell on model-training tasks and 5 times faster on inference tasks, with peak performance reaching 50 petaflops. For AI developers and enterprises, those figures mean shorter training times for sophisticated neural networks, quicker deployment of models into real-world applications, and the ability to tackle computational problems that were previously out of reach. The company plans to ramp up Rubin production significantly in the second half of the year to meet growing demand.

A Historical Trajectory to AI Supremacy

Nvidia’s current market position is the culmination of a deliberate, long-term strategy that began shifting the company’s focus beyond consumer graphics in the early 2000s. The introduction of CUDA in 2006 was a watershed moment, opening GPUs to general-purpose computing and laying the groundwork for their eventual dominance in scientific computing and, critically, artificial intelligence. Researchers soon found that GPUs, with their large numbers of parallel processing cores, were far more efficient than traditional CPUs at the parallel computations required by early machine learning algorithms.

The mid-2010s marked the true inflection point. As deep learning gained prominence, fueled by breakthroughs in neural network architectures and the availability of large datasets, demand for GPU acceleration exploded. Nvidia’s Pascal, Volta, and Ampere architectures followed one another in quick succession, each offering performance improvements that became essential for training state-of-the-art models, including the large language models (LLMs) behind generative AI. The Hopper architecture, which preceded Blackwell, further cemented Nvidia’s leadership and became the backbone for many of the foundational AI models being developed today. This rapid succession of increasingly powerful architectures, coupled with a robust software ecosystem, has created a formidable barrier to entry for potential competitors and positioned Nvidia as a near-indispensable partner for anyone building advanced AI.

Market Implications and Economic Ripple Effects

Jensen Huang’s projection of $1 trillion in orders for Blackwell and Rubin chips through 2027 is more than a financial forecast; it is a barometer of the global economic shift toward AI-centric infrastructure. For Nvidia, the figure signals sustained, aggressive growth that could cement its position as one of the world’s most valuable technology companies. Investors watch these signals closely, as Nvidia’s stock performance has often mirrored the broader excitement about, and investment in, the AI sector.

The ripple effects extend far beyond Nvidia itself. Demand on this scale creates both immense pressure and significant opportunity across the semiconductor supply chain, from raw material suppliers to advanced packaging facilities. Foundries like TSMC, a critical partner in manufacturing Nvidia’s advanced chips, stand to benefit significantly. Increased production of these components also influences global trade dynamics, technology policy, and geopolitical considerations, given the strategic importance of advanced semiconductors.

Furthermore, this projection highlights the massive capital expenditure being undertaken by cloud service providers (CSPs), enterprise customers, and AI startups worldwide. Companies like Microsoft, Amazon, Google, and Meta are investing billions in building out their AI infrastructure, with Nvidia’s GPUs forming the core of these data centers. This investment is driven by a competitive imperative to develop and deploy cutting-edge AI applications, from advanced conversational agents and intelligent automation to scientific discovery and autonomous systems. The sheer scale of this investment indicates a collective belief across industries that AI is not a fleeting trend but a fundamental paradigm shift that will redefine products, services, and business models for decades to come.

The Broader Societal and Technological Shift

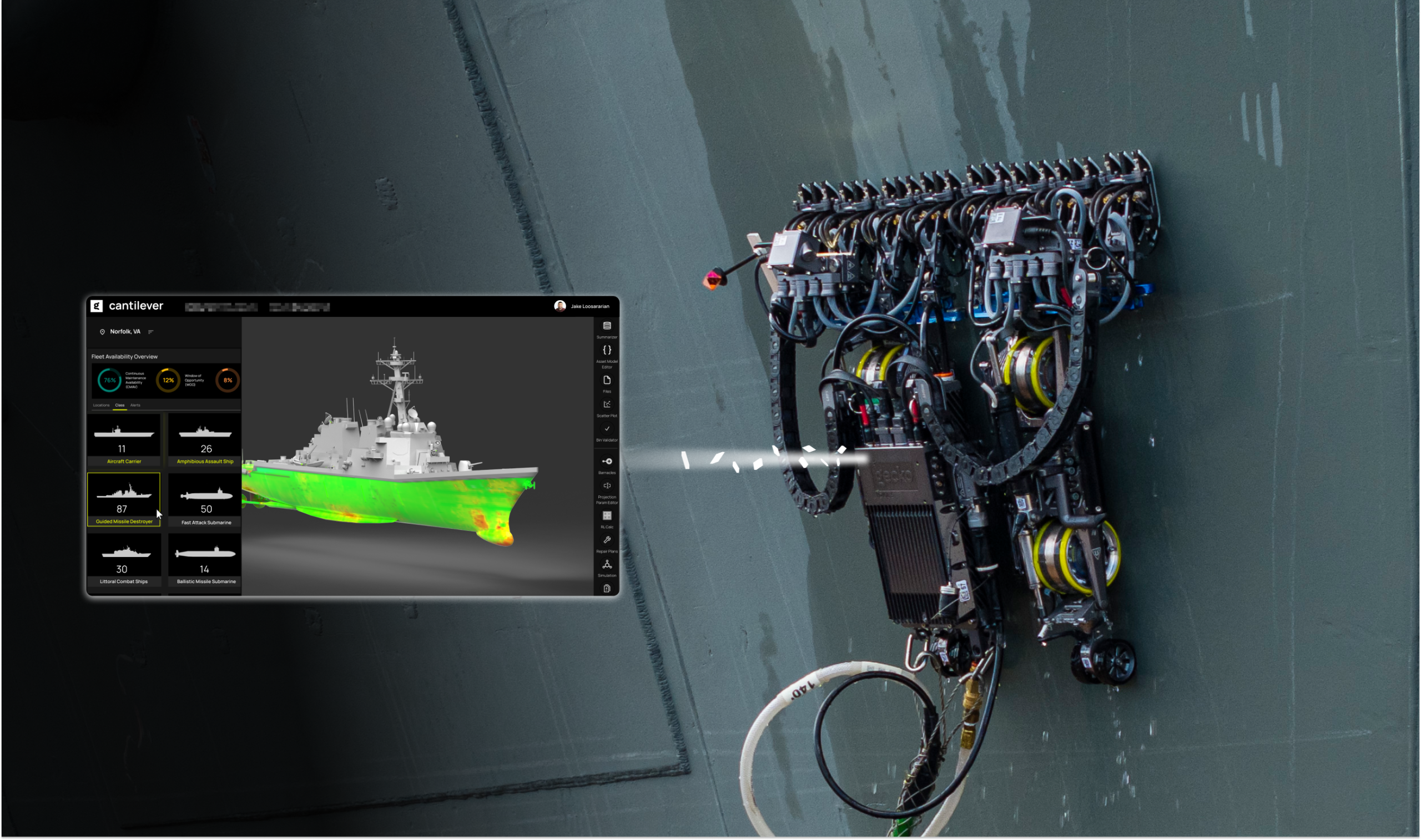

Deploying Blackwell and Rubin architectures on this scale is likely to accelerate the pace of AI development and its integration into daily life. With more powerful and efficient hardware, researchers can train larger, more complex AI models, which could lead to breakthroughs in areas such as drug discovery, climate modeling, materials science, and personalized medicine. For instance, the ability to process vast amounts of genomic data or simulate complex molecular interactions far more quickly could dramatically shorten the time it takes to develop new therapies or understand diseases.

In the enterprise sector, these chips will power a new generation of AI-driven solutions, enhancing productivity, automating routine tasks, and providing deeper insights from data. From sophisticated fraud detection systems in finance to predictive maintenance in manufacturing and hyper-personalized customer experiences in retail, the applications are boundless. The entertainment industry will see further advancements in generative content creation, making everything from special effects to interactive narratives more immersive and realistic. Even autonomous driving, a field heavily reliant on real-time AI processing, stands to benefit from the enhanced inference capabilities of Rubin, leading to safer and more reliable self-driving vehicles.

Culturally, the widespread adoption of AI powered by such advanced hardware will continue to reshape human-computer interaction, alter job markets, and raise important ethical considerations. As AI becomes more ubiquitous and capable, discussions around data privacy, algorithmic bias, and the future of work will intensify, requiring thoughtful societal responses to manage this transformative technology responsibly.

Challenges and Future Outlook

While Jensen Huang’s optimistic forecast paints a picture of boundless growth, the path forward is not without potential challenges. Maintaining such aggressive production ramp-ups requires robust supply chain management and resilience against geopolitical uncertainties that could disrupt semiconductor manufacturing. Competition, though currently lagging, is also a factor. Companies like AMD and Intel are investing heavily in their own AI accelerator roadmaps, aiming to capture a share of this lucrative market. Moreover, the long-term sustainability of exponential growth in AI hardware demand depends on the continued development of innovative AI applications and the willingness of industries to integrate these technologies at scale.

Nvidia also faces the strategic dilemma of balancing its proprietary ecosystem, built around CUDA, against the industry’s increasing push for open standards. While CUDA has been a key competitive advantage, a more open AI hardware landscape could emerge and alter market dynamics. Despite these considerations, Nvidia’s aggressive innovation cycle, coupled with its deep engagement with the developer community, positions it strongly for the foreseeable future. The company’s commitment to releasing new architectures at a rapid pace, roughly every two years, helps keep it at the forefront of AI hardware evolution.

Jensen Huang’s declaration of $1 trillion in expected orders for Blackwell and Rubin chips is a testament to the transformative power of AI and to Nvidia’s pivotal role in enabling it. It marks not just a financial milestone for a single company but an indicator of the technological trajectory of the global economy as it moves further into an AI-driven era.