In a significant development for the artificial intelligence landscape, Multiverse Computing, a rising technology firm based in Spain, has unveiled a freely accessible, highly compressed large language model (LLM). This strategic move, which sees the release of an enhanced version of its HyperNova 60B model on the popular Hugging Face platform, directly addresses a core challenge facing widespread AI adoption: the prohibitive scale and computational demands of cutting-edge AI systems. By offering a significantly smaller yet powerful alternative, Multiverse aims to bridge the chasm between the advanced capabilities of frontier models and the practical, cost-effective deployment needs of businesses and developers globally.

The Quest for Leaner AI: Addressing the Scale Challenge

The rapid evolution of large language models has been nothing short of transformative, enabling breakthroughs in natural language understanding, generation, and complex problem-solving. However, this progress has come with a substantial trade-off: immense size. Modern LLMs, often comprising hundreds of billions or even trillions of parameters, demand colossal computational resources for both training and inference. This translates into staggering energy consumption, extended processing times, and significant financial outlays for specialized hardware and cloud infrastructure. For many enterprises, particularly small and medium-sized businesses, the cost and technical complexity associated with deploying and maintaining these behemoths have acted as formidable barriers to entry, limiting their ability to leverage advanced AI solutions.

The sheer volume of data and the intricate neural network architectures required for these models contribute to their substantial footprint. Training a single state-of-the-art LLM can consume as much energy as hundreds of homes in a year, raising concerns about environmental sustainability and the democratization of AI. Consequently, the pursuit of more efficient, compact AI models has become a critical area of research and development, with innovators striving to maintain high performance while drastically reducing resource requirements. This challenge forms the backdrop against which Multiverse Computing’s latest offering emerges, promising a viable pathway to more accessible and sustainable AI.

Quantum-Inspired Compression: Multiverse’s CompactifAI Breakthrough

At the heart of Multiverse Computing’s solution is its proprietary compression technology, CompactifAI. The method draws on principles from quantum computing to condense the parameters of large language models without significantly compromising their accuracy or output quality. The details are proprietary, but the "quantum-inspired" label suggests an approach built on more efficient representations of a model's weights, echoing how quantum algorithms can process information in fundamentally different, and often more efficient, ways than classical computing.
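
The article does not disclose how CompactifAI works, so the following is only a generic illustration of why a more compact weight representation shrinks a model. It uses plain low-rank factorization as a stand-in technique, not Multiverse's method, and the matrix shape and rank are hypothetical values chosen for the arithmetic.

```python
# Illustrative stand-in: low-rank factorization, NOT CompactifAI itself.
# A dense weight matrix W (rows x cols) is replaced by two thinner
# factors A (rows x rank) and B (rank x cols), so W is approximated by A @ B.

def dense_params(rows: int, cols: int) -> int:
    """Number of stored values in the original dense matrix."""
    return rows * cols


def low_rank_params(rows: int, cols: int, rank: int) -> int:
    """Number of stored values in the two factors A and B."""
    return rank * (rows + cols)


def compression_ratio(rows: int, cols: int, rank: int) -> float:
    """How many times smaller the factored form is than the dense form."""
    return dense_params(rows, cols) / low_rank_params(rows, cols, rank)


# Hypothetical layer shape and rank, chosen only for illustration.
rows, cols, rank = 4096, 4096, 512
print(dense_params(rows, cols))             # 16777216
print(low_rank_params(rows, cols, rank))    # 4194304
print(compression_ratio(rows, cols, rank))  # 4.0
```

The smaller the rank, the larger the saving, but also the coarser the approximation of the original weights. That trade-off between size and accuracy is the one any compression scheme has to manage.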

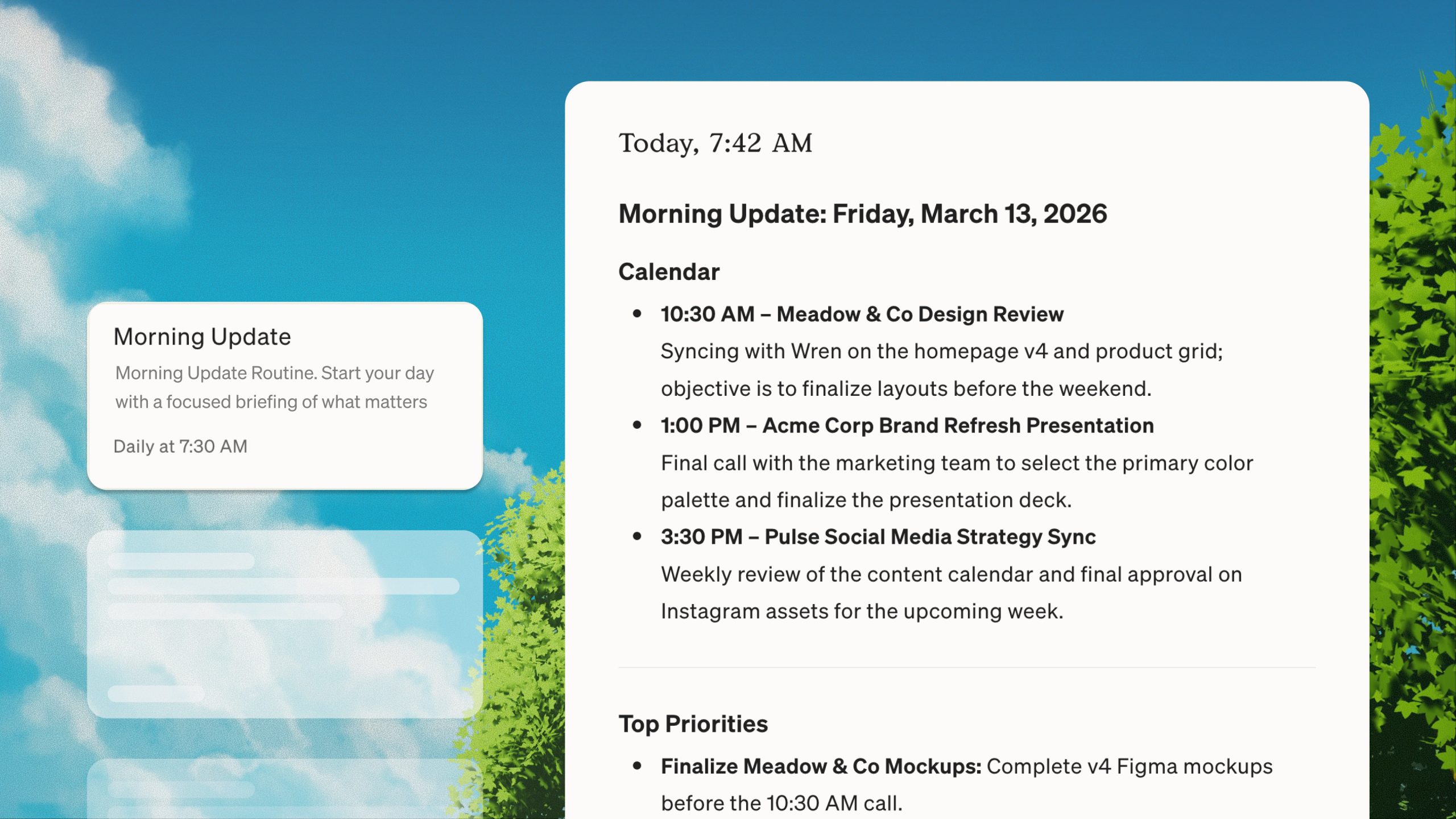

Multiverse has applied CompactifAI to existing models, including those originally released by industry leaders like OpenAI. The resulting models retain much of the predictive power and linguistic nuance of their larger counterparts with a dramatically reduced footprint. The updated HyperNova 60B 2602 model is one example. At just 32GB, it is approximately half the deployment size of the OpenAI model it is derived from, the 120-billion-parameter gpt-oss-120b. The smaller file size translates directly to lower memory usage during operation and, critically, to lower latency during inference. For real-world applications, this means faster responses, more efficient processing on less powerful hardware, and a substantial decrease in operational costs.
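
The size figures above can be checked with some back-of-the-envelope arithmetic. The sketch below makes several assumptions: the "60B" in the model name is taken as exactly 60 billion parameters, a gigabyte is counted as 10^9 bytes, and only the weights are considered (runtime memory for activations and caches is ignored). The function names are illustrative.

```python
def weight_footprint_gb(num_params: float, bits_per_param: float) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    return num_params * bits_per_param / 8 / 1e9


def implied_bits_per_param(size_gb: float, num_params: float) -> float:
    """Average number of bits stored per parameter for a given file size."""
    return size_gb * 1e9 * 8 / num_params


# Figures from the article: a 32GB model, assumed to hold 60 billion parameters.
hypernova_size_gb = 32
hypernova_params = 60e9

print(round(implied_bits_per_param(hypernova_size_gb, hypernova_params), 2))  # 4.27

# For comparison, the same parameter count stored at 16 bits per weight.
print(weight_footprint_gb(hypernova_params, 16))  # 120.0
```

Under these assumptions, 32GB works out to a little over four bits per parameter. The same number of parameters stored at 16 bits each would need about 120GB for the weights alone.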

Beyond its core efficiency, the HyperNova 60B 2602 version introduces enhanced support for "tool calling" and "agentic coding." These advanced capabilities are crucial for enterprise adoption, allowing the AI model to interact with external tools, APIs, and databases, and to plan and execute multi-step tasks autonomously. In scenarios where inference costs can quickly escalate due to complex workflows or iterative decision-making, the optimized performance of HyperNova 60B 2602 offers a compelling economic advantage. This move positions Multiverse Computing not just as a provider of smaller models, but as an enabler of more sophisticated and cost-effective AI applications in demanding business environments.
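
To make "tool calling" concrete, here is a minimal sketch of the application side of the pattern: the model emits a structured request, and the surrounding program runs the matching function and returns the result. The JSON shape, the `get_weather` tool, and the `dispatch_tool_call` helper are all hypothetical and are not taken from HyperNova's documentation. The model's output is hard-coded rather than generated.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical tool; a real one would query an external API."""
    return f"Sunny in {city}"


# Registry mapping tool names the model may request to Python callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(raw_call: str) -> str:
    """Parse a JSON tool request, run the tool, and return a JSON reply."""
    call = json.loads(raw_call)
    name = call["name"]
    if name not in TOOLS:
        return json.dumps({"error": f"unknown tool: {name}"})
    result = TOOLS[name](**call.get("arguments", {}))
    return json.dumps({"tool": name, "result": result})


# Stand-in for text a model might emit when it decides to call a tool.
model_output = '{"name": "get_weather", "arguments": {"city": "Bilbao"}}'
print(dispatch_tool_call(model_output))
# {"tool": "get_weather", "result": "Sunny in Bilbao"}
```

In an agentic workflow this exchange repeats many times per task, which is why the cost of each inference step matters.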

Democratizing Advanced AI: Open Access and Developer Empowerment

The decision by Multiverse Computing to make its HyperNova 60B 2602 model freely available on Hugging Face underscores a broader trend towards democratizing access to advanced AI technologies. Hugging Face has become a de facto central repository for machine learning models, datasets, and tools, fostering a vibrant global community of developers and researchers. By contributing its compressed model to this ecosystem, Multiverse Computing is not only increasing the visibility and adoption of its technology but also empowering a wider array of innovators who might otherwise be constrained by the resource demands of larger models.

This open-access strategy holds significant implications for the future of AI development. It enables startups, academic institutions, and individual developers to experiment with and integrate high-performance LLMs into their projects without incurring prohibitive licensing fees or infrastructure costs. Furthermore, Multiverse’s stated intention to open-source additional compressed models in 2026 suggests a long-term commitment to fostering an open and collaborative AI landscape. Such initiatives can accelerate innovation, drive diverse use cases, and reduce the technological gap between well-funded industry giants and emerging players. The availability of efficient, open-source models can also spur competition, pushing the entire industry towards more optimized and accessible solutions.

A European Powerhouse Emerges: Competing on the Global Stage

Multiverse Computing’s ascent marks a significant moment for the European AI ecosystem, positioning the company as a competitor to established tech giants predominantly based in the United States and, increasingly, Asia. The company openly positions its HyperNova model as a rival to offerings from other prominent AI firms, including the French decacorn Mistral AI and its Mistral Large 3 model. This technological rivalry highlights a broader ambition within Europe to cultivate its own champions in the critical field of artificial intelligence.

Beyond direct competition, Multiverse Computing shares notable similarities with Mistral AI, underscoring a common strategic playbook for European AI success. Both companies have rapidly expanded their global footprint, establishing offices across the United States, Canada, and various European locations. This international presence is crucial for attracting top talent, securing diverse client bases, and staying abreast of global market trends. Furthermore, both firms have successfully engaged with large enterprise customers, a testament to the robustness and practical applicability of their AI solutions. Multiverse Computing boasts an impressive roster of clients, including the Spanish energy giant Iberdrola, the German engineering and technology company Bosch, and the Bank of Canada, demonstrating its ability to deliver value in highly regulated and demanding sectors.

The emergence of strong European AI players like Multiverse and Mistral is not merely about market share; it carries significant geopolitical and economic implications. It addresses growing concerns about "AI sovereignty," referring to the ability of nations and regions to control and develop their own AI infrastructure, data, and models, rather than relying solely on foreign providers. This is particularly important for sensitive sectors like defense, finance, and critical infrastructure, where data security and ethical governance are paramount. By fostering local AI capabilities, Europe aims to ensure digital autonomy, protect national interests, and stimulate economic growth within the continent.

Strategic Investments and Sovereign Ambitions

Multiverse Computing’s rapid growth has garnered considerable attention from investors. The company is rumored to be in active discussions for a fresh funding round that could see it raise approximately €500 million, potentially valuing the firm at over €1.5 billion. Multiverse has confirmed ongoing discussions without commenting on specific figures. A round on those terms would take the company past "soonicorn" status, a term for a startup rapidly approaching the coveted $1 billion valuation mark, and underscore investor confidence in its technology and market strategy. It would also be a significant indicator of the increasing capital flowing into European deep tech.

To put Multiverse’s trajectory into perspective, its rumored annual recurring revenue (ARR) of €100 million in January, though unconfirmed by the company, pales in comparison to OpenAI’s reported $20 billion ARR. However, it aligns more closely with the impressive growth seen by Mistral AI, whose ARR soared past $400 million, fueled by a surging demand for alternatives to dominant U.S. technologies. Multiverse’s strategic alignment with the concept of "sovereign solutions across the AI stack" resonates deeply with European governments and industries keen on developing independent technological capabilities. This focus on digital sovereignty is not just a marketing slogan but a strategic imperative.

This geopolitical undercurrent has already translated into concrete support for Multiverse Computing. The company recently secured a significant collaboration with the regional government of Aragón in northeastern Spain, further cementing its role in national technological development. Additionally, the Spanish Agency for Technological Transformation (SETT) participated in Multiverse’s substantial $215 million Series B funding round last year. Since its inception, the startup has also benefited from consistent backing from the Basque region, its home base, which has actively fostered an environment conducive to innovation and the growth of high-tech enterprises. This robust government and regional support highlights the strategic importance placed on nurturing domestic AI champions within Spain and the broader European Union.

The Path Forward: Sustaining Innovation and Impact

As Multiverse Computing continues its growth trajectory, it faces the challenges inherent in scaling a cutting-edge AI company. Attracting and retaining top-tier talent in a highly competitive global market, managing expanding infrastructure demands, and continuously innovating to stay ahead of the rapidly evolving AI landscape will be crucial. The optimal balance between model size and efficiency remains an open debate within the AI community, with new architectural breakthroughs and compression techniques constantly emerging. Multiverse’s commitment to quantum-inspired approaches and open-source models positions it well within this evolving discussion.

The broader impact of accessible, efficient AI models like HyperNova 60B 2602 could be substantial. By lowering the barriers to entry, Multiverse is enabling a wider range of industries, from healthcare and finance to manufacturing and logistics, to explore and implement advanced AI solutions. This democratization fosters innovation, drives productivity, and can lead to the development of novel applications previously deemed too costly or complex. The company’s plans to open-source more models in 2026 suggest a long-term vision for contributing to a more inclusive and sustainable AI future. As the world grapples with the power and potential of artificial intelligence, Multiverse Computing’s focus on efficiency and accessibility offers a compelling model for responsible and impactful technological advancement.