OpenAI, a leading force in artificial intelligence research and development, has officially announced its acquisition of Promptfoo, a specialized startup focusing on AI security. The strategic move, finalized on March 9, 2026, underscores a growing industry imperative to fortify advanced AI systems, particularly autonomous agents, against an evolving landscape of digital threats. This integration is poised to significantly enhance the robustness and reliability of OpenAI’s enterprise-grade AI agent platform, OpenAI Frontier, addressing critical concerns surrounding data integrity, operational security, and compliance in the deployment of sophisticated AI solutions.

The Strategic Imperative of AI Security

The rapid proliferation of large language models (LLMs) and the subsequent emergence of AI agents capable of performing complex, multi-step tasks have generated immense excitement across industries. These agents promise unprecedented gains in productivity, automation, and innovation, ranging from automating customer service workflows and financial analysis to streamlining research processes and personal digital assistance. However, this transformative potential comes with inherent risks. As AI systems become more autonomous and integrate deeper into critical business operations, their security vulnerabilities present fresh opportunities for malicious actors. These threats include sophisticated prompt injection attacks designed to manipulate agent behavior, data exfiltration attempts targeting sensitive information processed by LLMs, and the potential for agents to be coerced into executing harmful or unintended actions.

The acquisition of Promptfoo directly addresses these escalating concerns. By embedding specialized security tools and methodologies directly into its core offerings, OpenAI aims to preemptively mitigate risks and establish a higher standard of trust for AI deployment in sensitive environments. This move reflects a broader industry recognition that AI safety is no longer a theoretical research pursuit but an urgent, practical challenge demanding robust engineering solutions.

Understanding Promptfoo’s Expertise

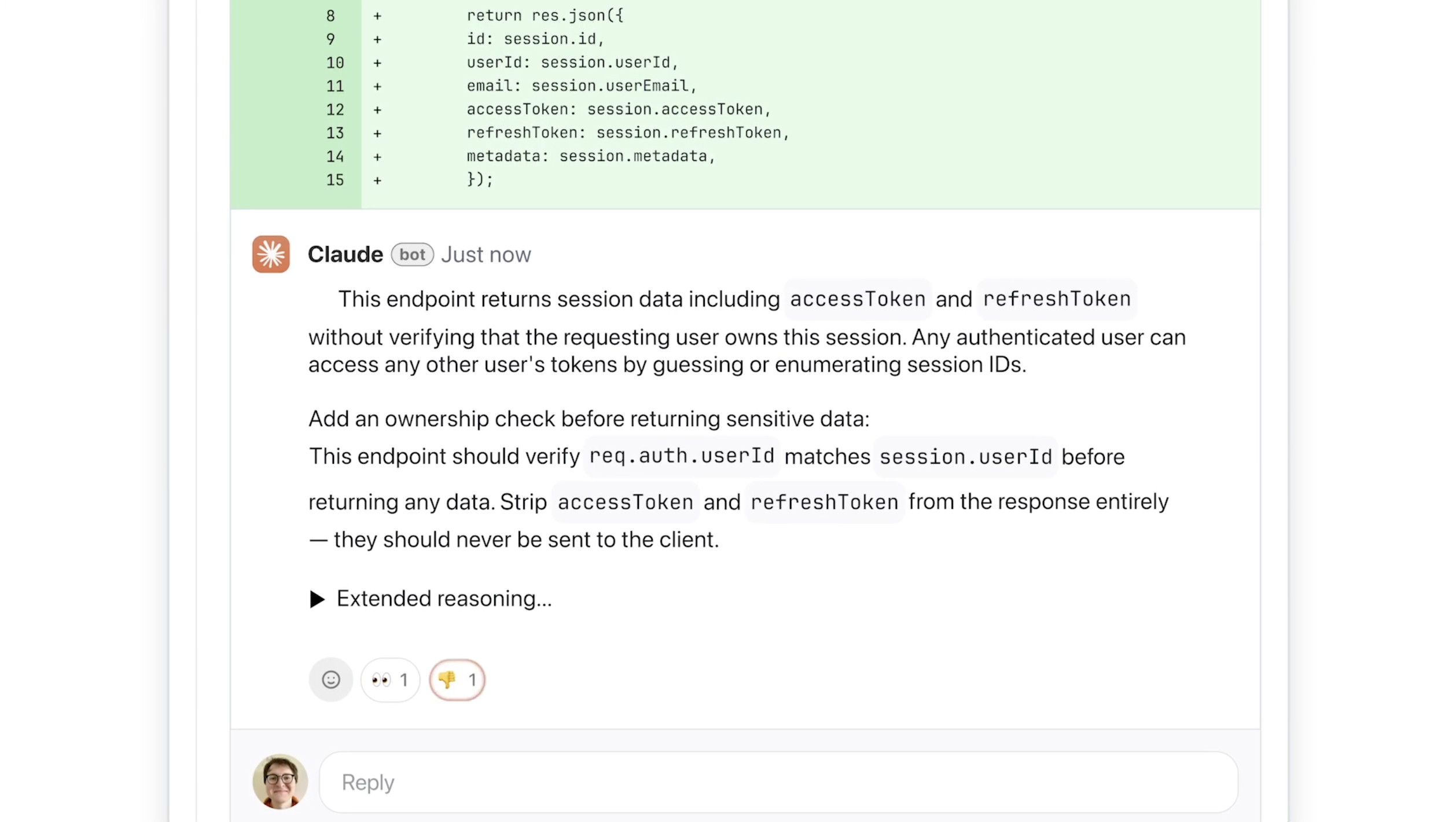

Founded in 2024 by Ian Webster and Michael D’Angelo, Promptfoo quickly established itself as a key innovator in the nascent field of AI security. The company’s core mission revolves around providing developers and enterprises with sophisticated tools to test and validate the security posture of their LLM-powered applications and AI agents. Among its flagship offerings are an intuitive open-source interface and a comprehensive library designed to identify vulnerabilities such as prompt injection, data leakage, and adversarial attacks. These tools allow organizations to systematically evaluate how their AI models respond to various inputs, ensuring they behave as intended and do not inadvertently expose sensitive information or execute unauthorized commands.

Promptfoo’s rapid adoption by a significant portion of the Fortune 500—reportedly over 25%—highlights the acute need for such specialized solutions within the enterprise sector. Despite having raised a modest $23 million in funding, culminating in an $86 million valuation in July 2025, the company’s impact has been substantial. OpenAI’s decision to acquire Promptfoo, with the transaction value undisclosed, signals a clear endorsement of its technology and a recognition of its critical role in securing the next generation of AI applications. The integration will empower OpenAI Frontier to perform automated "red-teaming" exercises, systematically evaluate agentic workflows for potential security gaps, and continuously monitor activities for risks and compliance deviations. Furthermore, OpenAI has committed to continuing the development and support of Promptfoo’s open-source initiatives, ensuring that the broader AI community benefits from these advanced security capabilities.

The Rise of AI Agents and Their Inherent Challenges

The journey of artificial intelligence has seen several paradigm shifts, from expert systems and machine learning algorithms to the recent explosion of generative AI and large language models. The current frontier is increasingly defined by "AI agents"—software entities that can autonomously perceive their environment, reason about their goals, plan a sequence of actions, and execute those actions without direct human intervention for each step. Unlike simple LLMs that generate responses based on a single prompt, agents can break down complex tasks, interact with various tools (databases, APIs, web browsers), learn from feedback, and adapt their strategies over time.

This evolution unlocks immense potential for automation and complex problem-solving. Imagine AI agents handling end-to-end customer support, managing intricate supply chains, or even conducting scientific research autonomously. However, their very autonomy introduces a new class of security and safety challenges. An agent operating with access to sensitive data and external systems presents a larger attack surface than a static model. A compromised agent could lead to unauthorized data access, system manipulation, financial fraud, or even physical damage if integrated with real-world control systems. The ability to "jailbreak" an LLM (tricking it into bypassing safety guidelines) is one concern; the ability to "jailbreak" an agent (coercing it to perform a series of harmful actions across multiple systems) is an entirely different magnitude of risk. Securing these sophisticated, interconnected systems is paramount for their responsible and widespread deployment.

OpenAI’s Broader Vision and AI Safety

OpenAI’s trajectory since its founding in 2015 has been characterized by an ambitious mission: to ensure that artificial general intelligence (AGI) benefits all of humanity. Initially established as a non-profit, it later transitioned to a capped-profit model to attract the necessary capital for developing increasingly powerful AI models. Key milestones like the release of the GPT series (GPT-3, GPT-4), DALL-E, and the widely adopted ChatGPT have dramatically pushed the boundaries of what AI can achieve, bringing sophisticated generative capabilities into the mainstream.

Throughout its history, OpenAI has consistently emphasized the importance of AI safety and alignment. Early research focused on theoretical aspects of preventing AI systems from developing unintended or harmful goals. However, as AI models became more capable and real-world deployment became imminent, the focus shifted towards practical security measures, ethical guidelines, and robust testing protocols. The acquisition of Promptfoo represents a significant tactical step in operationalizing this commitment to safety. By integrating advanced red-teaming and monitoring capabilities, OpenAI is not merely reacting to existing threats but proactively building resilience into its foundational agent platforms. This aligns with its broader strategy of fostering responsible AI development, which includes ongoing research into interpretability, adversarial robustness, and fair AI practices. It also serves to reassure enterprise clients, for whom security and compliance are non-negotiable prerequisites for adopting cutting-edge AI technologies.

Market Dynamics and the Competitive Landscape

The acquisition signals a maturing AI security market, where specialized startups are increasingly becoming attractive targets for larger AI developers. As the AI industry continues its rapid growth, fueled by substantial investments and intense competition, the demand for robust security solutions is skyrocketing. Every major player, from Google and Microsoft to Anthropic and Meta, is grappling with the complexities of securing their own AI models and the applications built upon them. The ability to demonstrate superior security and reliability could become a significant differentiator in a crowded market.

This trend of strategic acquisitions is likely to continue as AI companies seek to integrate best-in-class solutions rather than building everything in-house. It reflects a race not just for computational power and model scale, but also for foundational trust and safety infrastructure. Regulatory bodies worldwide are also taking a keen interest in AI safety, with legislation like the EU’s AI Act and various proposed frameworks in the U.S. aiming to establish standards for transparency, risk assessment, and accountability. Companies that can demonstrate a proactive approach to security, such as through comprehensive testing and monitoring facilitated by tools like Promptfoo’s, will be better positioned to navigate this evolving regulatory landscape and gain a competitive edge. The market impact extends beyond direct security solutions, influencing the insurance industry, cybersecurity services, and even corporate governance as organizations grapple with the implications of AI-driven decision-making and automation.

The Future of AI Deployment and Building Trust

OpenAI’s acquisition of Promptfoo is more than just a business transaction; it represents a tangible commitment to fostering trust in the next generation of AI systems. The ability of AI agents to operate autonomously, often interacting with sensitive data and critical infrastructure, necessitates an unparalleled level of confidence in their security and ethical alignment. By enhancing its agent platform with Promptfoo’s advanced testing and monitoring capabilities, OpenAI is signaling to developers, enterprises, and the public that the safety and reliability of its AI technologies are paramount.

The commitment to continue building out Promptfoo’s open-source offering is particularly noteworthy. This approach allows the broader developer community to benefit from sophisticated security tools, fostering a more secure AI ecosystem overall. It also enables greater transparency and collaborative problem-solving, which are crucial for addressing complex and rapidly evolving AI security challenges. In an era where AI promises to reshape industries and daily life, the foundation of secure and trustworthy AI systems will be the bedrock upon which widespread adoption and societal benefit are built. This acquisition marks a significant step toward that future, emphasizing that innovation must walk hand-in-hand with robust security measures to truly unlock the transformative potential of artificial intelligence.