A fundamental challenge in artificial intelligence, particularly with advanced deep learning models, is understanding why they produce the outputs they do. Whether the problem is a large language model’s (LLM) unexpected political leanings, its tendency toward excessive flattery, or its propensity for generating factually incorrect information, inspecting the inner workings of a neural network with billions of parameters has remained difficult. Now a San Francisco-based startup, Guide Labs, is presenting a new approach to this persistent problem. The company, co-founded by CEO Julius Adebayo and Chief Science Officer Aya Abdelsalam Ismail, recently introduced Steerling-8B, an open-source, 8-billion-parameter LLM with an architecture designed to make its operations transparent.

The Enigma of AI Decision-Making: A Pervasive Industry Challenge

The term "AI black box" has become synonymous with the opacity of many complex machine learning models. These systems, particularly deep neural networks, process vast amounts of data through layers of interconnected nodes, making decisions based on learned patterns that are often inscrutable to human observers. This lack of transparency poses significant hurdles across various sectors. For developers, debugging and refining models is frustrating, because pinpointing the source of an error or undesirable behavior inside the network is exceedingly difficult. For end-users and organizations deploying AI, the inability to understand why a model reached a particular conclusion erodes trust and complicates accountability.

This opacity has led to serious problems. Some models exhibit biases learned from their training data, which manifest as discriminatory outcomes in areas like hiring or loan applications. Others struggle with "hallucinations," confidently generating plausible-sounding but entirely fabricated information. The fine-tuning process itself can have unexpected consequences, as seen with some cutting-edge LLMs that have displayed peculiar ideological biases or a tendency to agree excessively with user prompts, regardless of accuracy. The increasing sophistication of AI only magnifies these concerns and makes it more urgent to find mechanisms that render model decision-making understandable.

A Historical Quest for Explainable AI

The pursuit of explainable AI (XAI) is not a new concept, but its urgency has intensified with the rise of deep learning. In the early days of artificial intelligence, systems were often rule-based or symbolic, making their logic inherently transparent. If an expert system recommended a certain action, its underlying rules could be easily traced and understood. However, as machine learning evolved, particularly with the advent of powerful statistical models and later deep learning, performance soared, often at the cost of interpretability. These models excelled at identifying complex patterns in data but offered little insight into the how and why of their predictions.

For years, research into XAI predominantly focused on "post-hoc" explanation techniques. These methods attempt to interpret a pre-existing, opaque model after it has been trained, essentially trying to peer into the black box from the outside. Techniques like LIME (Local Interpretable Model-agnostic Explanations) and SHAP (SHapley Additive exPlanations) gained prominence, providing insights into which features were most influential in a model’s prediction for a specific instance. While valuable, these post-hoc approaches often provide approximations rather than a fundamental understanding of the model’s internal reasoning. Julius Adebayo, during his doctoral studies at MIT, contributed to this critical discourse, co-authoring a widely referenced 2020 paper that highlighted the inherent limitations and potential unreliability of many existing methods for understanding deep learning models. This foundational research underscored the need for a paradigm shift, moving beyond attempts to dissect opaque models towards designing interpretability directly into the AI’s architecture from its inception.
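To make the idea of post-hoc attribution concrete, the following is a minimal sketch of exact Shapley-value attribution for a toy scoring function. It does not use the SHAP library's API; the model, the feature names, and the all-zero baseline are hypothetical and chosen only for illustration. Each feature's attribution is its average marginal contribution over every order in which features can be switched from the baseline to the real value.

```python
from itertools import permutations


def toy_model(features):
    # Hypothetical "opaque" scorer: one additive term plus one interaction term.
    return 2.0 * features["income"] + features["debt"] * features["age"]


def shapley_values(model, instance, baseline):
    """Exact Shapley attributions by enumerating every feature ordering."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)      # start with every feature at its baseline
        previous = model(current)
        for name in order:
            current[name] = instance[name]   # reveal one feature at a time
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: totals[name] / len(orderings) for name in names}


instance = {"income": 3.0, "debt": 2.0, "age": 1.0}
baseline = {"income": 0.0, "debt": 0.0, "age": 0.0}
attributions = shapley_values(toy_model, instance, baseline)
print(attributions)
```

The attributions always sum to the difference between the model's output on the instance and on the baseline, but they describe the model only from the outside: the interaction between `debt` and `age` is split evenly between the two features, and nothing about the model's internal computation is revealed. Exact enumeration is also factorial in the number of features, which is why practical tools rely on approximations.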

Guide Labs’ Architectural Innovation: Inherent Interpretability

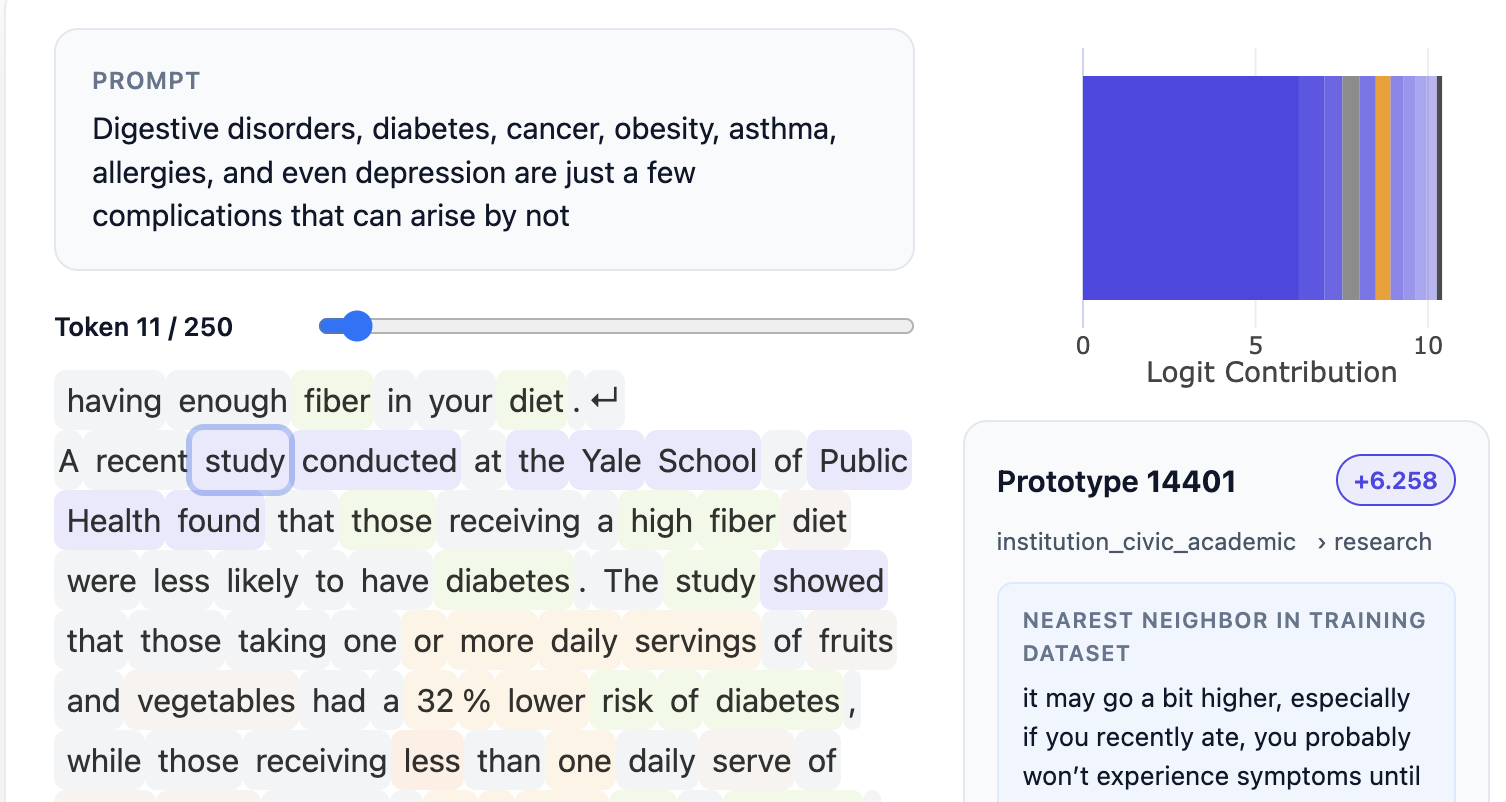

Guide Labs’ Steerling-8B represents this very paradigm shift. Instead of attempting to explain a black box after the fact, the company has engineered interpretability into the very fabric of the model. At the heart of this innovation lies a novel architectural design that integrates a "concept layer" within the LLM. This layer functions by categorizing and associating specific pieces of training data with distinct concepts. The groundbreaking aspect of this approach is that every single token generated by the model can be directly traced back to its originating data points and the concepts it represents within the LLM’s vast training corpus.
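The article does not describe the internals of Steerling-8B, so the following is only a generic sketch of how a concept layer can make token predictions traceable, in the style of a concept bottleneck. The concept names, tokens, probe vectors, and weights are all hypothetical. The key assumption is that token scores are a linear function of concept activations alone, so each score decomposes exactly into per-concept contributions.

```python
import math

# Hypothetical concepts, tokens, and weights; none of these come from Steerling-8B.
CONCEPT_PROBES = {
    "finance": [1.0, 0.0],
    "humor": [0.0, 1.0],
    "medicine": [-1.0, -1.0],
}
TOKEN_WEIGHTS = {
    "loan": {"finance": 2.0, "humor": 0.0, "medicine": 0.0},
    "joke": {"finance": 0.0, "humor": 2.0, "medicine": 0.0},
    "dose": {"finance": 0.0, "humor": 0.0, "medicine": 2.0},
}


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def concept_activations(hidden):
    """Map a hidden state to one activation in (0, 1) per named concept."""
    return {
        concept: sigmoid(sum(w * h for w, h in zip(probe, hidden)))
        for concept, probe in CONCEPT_PROBES.items()
    }


def predict_with_trace(hidden):
    """Pick the top token and report how much each concept contributed to it."""
    activations = concept_activations(hidden)
    contributions = {
        token: {c: weights[c] * activations[c] for c in activations}
        for token, weights in TOKEN_WEIGHTS.items()
    }
    logits = {token: sum(parts.values()) for token, parts in contributions.items()}
    best_token = max(logits, key=logits.get)
    trace = contributions[best_token]
    top_concept = max(trace, key=trace.get)
    return best_token, top_concept, trace


token, concept, trace = predict_with_trace([3.0, -1.0])
print(token, concept, trace)
```

In this toy setup the trace is exact rather than approximate, because the prediction is computed from the concept activations and nothing else. Linking concepts back to specific training documents, as the article describes, would require additional bookkeeping that this sketch does not attempt.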

This traceability offers a spectrum of insights, ranging from straightforward to highly complex. On a basic level, it allows developers and users to pinpoint the specific reference materials or factual sources that informed a model’s statement. This capability is invaluable for verifying information, combating misinformation, and ensuring factual accuracy. On a more sophisticated plane, this architecture can illuminate how the model interprets and encodes nuanced concepts such as humor, gender, or cultural contexts. For instance, understanding how a model processes and represents gender information, or any other sensitive attribute, enables developers to precisely manage and control these conceptual representations. As Adebayo explained, current methods for controlling such intricate concepts within traditional models are often "fragile," making this inherent interpretability a "holy grail" in AI development. While this approach necessitates more upfront data annotation, Guide Labs has mitigated this challenge by leveraging other AI models to assist in the labeling process, enabling them to scale this novel training methodology effectively. This method fundamentally redefines the pursuit of AI understanding, shifting it from an investigative "neuroscience on a model" to a deliberate act of engineering, embedding transparency directly into the system’s design.

Broadening the Horizons: Applications and Societal Impact

The implications of such inherently interpretable LLMs are far-reaching, promising to reshape how AI is developed, deployed, and regulated across various sectors.

- Regulatory Compliance and Ethical AI: For industries subject to stringent regulations, such as finance, healthcare, and legal services, interpretability is not merely a desirable feature but a critical necessity. In finance, for example, an LLM evaluating loan applications must base its decisions solely on relevant financial records, explicitly excluding factors like race or gender. The ability to trace every output to its conceptual origins ensures compliance with anti-discrimination laws and facilitates robust auditing. Similarly, in healthcare, where AI assists in diagnostics or treatment recommendations, understanding the model’s reasoning is paramount for patient safety and medical ethics. This architectural innovation empowers developers to proactively identify and mitigate biases, ensuring fairness and accountability in AI systems.

- Intellectual Property and Content Control: In the realm of content generation, interpretability offers powerful tools for managing intellectual property. Model builders can effectively block the use of copyrighted materials by tracing and deactivating the concepts linked to such data. Furthermore, it provides granular control over outputs related to sensitive subjects like violence, hate speech, or drug abuse, enabling the development of safer and more responsible AI.

- Accelerating Scientific Discovery: Beyond commercial applications, interpretability holds immense promise for scientific research. Deep learning models have achieved remarkable breakthroughs, such as in protein folding, where they can predict complex protein structures with high accuracy. However, scientists often require more than just the correct answer; they need insight into why the software arrived at a particular successful combination. Understanding the model’s reasoning process can unlock new hypotheses, accelerate experimental design, and deepen human comprehension of complex biological or physical phenomena.

- Addressing the "Emergent Behavior" Concern: A common concern surrounding highly structured and interpretable AI architectures is whether they might inadvertently stifle the "emergent behaviors" that make current LLMs so powerful, namely their ability to generalize and discover novel patterns not explicitly programmed. Guide Labs addresses this by tracking what they term "discovered concepts," where the model autonomously identifies and learns new conceptual relationships, such as "quantum computing," without explicit prior tagging. This suggests that the balance between interpretability and emergent intelligence might not be a zero-sum game, but rather a carefully engineered coexistence.
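The auditing and content-control scenarios in the list above can be sketched in a few lines, assuming a model whose output score is a sum of per-concept contributions. This is an illustration under that assumption, not Guide Labs' implementation; the concept names, activations, weights, and the `score` and `audit` helpers are hypothetical.

```python
def score(activations, weights, blocked=frozenset()):
    """Return (score, per-concept contributions), zeroing any blocked concept."""
    contributions = {
        concept: 0.0 if concept in blocked else weights.get(concept, 0.0) * value
        for concept, value in activations.items()
    }
    return sum(contributions.values()), contributions


def audit(contributions, protected, tolerance=1e-9):
    """List the protected concepts that influenced the output."""
    return sorted(
        concept for concept in protected
        if abs(contributions.get(concept, 0.0)) > tolerance
    )


# Hypothetical loan-scoring example.
activations = {"credit_history": 0.8, "income": 0.6, "gender": 0.9}
weights = {"credit_history": 1.5, "income": 1.0, "gender": 0.4}
protected = {"gender", "race"}

raw_score, raw_trace = score(activations, weights)
violations = audit(raw_trace, protected)            # flags "gender"

safe_score, safe_trace = score(activations, weights, blocked=protected)
remaining = audit(safe_trace, protected)            # nothing left to flag
print(violations, raw_score, safe_score, remaining)
```

The same blocking mechanism could in principle be pointed at concepts tied to copyrighted material or to sensitive subjects. Note that zeroing a concept removes only its direct contribution; correlated concepts that act as proxies for a protected attribute would still need separate scrutiny.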

Performance, Scalability, and the Future Landscape

Despite its novel architecture, Steerling-8B demonstrates competitive performance, achieving approximately 90% of the capabilities of existing, less transparent models. Crucially, it accomplishes this with less training data, a testament to the efficiency gained through its designed interpretability. This indicates that the trade-off between performance and transparency, often perceived as an insurmountable barrier, can be significantly narrowed. Adebayo asserts that training interpretable models is no longer a purely scientific endeavor but has matured into an engineering problem, suggesting that these models can scale and potentially match the performance of frontier-level models with many more parameters.

Guide Labs, having emerged from the prestigious Y Combinator accelerator and secured a $9 million seed round led by Initialized Capital in November 2024, is now focused on its next strategic steps. This includes developing larger, more powerful interpretable models and expanding access to users through APIs and agentic interfaces. This move signifies a clear intent to commercialize their innovation and make inherent interpretability widely available to developers and enterprises.

Cultivating Trust in an Increasingly Intelligent World

The long-term vision articulated by Guide Labs extends beyond mere technical prowess; it aims to profoundly impact the relationship between humans and artificial intelligence. As Adebayo emphasized, the current methods for training models are still "primitive" in terms of transparency, and "democratizing inherent interpretability is actually going to be a long-term good thing for our human race." In a future where AI systems are poised to become "super intelligent" and increasingly integral to decision-making processes across all facets of life, the ability to understand their rationale is not just a convenience but a fundamental requirement for trust and control. The open-sourcing of Steerling-8B marks a significant step towards fostering a future where AI’s power is matched by its transparency, ensuring that as these intelligent systems evolve, humanity retains a clear understanding of their inner workings and the foundations of their decisions. This development paves the way for a more accountable, ethical, and ultimately, more trustworthy artificial intelligence ecosystem.