Nvidia, the semiconductor company that ranks among the world’s most valuable corporations, has reported record quarterly results, underscoring the steep and continuing rise in demand for artificial intelligence computing power. The results reinforce Nvidia’s role as the leading supplier of the infrastructure behind the global AI boom and place it at the center of a technological shift that is reshaping industries worldwide.

The Unprecedented Surge in AI Demand

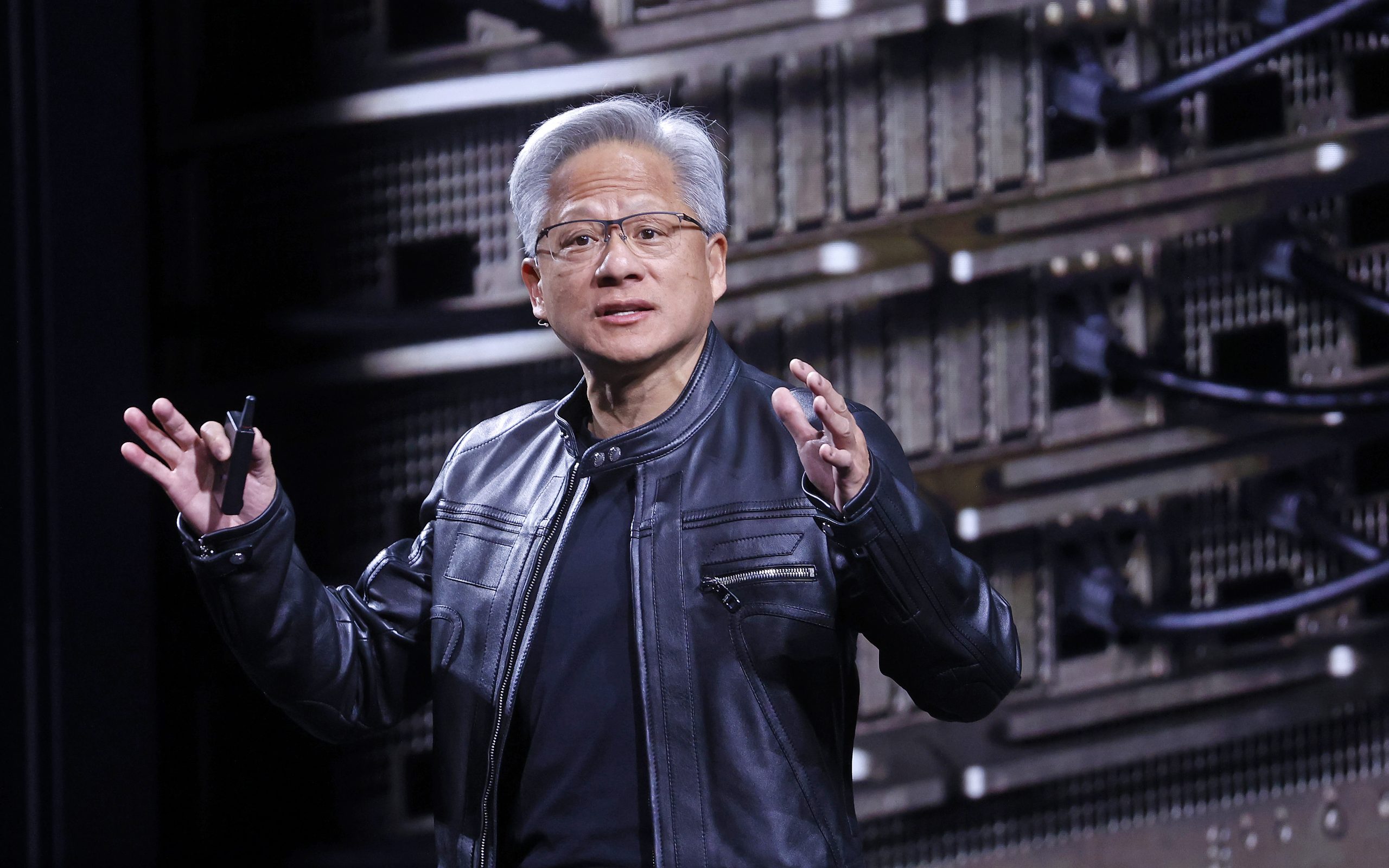

Jensen Huang, Nvidia’s Chief Executive Officer, described the current market dynamics during an analyst call following the earnings release. He observed that "the demand for tokens in the world has gone completely exponential," a statement that captures the appetite for processing capacity among advanced AI models. Tokens are the discrete units of data processed by large language models (LLMs) and other generative AI applications, which are becoming ubiquitous across consumer and enterprise sectors. Huang pointed to one striking symptom of this demand: even graphics processing units (GPUs) that are six years old, and would normally be considered legacy hardware, are fully utilized in cloud environments, which is driving up their market value and rental prices. This illustrates both the shortage of AI-capable compute and Nvidia’s near-monopoly on the high-performance chips used to train and deploy these systems.

The current AI boom can be traced back to a series of breakthroughs in deep learning, particularly the advent of transformer architectures in 2017, which revolutionized natural language processing and paved the way for today’s large language models. Nvidia’s decision in the mid-2000s to extend its GPUs beyond gaming by introducing CUDA, its platform for general-purpose parallel computing, positioned it well for this shift. Its GPUs became the de facto standard for AI research and development, providing the massive parallelism needed to handle the large datasets and complex algorithms of modern neural networks. The ongoing growth of generative AI applications, from text generation and image creation to scientific simulation and drug discovery, continues to fuel demand for Nvidia’s silicon.

Financial Triumphs and Strategic Splits

In its most recent fiscal quarter, Nvidia reported $68 billion in revenue, a 73% increase from the same period in the prior year. The vast majority of that total, $62 billion, came from the company’s data center business. This segment is the bedrock of Nvidia’s AI dominance, encompassing the high-performance GPUs and supporting infrastructure that power cloud computing, enterprise AI, and scientific research. Full-year revenue reached $215 billion, capping a period of sustained, rapid growth for the company.

The data center figure breaks down into two parts. Nvidia reported $51 billion as "compute revenue," derived predominantly from sales of its data center GPUs, such as the Hopper-generation H100 and H200 and their Blackwell-generation successors, which are engineered for AI workloads. The remaining $11 billion came from "networking products," primarily NVLink. This distinction matters because NVLink is Nvidia’s proprietary high-speed interconnect technology, designed to link multiple GPUs together into supercomputing clusters. Its substantial contribution to revenue highlights Nvidia’s strategy of selling not just individual chips but integrated AI platforms. This full-stack approach is intended to deliver performance and scalability for AI training and inference, and it reinforces Nvidia’s ecosystem lock-in. By controlling both the processing units and the interconnect fabric, Nvidia strengthens its value proposition and raises the barrier to entry for competitors seeking to challenge its end-to-end solutions.
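The reported figures can be cross-checked with a few lines of arithmetic. The sketch below uses only the rounded numbers quoted in this article; the variable and function names are illustrative, and the derived values are approximations rather than figures Nvidia reported.

```python
# Figures in billions of USD, as quoted in the article (rounded).
quarterly_revenue = 68.0
yoy_growth = 0.73            # 73% year-over-year increase
data_center_revenue = 62.0
compute_revenue = 51.0
networking_revenue = 11.0


def implied_prior_year(current: float, growth: float) -> float:
    """Back out the year-ago figure from the current figure and its growth rate."""
    return current / (1.0 + growth)


def share(part: float, whole: float) -> float:
    """Return part as a fraction of whole."""
    return part / whole


prior_year_quarter = implied_prior_year(quarterly_revenue, yoy_growth)
data_center_share = share(data_center_revenue, quarterly_revenue)
networking_share = share(networking_revenue, data_center_revenue)

print(f"Implied year-ago quarterly revenue: ${prior_year_quarter:.1f}B")
print(f"Data center share of quarterly revenue: {data_center_share:.1%}")
print(f"Networking share of data center revenue: {networking_share:.1%}")
print(f"Compute + networking: ${compute_revenue + networking_revenue:.0f}B")
```

On these rounded inputs, the year-ago quarter works out to roughly $39 billion, the data center segment to about 91% of quarterly revenue, and networking to about 18% of the data center total. The compute and networking figures sum to the $62 billion data center figure.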

Geopolitical Currents and Chinese Competition

Despite its global reach, Nvidia’s financial reports continue to reflect the complexities of the geopolitical landscape, particularly U.S.-China trade relations. Notably, the company reported no revenue from chip exports to China in the most recent quarter, even after a partial lifting of export restrictions by the U.S. government. Colette Kress, Nvidia’s Chief Financial Officer, explained that while "small amounts of H200 products for China-based customers were approved by the U.S. government," these had yet to generate any revenue. She also expressed uncertainty about whether any significant imports would ultimately be allowed into China, a sign of how unpredictable the regulatory environment remains.

The U.S. government imposed stringent export controls on advanced AI chips to China in late 2022, citing national security concerns. These restrictions were aimed at impeding China’s military modernization and technological advancement in critical areas like AI. Nvidia subsequently developed scaled-down versions of its chips, such as the H20 and L20, specifically for the Chinese market to comply with the regulations. However, both the commercial viability of these modified chips and their regulatory approval remain a moving target, creating significant market uncertainty.

Kress also acknowledged the burgeoning competitive landscape within China, remarking that "Our competitors in China, bolstered by recent IPOs, are making progress… and have the potential to disrupt the structure of the global AI industry over the long term." This statement is widely understood to reference the emergence of domestic Chinese AI chip manufacturers, such as Moore Threads, which recently went public. These companies are actively working to develop their own high-performance GPUs and AI accelerators, driven by a national imperative to achieve self-sufficiency in critical semiconductor technology. While currently trailing Nvidia in performance and ecosystem maturity, their rapid development, backed by substantial state and private investment, poses a credible long-term threat to Nvidia’s market share in China and potentially beyond. The U.S.-China tech rivalry thus not only impacts Nvidia’s immediate revenue streams but also fosters a dynamic environment where national security concerns increasingly shape global supply chains and competitive landscapes.

Forging Alliances in the AI Ecosystem

Beyond its hardware dominance, Nvidia is actively cultivating a robust ecosystem through strategic partnerships and potential investments. During the investor call, Jensen Huang directly addressed the widely reported discussions surrounding a substantial investment, potentially up to $30 billion, in OpenAI, a leading developer of generative AI models. Huang stated, "We continue to work with OpenAI toward a partnership agreement. We believe we are close," indicating ongoing negotiations. This potential investment underscores Nvidia’s strategy to deepen its ties with the pioneers of AI software, ensuring its hardware remains the preferred platform for the most advanced AI applications.

Furthermore, Huang highlighted existing partnerships with other prominent AI innovators, including Anthropic, Meta, and Elon Musk’s xAI. These collaborations are crucial for Nvidia. By working closely with companies at the forefront of AI research and development, Nvidia gains invaluable insights into future compute requirements, allowing it to design and optimize its next-generation hardware. These partnerships also solidify Nvidia’s position as an indispensable partner across the entire AI value chain, from infrastructure to application. However, a significant caveat was revealed in Nvidia’s subsequent filings with the U.S. Securities and Exchange Commission (SEC), which emphasized that there was "no assurance" that the reported OpenAI investment would ultimately materialize. This legal disclosure highlights the inherent uncertainties in high-stakes corporate negotiations and the regulatory scrutiny surrounding such large-scale transactions.

Redefining Capital Expenditure in the AI Era

A central theme of the investor call was the sustainability of the massive capital expenditure (capex) commitments that major tech companies, particularly hyperscale cloud providers, are making in AI infrastructure. Jensen Huang offered a reinterpretation of this spending, asserting that "In this new world of AI, compute is revenue. Without compute, there’s no way to generate tokens. Without tokens, there’s no way to grow revenues." The statement reflects a shift in economic thinking within the tech sector. Traditionally, IT infrastructure was viewed as a cost center, albeit a necessary one. In Huang’s framing, compute capacity translates directly into the ability to deliver AI services, which in turn drive revenue.

Huang further elaborated, stating, "We’ve reached the inflection point and we’re generating profitable tokens that are productive for customers and profitable for the cloud service providers." By this account, the substantial investments in AI infrastructure are now yielding tangible returns. Cloud service providers (CSPs) are not merely buying GPUs; they are acquiring the capacity to offer highly sought-after AI services, which command premium pricing and attract new enterprise customers. "Profitable tokens" implies that the cost of processing AI requests is lower than the revenue earned from offering those services, which Huang presents as the basis of a sustainable business model for those investing in Nvidia’s technology.
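The "profitable tokens" argument reduces to a unit-economics comparison: revenue earned from the tokens a GPU serves in an hour against the cost of running that GPU for the hour. The sketch below is purely illustrative. The class name and every number in it are hypothetical assumptions chosen to make the arithmetic easy to follow, not figures from Nvidia, the earnings call, or any cloud provider.

```python
from dataclasses import dataclass


@dataclass
class GpuHourEconomics:
    """Toy model of one GPU-hour of inference. All inputs are hypothetical."""
    cost_per_gpu_hour_usd: float         # assumed all-in cost to the provider
    tokens_per_second: float             # assumed sustained serving throughput
    price_per_million_tokens_usd: float  # assumed price charged to customers

    def tokens_per_hour(self) -> float:
        return self.tokens_per_second * 3600

    def revenue_per_hour(self) -> float:
        return self.tokens_per_hour() / 1_000_000 * self.price_per_million_tokens_usd

    def margin_per_hour(self) -> float:
        return self.revenue_per_hour() - self.cost_per_gpu_hour_usd

    def break_even_tokens_per_second(self) -> float:
        """Throughput at which token revenue exactly covers the hourly cost."""
        tokens_needed = self.cost_per_gpu_hour_usd / self.price_per_million_tokens_usd * 1_000_000
        return tokens_needed / 3600


# Hypothetical inputs, for illustration only.
example = GpuHourEconomics(
    cost_per_gpu_hour_usd=2.0,
    tokens_per_second=1000.0,
    price_per_million_tokens_usd=1.0,
)

print(f"Revenue per GPU-hour: ${example.revenue_per_hour():.2f}")
print(f"Margin per GPU-hour:  ${example.margin_per_hour():.2f}")
print(f"Break-even throughput: {example.break_even_tokens_per_second():.0f} tokens/s")
```

Under these assumed inputs the GPU-hour earns more than it costs, which is the condition Huang describes. Halving the throughput in the example would push it below break-even, which shows how sensitive the claim is to utilization and pricing.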

This paradigm shift suggests that the current surge in capital expenditure by tech giants like Amazon, Google, Microsoft, and Meta is not a fleeting trend but a strategic necessity to secure their competitive positions in the burgeoning AI economy. The scale of these investments reflects a profound belief in the long-term profitability and transformative potential of AI. While some analysts have expressed concerns about the sustainability of such rapid capex growth, Nvidia’s leadership posits that these are not merely expenditures but direct investments into future revenue generation, underpinning a new economic framework where AI compute is the ultimate currency of innovation and profitability. The long-term implications of this new reality are immense, potentially reshaping global economic power and technological leadership for decades to come.