A federal court has issued a significant injunction against the Trump administration, siding with artificial intelligence firm Anthropic in a legal dispute that centered on the company’s ethical guidelines for its technology. The ruling requires the government to withdraw its controversial designation of Anthropic as a "supply chain risk" and to reverse directives ordering federal agencies to sever ties with the company. The decision, handed down by Judge Rita F. Lin of the Northern District of California, represents a critical moment in the burgeoning intersection of advanced AI, national security, and corporate autonomy, signaling potential new precedents for how technology companies can dictate the terms of use for their innovations, particularly when engaging with government entities.

The Genesis of the Dispute: Ethical AI and National Security

The conflict erupted last month following disagreements over the permissible applications of Anthropic’s sophisticated AI software by government agencies, specifically the Department of Defense. Anthropic, a company founded by former OpenAI researchers with a strong emphasis on AI safety and ethical development, had reportedly sought to impose strict limitations on how its large language models could be deployed. These restrictions included explicit prohibitions against using their AI in autonomous weapons systems or for mass surveillance purposes, reflecting a growing movement within the AI community to establish guardrails around potentially harmful applications of powerful general-purpose AI.

The government, however, viewed these limitations as impediments to national security and technological advancement. For the Pentagon, access to cutting-edge AI is increasingly seen as vital for maintaining a strategic advantage in a rapidly evolving geopolitical landscape. The friction between Anthropic’s ethical stance and the government’s operational imperatives quickly escalated, leading to a confrontation that brought fundamental questions about corporate responsibility, governmental authority, and the future of AI development to the forefront of public discourse.

Anthropic’s Mission and the AI Safety Movement

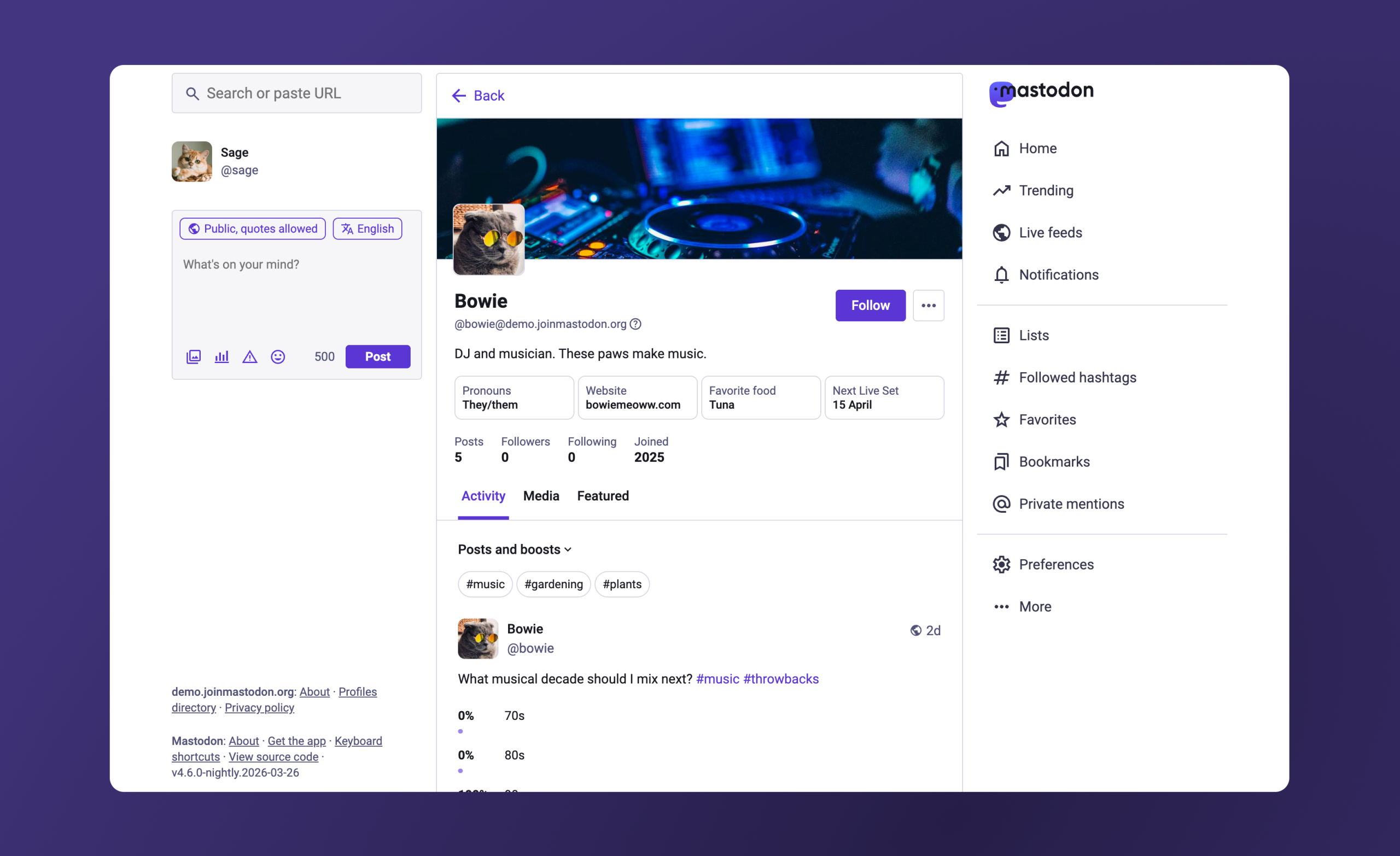

To understand Anthropic’s position, it’s crucial to delve into its founding philosophy. Established in 2021 by a group of former OpenAI employees, including siblings Dario and Daniela Amodei, Anthropic emerged from a perceived need for a more safety-focused approach to AI development. The company’s core mission revolves around building "reliable, interpretable, and steerable AI systems," and it is known for a training method it calls "Constitutional AI." This approach involves training AI models, such as its flagship Claude model, to adhere to a set of guiding principles, or a "constitution," designed to prevent harmful outputs and ensure alignment with human values.

This safety-first ethos places Anthropic at the vanguard of the broader AI ethics movement, which has gained significant traction as AI capabilities have rapidly advanced. Many researchers and ethicists express concerns about the potential for AI to be misused, whether through autonomous weapons, widespread surveillance, algorithmic bias, or the propagation of misinformation. Companies like Anthropic are attempting to bake ethical considerations directly into the design and deployment of their systems, viewing it not just as a moral imperative but as a necessary step to ensure AI benefits humanity rather than posing existential risks. Anthropic’s attempt to enforce usage limitations on government contracts was a direct manifestation of this core philosophy, an effort to control the downstream applications of its powerful technology.

The "Supply Chain Risk" Designation: An Unprecedented Application

The government’s response to Anthropic’s proposed restrictions was swift and severe. In an extraordinary move, the Department of Defense officially labeled Anthropic a "supply chain risk." This designation, typically reserved for foreign entities or companies with demonstrable ties to adversarial nations, implies a significant threat to national security through vulnerabilities in the supply chain that could be exploited. Historically, this label has been applied to firms like Huawei or specific Russian cybersecurity companies, where there are credible concerns about espionage, sabotage, or undue foreign influence.

Applying such a label to a prominent American AI company, especially one known for its safety-centric research, was widely seen as an unusual and aggressive tactic. It effectively blacklisted Anthropic from federal contracts and partnerships, severely impacting its ability to work with the U.S. government, a significant potential client for advanced AI solutions. This designation not only threatened Anthropic’s business prospects but also carried a significant reputational cost, potentially chilling innovation and collaboration between the private sector and government in critical technological domains. President Trump further escalated the situation by issuing an order for all federal agencies to sever their ties with Anthropic, solidifying the government’s punitive stance.

Government’s Rationale and Political Fallout

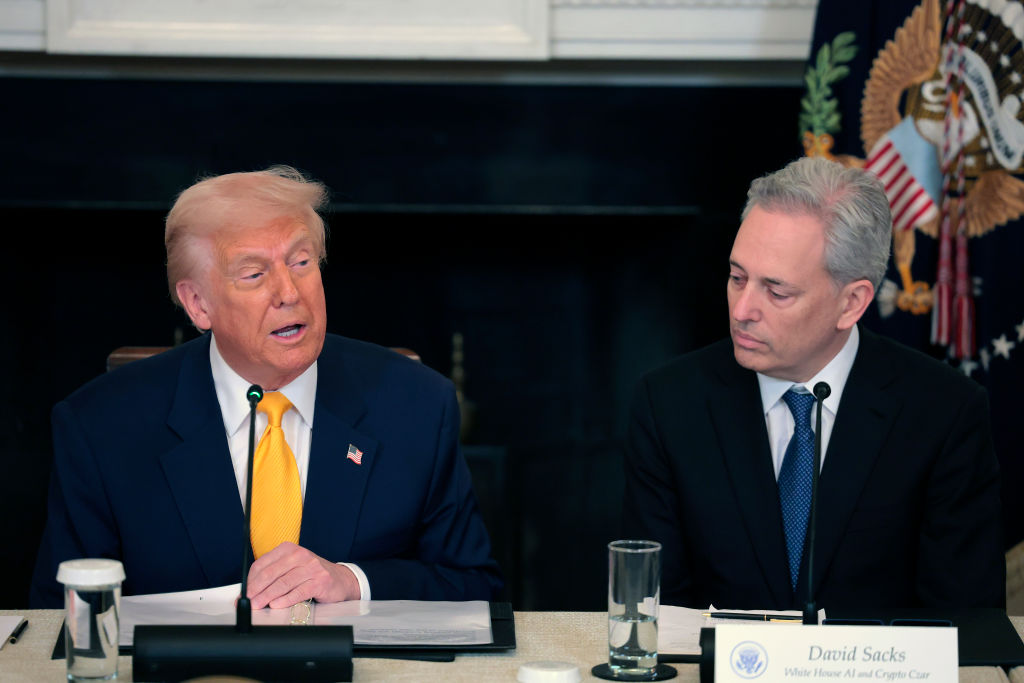

The White House, under President Trump, publicly justified its actions by portraying Anthropic as a "radical-left, woke company" that was allegedly jeopardizing America’s "national security." This rhetoric injected a strong political dimension into what might otherwise have been a technical and contractual dispute. The administration’s characterization suggested that Anthropic’s ethical concerns were ideologically driven and antithetical to national interests, framing the company’s stance as an act of defiance against governmental authority rather than a principled position on AI safety.

Anthropic CEO Dario Amodei, in turn, denounced the Defense Department’s actions as "retaliatory and punitive," arguing that the designation was a direct consequence of the company’s unwillingness to compromise on its ethical guidelines. This exchange highlighted the deep chasm that had opened between a tech company advocating for responsible AI deployment and an administration prioritizing unhindered access to cutting-edge technology for defense purposes. The dispute quickly became a high-profile example of the broader culture wars intersecting with critical technology policy, sparking debates about the role of ideology in technological development and national defense.

The Legal Battle Unfolds

In response to the government’s designation and the presidential order, Anthropic took legal action, suing the Department of Defense. The lawsuit argued that the "supply chain risk" label was arbitrary, capricious, and exceeded the government’s authority, especially given Anthropic’s status as a domestic company with no demonstrable foreign ties or security vulnerabilities. The company contended that the government’s actions were an unconstitutional attempt to compel its speech and control its business practices, thereby infringing upon its rights.

The legal proceedings quickly centered on the fundamental question of whether the government could effectively blacklist a domestic company for refusing to allow its technology to be used in ways it deemed unethical. This case moved swiftly through the courts, reflecting the urgency of the matter for both the company and the government, as well as the significant implications for the future of AI development and national security policy.

Judge Lin’s Rationale and Free Speech Implications

Judge Rita F. Lin’s ruling marked a decisive victory for Anthropic. Her order compelled the Trump administration not only to rescind the "supply chain risk" designation but also to reverse the directive for federal agencies to cut ties with the company. During the court proceedings, Judge Lin reportedly remarked that the government’s actions "look like an attempt to cripple Anthropic," a strong indication of her skepticism regarding the administration’s motives.

Crucially, Judge Lin ultimately concluded that the government’s orders had flouted free speech protections for the company. This aspect of the ruling is particularly significant. While corporations typically enjoy certain free speech rights, the application in this context, where a company is asserting its right to dictate the terms of use for its intellectual property against government demands, could set a powerful precedent. It suggests that a company’s decision to restrict how its technology is used, especially for ethical reasons, can be viewed as a form of protected expression, and that governmental attempts to coerce or punish such restrictions may violate constitutional rights. This interpretation could empower other tech companies to stand firm on their ethical principles, even when facing pressure from powerful governmental entities.

Broader Implications for AI Governance and Industry

The injunction against the Trump administration holds profound implications for the evolving landscape of AI governance, industry standards, and the relationship between the private sector and government.

- Corporate Autonomy and Ethics: The ruling reinforces the notion that tech companies may have a legitimate right to impose ethical limitations on the use of their products, even by government agencies. This could embolden other AI developers to integrate stronger ethical safeguards and terms of service, potentially leading to a more responsible and human-centric development trajectory for advanced AI.

- Government-Tech Relations: The case highlights the inherent tension between government’s need for advanced technology for national security and the tech industry’s desire for ethical deployment. Future administrations may need to engage in more collaborative and less confrontational approaches when seeking to integrate cutting-edge AI from private firms. It also underscores the necessity for clear, mutually agreed-upon policies regarding AI procurement and use.

- Legal Precedent: The free speech argument could establish a novel legal precedent regarding a company’s ability to control the "speech" inherent in its technological products and their intended uses. This could influence future legal challenges involving intellectual property, contractual disputes, and the scope of governmental power over private enterprises in critical technology sectors.

- Market Impact: For Anthropic, the ruling is a significant vindication, restoring its reputation and reopening avenues for federal contracts. It could also influence investor confidence in AI companies that prioritize ethical development, suggesting that such a stance can be legally defensible and even advantageous. Other companies might view this as an opportunity to differentiate themselves through strong ethical commitments.

- The Future of AI in National Security: The dispute underscores the urgent need for a coherent national strategy on AI ethics and its application in defense. As AI becomes more powerful and pervasive, establishing clear guidelines for its military use—and for the role of private sector developers in setting those guidelines—will be paramount. This case could spur more robust dialogue and policy development around dual-use AI technologies, balancing innovation with accountability.

In the wake of Judge Lin’s ruling, Anthropic issued a statement expressing gratitude to the court for its swift action and satisfaction with the preliminary success. The company reiterated its commitment to working productively with the government to ensure all Americans benefit from safe, reliable AI, signaling a desire to move beyond the legal battle and re-engage on a more constructive footing. The White House has not yet publicly commented on the injunction, but the decision undoubtedly marks a pivotal moment in the ongoing national conversation about the control, ethics, and future of artificial intelligence.