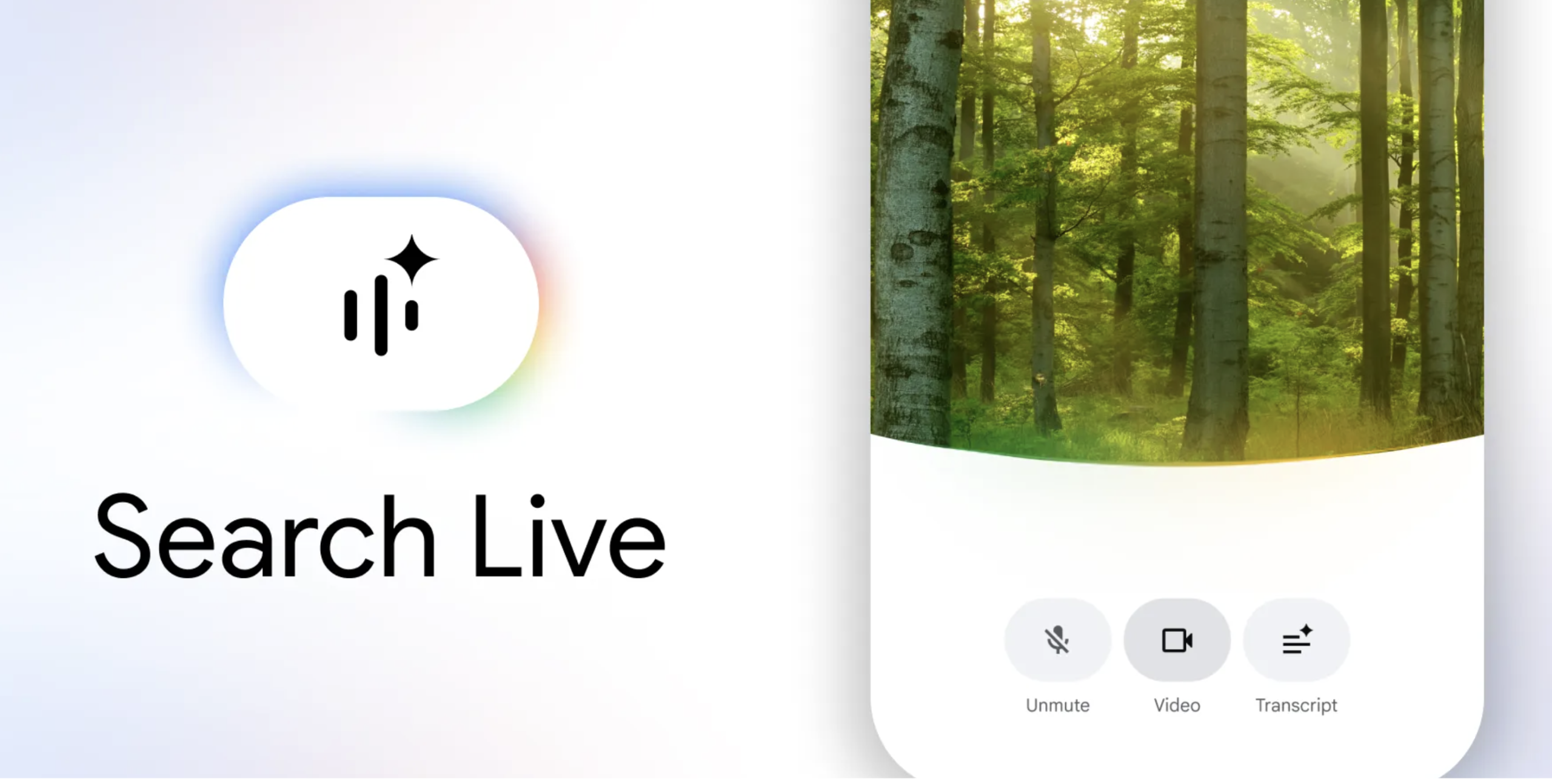

Google has announced a significant expansion of its artificial intelligence capabilities, rolling out Search Live, its multimodal conversational search feature, worldwide. The AI assistant is now available in more than 200 countries and territories, in every language in which Google’s AI Mode is currently offered. The rollout marks a pivotal moment in the evolution of information retrieval, shifting the paradigm from traditional keyword-based queries to dynamic, real-time visual and audio interactions.

The Dawn of Multimodal Search

For decades, Google Search has served as the primary gateway to online information, evolving from a simple text input box to incorporating voice commands and sophisticated image recognition through tools like Google Lens. The introduction of Search Live represents the next frontier: a seamless blend of visual perception, natural language understanding, and conversational AI. This progression reflects a broader industry trend towards more intuitive and context-aware computing, where technology anticipates and responds to human needs more naturally.

Search Live has reached this point in stages. It launched in the United States in July 2025 and later expanded to India. Its core functionality lets users point their smartphone cameras at physical objects or scenes and hold a back-and-forth conversation with the AI, which draws on the live visual context. This goes beyond identifying objects: users can ask complex follow-up questions and receive interactive guidance, changing how people seek information and assistance in their daily lives. Launching first in two large and very different markets, the U.S. and India, likely gave Google valuable data and feedback for optimizing the system before a global deployment.

A Deeper Dive into Search Live’s Capabilities

Search Live is engineered for situations where conventional text or even voice search falls short. Imagine facing a complex DIY project, an unfamiliar plant, or a piece of technology you have never seen before. Instead of trying to describe the object in words or struggling to find the right keywords, users can simply point their device’s camera at it. The AI then "sees" what the user sees and provides immediate, contextually relevant information and solutions. For instance, a user struggling to assemble a new shelving unit can activate Search Live, point the camera at the components, and ask for guidance aloud. The system can then offer step-by-step instructions, help troubleshoot problems, and suggest relevant web links for more detail, all in real time.

The user experience is designed for simplicity and immediacy. To access the feature, users open the Google app on Android or iOS and tap the "Live" icon beneath the search bar. They can then ask questions aloud and receive spoken responses, keeping the conversation going naturally. Moving seamlessly from visual input to spoken dialogue and then to supplementary web resources creates a comprehensive way to find information. Users who already rely on Google Lens for visual searches can also start Live directly from the Lens interface, streamlining the process further. This integration reflects Google’s push to embed advanced AI capabilities across its product suite and make them accessible to a broad user base.

The Technological Backbone: Gemini 3.1 Flash Live

Powering this global expansion is Gemini 3.1 Flash Live, Google’s new audio and voice model. The model is central to the natural, conversational experience that defines Search Live. Gemini 3.1 Flash Live is optimized for speed, efficiency, and a nuanced understanding of both spoken language and ambient audio, alongside visual input. Its ability to process information quickly and generate coherent, contextually appropriate responses in real time represents a significant technological leap.

The "Flash" designation implies a focus on low-latency interactions, which is critical for a feature designed to provide immediate assistance. This speed allows the AI to maintain the flow of conversation even as the visual context changes or as users pose complex, multi-part questions. The underlying architecture of Gemini 3.1 Flash Live likely incorporates neural networks capable of multimodal reasoning, enabling it to combine information from several inputs at once. This fusion of sight and sound processing is what lets Search Live "understand" and respond to the world around the user interactively. The continued development of the Gemini family of models is central to Google’s strategy in the ongoing AI race, positioning it at the forefront of conversational and multimodal AI innovation.

Expanding the AI Ecosystem: Global Translate Enhancements

Beyond Search Live, Google is also significantly enhancing its translation capabilities, announcing the expansion of Google Translate’s "Live Translate" feature to iOS devices and a host of new countries. This feature, which facilitates real-time audio translation through headphones, is now accessible to users in Germany, Spain, France, Nigeria, Italy, the United Kingdom, Japan, Bangladesh, and Thailand, among other regions. This broadens the reach of instant, spoken language translation, making global communication more seamless than ever.

With this expansion, people on both Android and iOS can use any pair of headphones to hear real-time translations across more than 70 languages. This is a major step towards breaking down language barriers in social and professional settings. From international travel and business meetings to cross-cultural education and personal conversations, Live Translate offers an immediate way to understand and be understood in diverse linguistic environments. Building such translation technology into a widely used app like Google Translate underscores the company’s vision of a more interconnected and comprehensible world, powered by AI.

Transforming User Interaction and Information Access

The global rollout of Search Live and the enhanced Live Translate feature signals a profound shift in human-computer interaction. Users are no longer confined to explicit keyword searches or predefined commands; instead, they can engage with technology in a way that mimics natural human conversation and perception. This change has far-reaching social and cultural implications. For people in developing nations or those with limited digital literacy, a camera-based conversational interface could be significantly more accessible than typing. It democratizes access to information, allowing users to turn their immediate surroundings into a query.

Consider the educational impact: students could point their cameras at complex diagrams or scientific experiments and receive immediate, interactive explanations. For people with visual impairments or motor disabilities, spoken interaction combined with visual context offers a valuable tool for navigating the world and accessing information independently. The cultural impact extends to how knowledge is shared and acquired, fostering a more personalized and adaptive learning experience. Furthermore, the ability to translate conversations instantly across dozens of languages can foster greater understanding and collaboration across diverse communities, potentially reducing communication friction in an increasingly globalized society.

Market Implications and the Future of Search

From a market perspective, Google’s aggressive expansion of these AI features is a strategic maneuver in the intensely competitive landscape of artificial intelligence. With rivals like OpenAI, Microsoft, and Meta rapidly advancing their own AI models and applications, Google is leveraging its long-standing dominance in search and its vast ecosystem of user data to maintain its leadership position. Search Live and Live Translate are not just new features; they represent a fundamental reimagining of what a search engine can be, moving towards an "answer engine" that proactively assists users rather than merely listing links.

This shift could have significant implications for traditional search advertising models. As users engage in more conversational and direct interactions with AI assistants, the path to discovery for products and services might change. Businesses will need to adapt their digital strategies to ensure their offerings are discoverable and relevant within these new AI-driven interfaces. The potential for integrating e-commerce directly into conversational AI, where users can ask for product recommendations based on visual cues and make purchases within the same interaction, is immense. Moreover, the enhanced accessibility and utility of these tools could expand the overall market for digital services, bringing new users online and deeper into Google’s ecosystem.

Navigating the New Frontier: Challenges and Opportunities

While the potential benefits are substantial, the global deployment of such powerful AI tools also presents challenges. Ensuring the accuracy and impartiality of AI-generated responses across diverse contexts and cultures is paramount. The ethical considerations surrounding data privacy, the potential for algorithmic bias, and the responsible use of multimodal AI remain critical areas of ongoing development and scrutiny. Google, like all major AI developers, must navigate these complex issues with transparency and user trust at the forefront.

User adoption rates will also be a key factor. While the technology is impressive, integrating new habits into daily routines takes time. Education and clear demonstrations of practical utility will be essential for widespread embrace. However, the opportunities presented by this new frontier are equally compelling. The future could see these AI capabilities integrated into augmented reality devices, smart home systems, and even robotics, creating an ambient intelligence that proactively assists users throughout their day. Google’s global expansion of Search Live and Live Translate is not merely an update; it is a declaration of intent, signaling a future where AI is deeply embedded in the fabric of how humanity interacts with information and each other.