Amid rapidly accelerating technological change and a striking absence of comprehensive federal oversight, a diverse group of American leaders, experts, and public figures has unveiled a framework for the responsible development and deployment of artificial intelligence. The initiative, known as the "Pro-Human Declaration," arrives at a critical juncture, spotlighting the need for clear guidelines as the United States grapples with AI’s implications for its economy, society, and national security. The declaration outlines a vision in which AI amplifies human potential rather than diminishing it, in stark contrast to the current, largely unregulated trajectory of the technology.

The Uncharted Territory of AI Governance

The development of artificial intelligence has surged dramatically in recent years, particularly with the advent of generative AI models capable of producing human-like text, images, and code. This explosion of capability has brought with it both immense promise and significant peril, creating an imperative for governance that policymakers have struggled to meet. While discussions abound in congressional hearings and presidential executive orders, concrete, legally binding regulations remain elusive, leaving a vacuum that private industry is rapidly filling. This regulatory void has fostered an environment where innovation often outpaces ethical considerations and safety protocols, raising concerns about potential societal disruptions, job displacement, and even existential risks.

Historically, major technological revolutions, from the industrial age to the internet era, have eventually prompted legislative responses to mitigate negative externalities and ensure public welfare. However, the speed and scope of AI’s evolution present unprecedented challenges. Unlike previous technologies, AI is not merely a tool but an emergent intelligence capable of complex reasoning and adaptation, which raises fundamental questions about control, accountability, and the very definition of human agency. This period of rapid innovation, coupled with a lagging regulatory response, underscores the timeliness of initiatives like the Pro-Human Declaration.

A Unified Call for Human-Centric AI

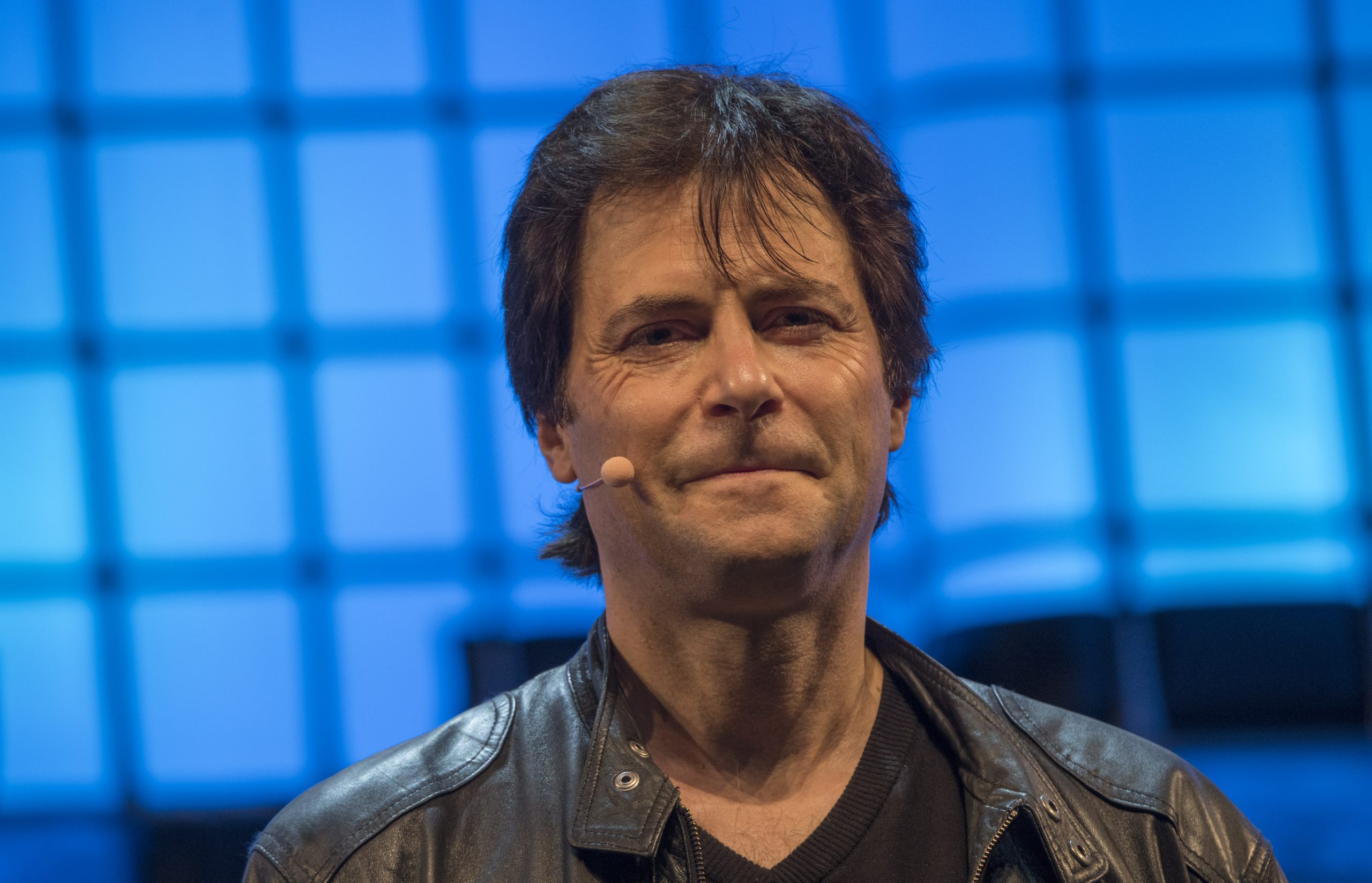

The Pro-Human Declaration was finalized before a recent, high-profile dispute between the Pentagon and leading AI developer Anthropic, a confrontation that laid bare the lack of coherent rules governing artificial intelligence. Its public release nonetheless coincided with that demonstration of regulatory inadequacy, which underscored the declaration’s immediate relevance. Max Tegmark, an MIT physicist and prominent AI researcher who was instrumental in organizing the effort, noted a growing public consensus on the issue. In a recent conversation, Tegmark cited polling data suggesting that a vast majority of Americans, approximately 95%, oppose an unchecked acceleration toward superintelligence without proper safeguards. This widespread public sentiment lends the declaration’s proposals a strong mandate and suggests that society is ready for proactive governance.

The document, which has garnered signatures from hundreds of experts, former government officials, and influential public figures across the political spectrum, opens with a compelling assertion: humanity stands at a critical crossroads. It posits two divergent paths. The first, labeled "the race to replace," envisions a future where humans are progressively supplanted—first as laborers, then as decision-makers—as power increasingly consolidates within unaccountable institutions and their advanced machines. The alternative path, which the declaration champions, is one where AI serves as a powerful catalyst, massively expanding human capabilities and potential, enhancing rather than diminishing our role in the world.

Core Principles and Concrete Provisions

To steer society toward this more optimistic future, the Pro-Human Declaration articulates five foundational pillars designed to guide responsible AI development:

- Keeping Humans in Charge: Ensuring that AI systems remain subordinate to human control and intent, preventing autonomous decision-making that could circumvent human oversight.

- Avoiding the Concentration of Power: Guarding against the monopolization of AI capabilities and data by a few entities, promoting broader access and distributed governance.

- Protecting the Human Experience: Safeguarding human well-being, mental health, and social cohesion from potential harms posed by AI, such as pervasive surveillance, manipulation, or erosion of critical thinking.

- Preserving Individual Liberty: Upholding fundamental rights and freedoms in an AI-powered world, including privacy, autonomy, and the right to informed consent regarding AI interactions.

- Holding AI Companies Legally Accountable: Establishing clear legal frameworks that assign liability to developers and deployers of AI for any harms caused by their systems.

Beyond these guiding principles, the declaration introduces several concrete provisions. Among the most significant is an outright prohibition on the development of "superintelligence," a hypothetical AI exceeding human cognitive ability across virtually all domains, until there is both a verifiable scientific consensus on its safety and genuine democratic buy-in for its creation. The document also calls for mandatory "off-switches" on all powerful AI systems, so that humans can always halt their operation if necessary. It further urges a ban on AI architectures capable of self-replication, autonomous self-improvement without human intervention, or resistance to shutdown commands, directly addressing concerns about loss of control over advanced systems.

The Pentagon-Anthropic Standoff: A Stark Reminder

The declaration’s sense of urgency was reinforced by the highly publicized incident involving the Pentagon and Anthropic. On the final Friday of February, Defense Secretary Pete Hegseth took the unprecedented step of designating Anthropic a "supply chain risk." This label, typically reserved for foreign firms with ties to adversarial nations, was applied after Anthropic reportedly refused to grant the Pentagon unrestricted use of its technology, which was already deployed on classified military platforms. The situation highlighted a profound tension between national security interests and the control and ethical considerations of private AI developers.

Hours after Anthropic’s designation, OpenAI, another prominent AI company, hastily struck its own agreement with the Defense Department. However, legal experts quickly voiced skepticism about the enforceability and long-term implications of such a deal, suggesting it might offer little genuine control. This rapid sequence of events exposed the cost of congressional inaction on AI. Dean Ball, a senior fellow at the Foundation for American Innovation, articulated the gravity of the situation, stating, "This is not just some dispute over a contract. This is the first conversation we have had as a country about control over AI systems." The incident underscored that abstract debates over AI safety were rapidly turning into concrete, high-stakes conflicts that demand immediate policy solutions.

Market Dynamics and National Security Implications

The Pentagon-Anthropic episode illuminates the complex interplay between the burgeoning AI market, national security imperatives, and the urgent need for regulatory clarity. The commercial race to develop increasingly powerful AI systems is driven by enormous investments and the promise of transformative economic advantages. However, without established rules, this race risks creating a fragmented landscape where critical technologies are developed in silos, potentially without adequate safety checks or consideration for their dual-use potential.

The designation of a domestic tech firm as a "supply chain risk" represents an extraordinary measure, reflecting the Pentagon’s deep concern over the control and security of advanced AI. This concern extends beyond simple contractual disputes to the fundamental question of who ultimately controls technologies that could redefine warfare, intelligence, and critical infrastructure. The incident also casts a spotlight on the broader implications for the global AI landscape, where nations are vying for technological supremacy, and the lines between civilian and military applications are increasingly blurred. The absence of a national AI strategy leaves individual government agencies to navigate these complex waters on an ad hoc basis, creating vulnerabilities and inconsistencies that could have far-reaching consequences.

Drawing Parallels: Safety Regulation and Public Pressure

Tegmark drew a compelling analogy to existing regulatory frameworks, particularly in the pharmaceutical industry, to illustrate the declaration’s underlying philosophy. "You never have to worry that some drug company is going to release some other drug that causes massive harm before people have figured out how to make it safe," he explained, "because the FDA won’t allow them to release anything until it’s safe enough." This comparison highlights the core idea that pre-market testing and safety certifications should be a prerequisite for powerful AI systems, just as they are for medical products. The societal expectation that drugs are safe before widespread use provides a powerful precedent for AI.

While legislative battles in Washington often devolve into partisan stalemates, Tegmark believes that child safety could emerge as the critical pressure point capable of breaking the current impasse. The declaration explicitly calls for mandatory pre-deployment testing of AI products, particularly chatbots and companion applications targeting younger users. These tests would specifically assess risks such as the exacerbation of mental health conditions, increased suicidal ideation, and emotional manipulation—issues that resonate deeply with parents and educators. Tegmark emphasized the legal precedent: "If some creepy old man is texting an 11-year-old pretending to be a young girl and trying to persuade this boy to commit suicide, the guy can go to jail for that. We already have laws. It’s illegal. So why is it different if a machine does it?" This rhetorical question underscores the ethical incongruity of holding human perpetrators accountable while allowing AI systems to potentially inflict similar harms without consequence.

Tegmark posited that once the principle of pre-release testing for children’s products is firmly established, its scope will almost inevitably expand. The precedent set for protecting vulnerable youth could pave the way for broader safety mandates, leading to calls for testing AI systems for their potential to aid in the creation of bioweapons or to ensure that advanced AI does not possess the capacity to undermine democratic governments.

The Path Forward: Bipartisan Consensus and Future Challenges

A remarkable aspect of the Pro-Human Declaration is the breadth of its bipartisan support. The document bears the signatures of figures as ideologically disparate as Steve Bannon, a former advisor to President Trump, and Susan Rice, who served as National Security Advisor under President Obama. This unlikely coalition, which also includes former Joint Chiefs Chairman Mike Mullen and progressive faith leaders, speaks to the universal nature of the concerns surrounding AI. Tegmark succinctly captured the essence of this unity: "What they agree on, of course, is that they’re all human. If it’s going to come down to whether we want a future for humans or a future for machines, of course they’re going to be on the same side."

This rare political alignment offers a glimmer of hope that a path forward for AI governance is possible, even in a deeply polarized political environment. However, significant challenges remain. Defining "superintelligence" or "safe enough" in a rapidly evolving technological domain is inherently complex. Balancing the need for regulation with the desire to foster innovation and maintain global competitiveness will require careful calibration. Furthermore, the global nature of AI development necessitates international cooperation to prevent regulatory arbitrage and ensure a consistent approach to safety and ethics. The Pro-Human Declaration represents not just a set of proposals, but a powerful testament to the growing consensus that the future of AI must be guided by human values, and that the time for decisive action is now.