San Francisco, CA – Anthropic, a prominent artificial intelligence research company, has introduced a sophisticated AI-driven code review tool designed to meticulously scrutinize the burgeoning volume of AI-generated software code. This new offering, named Code Review, integrates directly into existing developer workflows, aiming to enhance software quality, mitigate risks, and accelerate the development cycle in an era increasingly defined by machine-assisted programming. The launch arrives at a critical juncture for the software industry, which is grappling with the unprecedented speed and scale of code production facilitated by advanced AI models.

The Evolution of Software Development and the Rise of AI Coding

For decades, the bedrock of robust software engineering has been human peer review. Developers traditionally submit their code changes, known as pull requests, for scrutiny by colleagues. This collaborative process is invaluable for identifying logical errors, ensuring code consistency, adhering to best practices, and preventing security vulnerabilities from propagating into a larger codebase. It’s a fundamental mechanism for maintaining high standards and collective knowledge within development teams.

The landscape of software creation, however, has undergone a profound transformation with the advent of large language models (LLMs) capable of generating code. This shift has given rise to a new paradigm often colloquially termed "vibe coding," where developers leverage AI tools to translate plain language instructions into substantial blocks of functional code with remarkable speed. While this innovation promises significant gains in productivity and reduces the manual effort involved in writing boilerplate or complex algorithms, it simultaneously introduces a new set of challenges. The speed of generation often outpaces the capacity for human review, leading to a potential influx of poorly understood code, subtle bugs, and overlooked security flaws. This trade-off between velocity and veracity has created an urgent demand for automated solutions that can keep pace with AI’s output.

Early forms of AI assistance in coding, such as intelligent auto-completion and syntax checkers, have been commonplace for years. These tools incrementally improved developer efficiency by reducing repetitive tasks and catching trivial errors. However, the current generation of AI, exemplified by models like Anthropic’s Claude Code, represents a qualitative leap. These systems can generate entire functions, classes, or even significant portions of applications from high-level prompts. Enterprises across various sectors have rapidly adopted these tools, recognizing their potential to dramatically shorten development timelines and empower smaller teams to achieve more. This rapid adoption, however, has inadvertently created a new bottleneck: the human capacity to review and validate the sheer volume of code now being produced.

Anthropic’s Code Review: A Deep Dive into the Solution

Anthropic’s Code Review product is positioned as a direct answer to this growing challenge. Launched initially for Claude for Teams and Claude for Enterprise customers as a research preview, this AI reviewer is engineered to intercept potential issues before they become embedded within a project’s core software. Cat Wu, Anthropic’s head of product, articulated the market demand, noting, "We’ve seen a lot of growth in Claude Code, especially within the enterprise, and one of the questions that we keep getting from enterprise leaders is: Now that Claude Code is putting up a bunch of pull requests, how do I make sure that those get reviewed in an efficient manner?" The exponential increase in code output from tools like Claude Code has led to a corresponding surge in pull requests, creating a review backlog that can significantly impede the speed at which new features or bug fixes can be shipped.

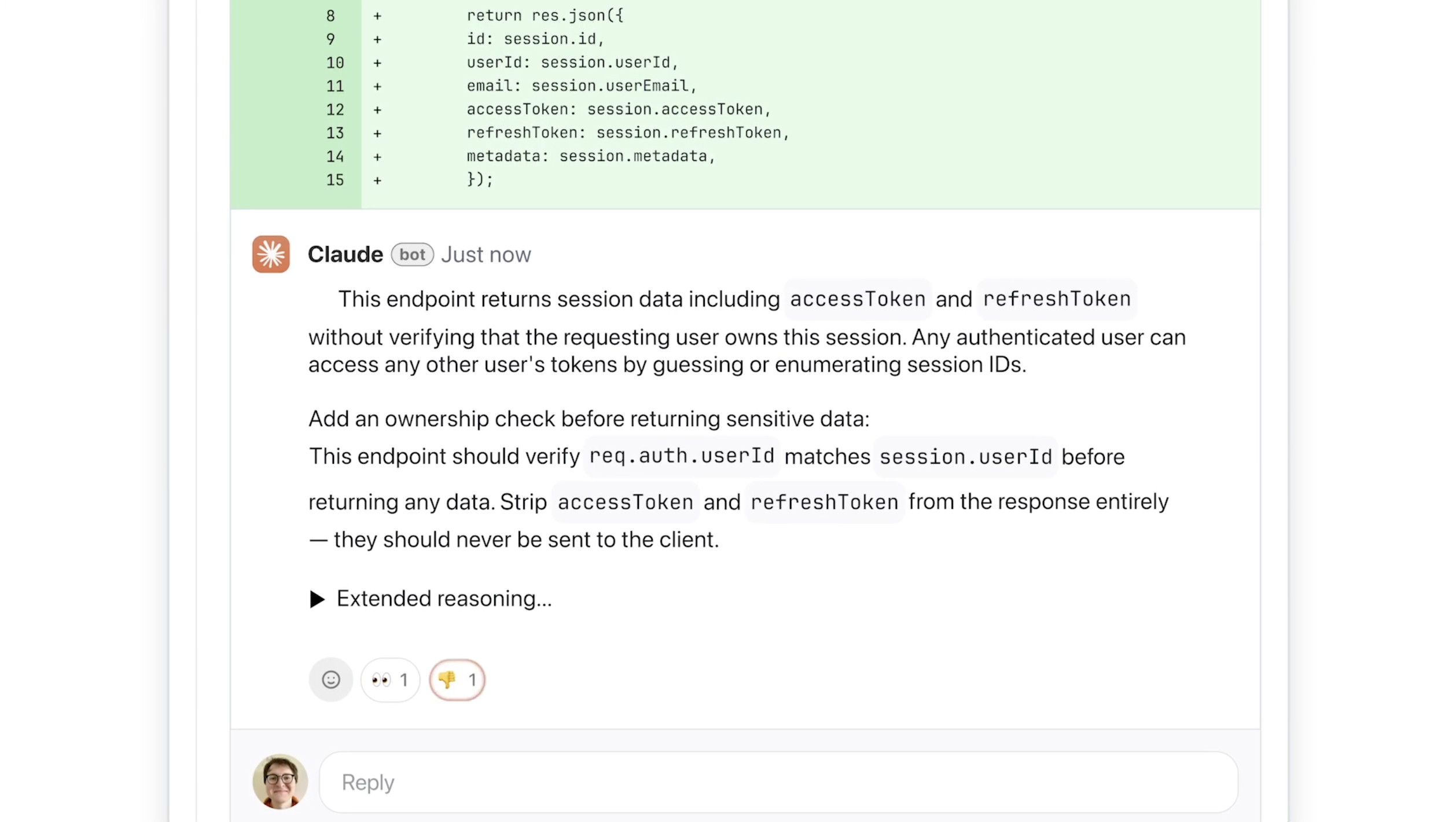

The Code Review system is designed for seamless integration into existing developer toolchains, particularly with GitHub, a widely used platform for version control and collaborative software development. Once enabled by developer leads, the tool automatically analyzes incoming pull requests. It then provides direct feedback on the code, flagging potential issues and offering actionable suggestions for remediation. A key differentiator highlighted by Wu is the tool’s focused approach: it primarily targets logical errors rather than subjective stylistic preferences. This strategic decision is crucial for ensuring the utility and acceptance of AI-driven feedback among developers. Automated style guides have existed for years, often leading to minor, non-critical suggestions that can annoy or distract human developers. By prioritizing logic, Anthropic aims to deliver feedback that is immediately relevant and impactful for improving software functionality and stability.

The system communicates its findings with clarity, providing step-by-step explanations of perceived issues, their potential implications, and concrete proposals for resolution. To aid developers in prioritizing their efforts, Code Review employs a color-coded severity labeling system:

- Red: Indicates issues of the highest severity, demanding immediate attention due to potential critical bugs or security vulnerabilities.

- Yellow: Denotes potential problems that warrant careful review, suggesting areas for optimization or improvement that might not be immediately critical but could impact long-term stability or performance.

- Purple: Identifies issues related to pre-existing code or historical bugs, helping developers understand the broader context of a new change within the legacy codebase.
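
To make the labeling scheme concrete, here is a minimal sketch of how a team might sort review findings by these three labels on its own side. The `Severity` and `Finding` types, the `triage` function, and the numeric ordering are hypothetical illustrations, not part of Anthropic's product or any published API.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    # Lower value sorts first. This ordering is an assumption for illustration.
    RED = 0      # highest severity: critical bugs or security issues
    YELLOW = 1   # potential problems that warrant careful review
    PURPLE = 2   # issues tied to pre-existing code or historical bugs


@dataclass(frozen=True)
class Finding:
    severity: Severity
    file: str
    message: str


def triage(findings):
    """Return findings ordered from most to least urgent (stable sort)."""
    return sorted(findings, key=lambda finding: finding.severity)


# Hypothetical findings, invented for this example.
example = [
    Finding(Severity.PURPLE, "billing/legacy.py", "old rounding bug nearby"),
    Finding(Severity.RED, "auth/session.py", "token never expires"),
    Finding(Severity.YELLOW, "api/handlers.py", "retry loop has no backoff"),
]

for finding in triage(example):
    print(f"[{finding.severity.name}] {finding.file}: {finding.message}")
```

Sorting red first mirrors the article's description of red as demanding immediate attention; how a given team weighs yellow against purple is a local choice.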

Underpinning this sophisticated analysis is a multi-agent architecture. This innovative design allows multiple specialized AI agents to examine the codebase concurrently, each approaching the review from a distinct perspective or dimension. For instance, one agent might focus on data flow integrity, another on error handling, and yet another on algorithmic efficiency. A final, aggregating agent then synthesizes these individual findings, eliminates redundancies, and prioritizes the most critical and actionable insights. This parallel processing capability contributes to the tool’s efficiency and depth of analysis.
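
The fan-out and aggregation pattern described above can be sketched in a few lines. This is an illustration of the general shape only: the three agent functions below are simple string heuristics standing in for model calls, and every name (`data_flow_agent`, `review`, `RANK`, and so on) is hypothetical rather than taken from Anthropic's implementation.

```python
from concurrent.futures import ThreadPoolExecutor

# Assumed ranking of the color labels; lower means more urgent.
RANK = {"red": 0, "yellow": 1, "purple": 2}


def data_flow_agent(diff):
    # Stand-in heuristic for a data-flow check.
    if "user_input" in diff:
        return [("red", "unvalidated user_input reaches a query")]
    return []


def error_handling_agent(diff):
    # Stand-in heuristic for an error-handling check.
    findings = []
    if "except:" in diff:
        findings.append(("yellow", "bare except hides failures"))
    if "user_input" in diff:
        # Deliberately overlaps with data_flow_agent to show deduplication.
        findings.append(("red", "unvalidated user_input reaches a query"))
    return findings


def efficiency_agent(diff):
    # Stand-in heuristic for an efficiency check.
    if diff.count("for ") >= 2:
        return [("yellow", "nested loop over the same list")]
    return []


AGENTS = [data_flow_agent, error_handling_agent, efficiency_agent]


def review(diff):
    """Run every agent concurrently, then merge, dedupe, and rank findings."""
    with ThreadPoolExecutor(max_workers=len(AGENTS)) as pool:
        per_agent = list(pool.map(lambda agent: agent(diff), AGENTS))

    unique = {}  # message -> most severe label seen for that message
    for findings in per_agent:
        for severity, message in findings:
            if message not in unique or RANK[severity] < RANK[unique[message]]:
                unique[message] = severity

    merged = [(severity, message) for message, severity in unique.items()]
    return sorted(merged, key=lambda item: RANK[item[0]])


sample_diff = (
    "for a in xs:\n"
    "    for b in xs:\n"
    "        try:\n"
    "            run_query(user_input)\n"
    "        except:\n"
    "            pass\n"
)
for severity, message in review(sample_diff):
    print(severity, "-", message)
```

The aggregation step is where the design earns its keep: two agents report the same data-flow problem, but the reader sees it once, ahead of the lower-severity items.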

Addressing Industry Bottlenecks and Enhancing Security

The bottleneck created by an overwhelming number of pull requests is a tangible problem for the development cycles of many organizations. As companies increasingly rely on AI to accelerate feature delivery, the subsequent human review phase often becomes the slowest link in the chain. Anthropic’s Code Review seeks to alleviate this pressure, enabling enterprises such as Uber, Salesforce, and Accenture, all of which already use Claude Code, to manage their growing code output more effectively. By automating a significant portion of the initial review, the tool frees human developers to focus on complex architectural decisions, innovative problem-solving, and the most critical aspects of peer feedback. This shift promises to accelerate time-to-market for new products and features, providing a competitive edge in rapidly evolving digital landscapes.

Beyond logical error detection, Code Review also incorporates a light security analysis. For organizations requiring a more comprehensive and dedicated security audit, Anthropic offers Claude Code Security, a specialized product designed for deeper vulnerability detection. This layered approach allows enterprises to choose the level of security scrutiny appropriate for their specific needs and risk profiles. Furthermore, engineering leads can customize Code Review to include additional checks tailored to their organization’s internal best practices, coding standards, and compliance requirements. This flexibility ensures that the AI reviewer aligns with a company’s unique development culture and regulatory obligations.

Market Dynamics and Strategic Positioning

Anthropic’s launch of Code Review comes at a pivotal moment for the company, underscoring its strategic focus on the enterprise market. The firm has seen substantial growth in its enterprise business, with subscriptions reportedly quadrupling since the beginning of the year. Claude Code, in particular, has demonstrated impressive commercial traction, with its run-rate revenue having surpassed $2.5 billion since launch. This financial performance highlights the significant demand for AI-powered development tools within large organizations.

The timing of this product release also coincides with Anthropic navigating significant legal challenges. The company recently filed two lawsuits against the Department of Defense following its designation as a supply chain risk. In such an environment, solidifying and expanding its enterprise client base becomes even more crucial. Products like Code Review reinforce Anthropic’s commitment to providing indispensable tools that solve tangible business problems for its corporate customers, potentially strengthening its market position and revenue streams amidst external pressures.

The competitive landscape for AI development tools is intensifying, with major tech players and numerous startups vying for market share. While many offerings focus on code generation, the niche of AI-driven code review presents a distinct opportunity. By addressing the post-generation quality assurance challenge, Anthropic is positioning itself as a comprehensive partner in the AI-assisted software development lifecycle, moving beyond mere code creation to encompass the entire development pipeline.

Economic and Social Implications

The economic implications of Code Review are multifaceted. While the tool is a premium offering, with an estimated cost of $15 to $25 per review based on code complexity (utilizing a token-based pricing model common in AI services), the value proposition for enterprises is substantial. The ability to catch critical bugs earlier in the development cycle translates directly into reduced debugging costs, fewer post-release patches, and enhanced software reliability, which can save companies millions in the long run. Moreover, the acceleration of development cycles means products can reach the market faster, capturing revenue opportunities sooner.
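
As a rough back-of-the-envelope illustration of what that price range means for a team, the sketch below multiplies the article's $15 to $25 per-review estimate by a monthly review count. The figure of 200 reviews per month and the `monthly_review_cost` helper are hypothetical, and because actual billing is token-based, real costs would vary with the size and complexity of each pull request.

```python
def monthly_review_cost(reviews_per_month, low=15.0, high=25.0):
    """Return (low, high) estimated monthly spend in dollars.

    The default per-review range comes from the estimate quoted in the
    article; the review count is supplied by the caller.
    """
    return reviews_per_month * low, reviews_per_month * high


# Hypothetical team opening 200 reviewed pull requests per month.
low_total, high_total = monthly_review_cost(200)
print(f"Estimated monthly spend: ${low_total:,.0f} to ${high_total:,.0f}")
```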

From a social and cultural perspective, the introduction of advanced AI code reviewers signals a further evolution in the human-AI collaboration paradigm within technology. Developers are not being replaced but augmented. Their roles are shifting from writing every line of code to orchestrating AI-generated code, refining it, and focusing on higher-level architectural design and complex problem-solving. This requires a new skill set, including prompt engineering, critical evaluation of AI outputs, and effective integration of AI tools into existing workflows. The fear of job displacement often associated with AI is mitigated by the reality that these tools create new efficiencies and allow human talent to be deployed more strategically.

However, the reliance on AI for critical tasks like code review also introduces considerations around explainability and bias. While Anthropic emphasizes the tool’s step-by-step reasoning, ensuring full transparency and the ability for human developers to understand and override AI suggestions will remain paramount. The potential for AI models to perpetuate or even amplify biases present in their training data, if not carefully managed, could lead to unforeseen issues in the generated and reviewed code.

Future Outlook

The launch of Code Review marks a significant step toward a more fully integrated and AI-optimized software development lifecycle. As AI models continue to advance, the capabilities of such review tools are expected to grow, encompassing more sophisticated security analyses, performance optimizations, and even proactive architectural suggestions. The goal, as articulated by Wu, is to "enable enterprises to build faster than they ever could before, and with much fewer bugs than they ever had before."

The ongoing evolution of AI in coding necessitates a continuous dialogue between AI developers, software engineers, and organizational leaders. Balancing the immense potential for acceleration with the imperative for quality, security, and human oversight will be key to unlocking the full benefits of this technological revolution. Anthropic’s Code Review tool represents a crucial component in navigating this complex, yet promising, future of software engineering.