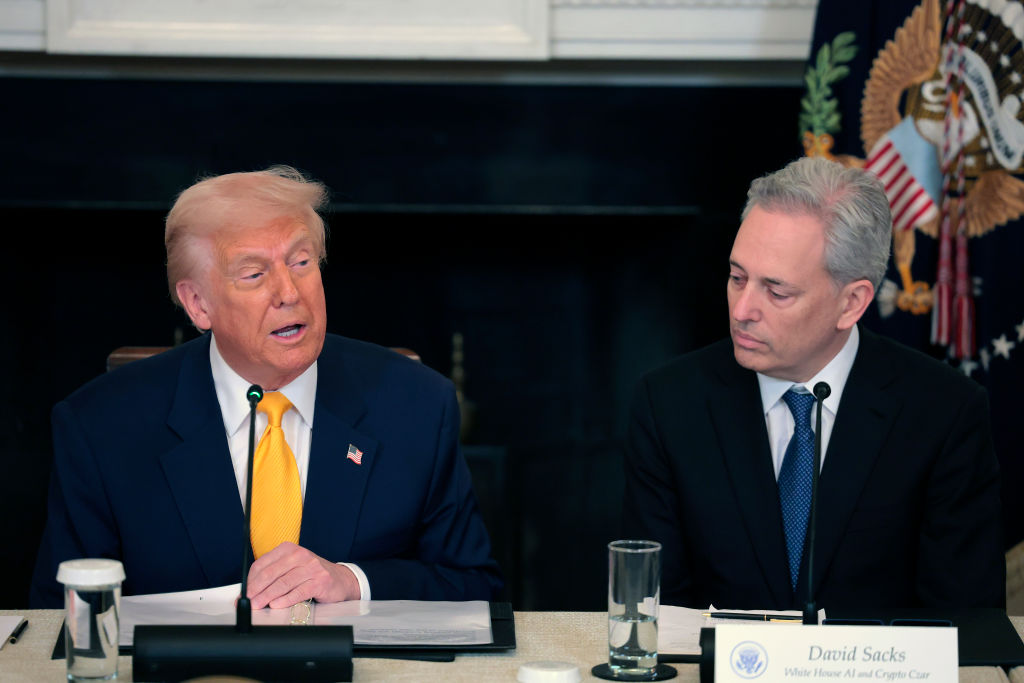

A pivotal moment in artificial intelligence governance is unfolding: Dario Amodei, CEO of leading AI developer Anthropic, has publicly refused the Pentagon’s demand for unrestricted access to the company’s advanced AI systems. This firm stance, articulated less than 24 hours before a deadline set by Defense Secretary Pete Hegseth, highlights the growing tension between technological innovation, national security imperatives, and the ethical considerations surrounding powerful AI. Amodei’s declaration underscores a fundamental disagreement over the appropriate boundaries for AI deployment, particularly concerning its potential military applications.

A Confrontation Over AI’s Future

The core of the dispute centers on the U.S. Department of Defense’s insistence that it should be able to utilize Anthropic’s sophisticated AI models for all lawful purposes, without limitations imposed by a private entity. Conversely, Amodei, in a statement issued on Thursday, February 26, 2026, asserted that he "cannot in good conscience accede to [the Pentagon’s] request." He acknowledged the Department of Defense’s prerogative in making military decisions but emphasized that "in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values." He further elaborated that certain uses simply fall "outside the bounds of what today’s technology can safely and reliably do."

Specifically, Anthropic has outlined two red lines: the mass surveillance of American citizens and the development or deployment of fully autonomous weapons systems operating without human oversight. This ethical firewall erected by Anthropic challenges the traditional paradigm of defense procurement, where technology providers typically cede control over the application of their innovations once sold to the military. The impending deadline, Friday at 5:01 p.m., marks a critical juncture that could redefine the relationship between Silicon Valley’s cutting-edge AI labs and the nation’s defense apparatus.

Anthropic’s Foundational Principles

To fully grasp the significance of Amodei’s position, it is essential to understand Anthropic’s origins and philosophical underpinnings. Founded in 2021 by former senior members of OpenAI’s safety and policy team, including Dario and Daniela Amodei, Anthropic was established with a mission to develop advanced AI while prioritizing safety and alignment. The founders departed OpenAI partly due to differing views on the commercialization pace and safety protocols of large language models. This background deeply informs their current stance.

Anthropic is renowned for its "Constitutional AI" approach, a methodology designed to align AI models with human values by providing them with a set of principles, or a "constitution," to guide their behavior. This innovative technique aims to make AI systems more helpful, harmless, and honest, reducing the risk of unintended or malicious outputs. Their flagship model, Claude, has quickly become a prominent competitor in the generative AI space, known for its advanced reasoning capabilities and robust safety features. This focus on ethical development is not merely a marketing strategy but a core tenet of the company’s identity and a primary driver behind its reluctance to compromise on its principles, especially in the sensitive context of military applications. The company has attracted significant investment from tech giants like Google and Amazon, underscoring its stature as a frontier AI lab with considerable influence and technological prowess.

The Pentagon’s Urgent Imperative

The Department of Defense’s pursuit of Anthropic’s technology is rooted in a broader strategic imperative to maintain technological superiority in an increasingly complex global landscape. The Pentagon views advanced AI as crucial for enhancing intelligence gathering, logistical operations, cybersecurity, and future warfare capabilities. Initiatives such as the Joint Artificial Intelligence Center (JAIC), established in 2018 and folded into the Chief Digital and Artificial Intelligence Office in 2022, and the Defense Innovation Unit (DIU) reflect a concerted effort to accelerate the adoption of AI across all military branches.

The urgency is further amplified by the ongoing global "AI race," with nations like China and Russia heavily investing in their own AI capabilities for defense. The U.S. military’s doctrine emphasizes the ethical and responsible use of AI, as outlined in its 2020 Ethical Principles for Artificial Intelligence. However, the Pentagon’s interpretation of these principles often differs from the stricter ethical frameworks championed by private AI developers. For the Pentagon, the ability to integrate cutting-edge AI without custom limitations from a vendor is paramount to operational flexibility and strategic readiness. Anthropic is one of the few "frontier AI labs that has classified-ready systems for the military," which makes Claude a uniquely valuable asset and the current standoff particularly high-stakes.

Escalating Tensions and Legal Levers

The Department of Defense has attempted to exert significant pressure on Anthropic to comply with its demands. Defense Secretary Hegseth’s office has reportedly explored two severe measures: classifying Anthropic as a "supply chain risk" or invoking the Defense Production Act (DPA). The "supply chain risk" designation is typically reserved for foreign adversaries or entities deemed to pose a national security threat, making its potential application to a U.S.-based, highly regarded AI firm an unprecedented and potentially damaging move.

Even more drastic is the threat of invoking the Defense Production Act. The DPA, enacted in 1950 during the Korean War, grants the President broad authority to compel private companies to prioritize or expand production of materials and services deemed essential for national defense. While historically used to secure resources like steel, medical supplies, or semiconductor components, its application to force an AI company to alter its ethical policies regarding its proprietary software would mark a novel and highly controversial expansion of governmental power over private technological development.

Amodei quickly highlighted the inherent contradiction in these threats: "One labels us a security risk; the other labels Claude as essential to national security." This observation underscores the dilemma faced by the Pentagon, which simultaneously needs Anthropic’s technology and is frustrated by its ethical boundaries. Despite the pressure, Amodei reiterated Anthropic’s preference to continue serving the Department of Defense, provided its two requested safeguards remain in place. He also expressed a willingness to facilitate a smooth transition to another provider should the Department decide to "offboard" Anthropic, aiming to prevent any disruption to critical military operations.

Historical Echoes and Emerging Precedents

This confrontation is not without historical parallels, though the specifics of AI introduce new complexities. Debates over the ethical responsibilities of scientists and technologists regarding military applications date back to the Manhattan Project, during which many scientists grappled with the implications of their work. More recently, in 2018, Google faced widespread internal dissent and public outcry over Project Maven, a Pentagon contract to develop AI for drone imagery analysis. Employee protests ultimately led Google to decline to renew the contract and to commit to a set of ethical AI principles.

The current dispute with Anthropic further crystallizes the "dual-use dilemma" inherent in many advanced technologies. AI, with its potential for both immense societal benefit and profound destructive capability, epitomizes this challenge. The standoff sets a crucial precedent for how private sector AI developers will engage with national security interests, and whether their ethical frameworks will hold sway against governmental demands. This is not merely a contractual dispute; it is a battle for the soul of AI development and the establishment of ethical guardrails in an era of rapid technological advancement.

The Dual-Use Dilemma and Industry Response

The broader AI industry is closely watching this unfolding drama. Anthropic’s principled stand could inspire other AI developers to adopt similar ethical restrictions on their technology’s use, particularly for military applications. This could lead to a fragmented landscape where different AI providers adhere to varying ethical codes, complicating the Department of Defense’s procurement strategies. Conversely, if Anthropic is forced to concede or is severely penalized, it could chill future attempts by tech companies to impose ethical constraints on their powerful technologies, reinforcing the idea that national security trumps corporate ethics.

That xAI, Elon Musk’s AI venture, has been mentioned as a potential alternative provider of classified-ready systems suggests the Pentagon is already exploring contingency plans. xAI, with its more explicit focus on "maximizing humanity’s collective understanding of the universe" and less overt emphasis on specific ethical red lines concerning military applications, might present a more amenable partner for the defense establishment. However, switching providers for highly complex, classified systems is not a trivial task and could incur significant costs and delays.

Broader Implications for AI Governance

The Anthropic-Pentagon standoff underscores a fundamental governance challenge: who ultimately decides how the most powerful AI systems are used? Is it the government, citing national security, or the private companies that develop these systems, citing ethical responsibilities? This debate has profound implications for the future of AI regulation, international arms control, and the balance of power between technological innovators and state actors.

From a social and cultural perspective, the public is increasingly aware of AI’s potential for both good and harm. Reports of AI being used for surveillance or autonomous weaponry often spark widespread concern. Anthropic’s public stance resonates with a segment of the public and the broader scientific community that advocates for responsible AI development and stringent ethical oversight. This incident brings to the forefront the need for clear, internationally agreed-upon norms and regulations for military AI, a challenge that global bodies and governments are only beginning to address.

Looking Ahead: A Defining Moment

As the deadline approaches, the outcome of this dispute remains uncertain. Whether Anthropic stands firm and faces the potential consequences or the Department of Defense finds a path to compromise, this episode will undoubtedly serve as a defining moment in the history of AI development and its relationship with military power. It highlights the complex ethical dilemmas at the heart of advanced technology and the urgent need for a robust framework to guide its deployment, ensuring that innovation serves humanity’s best interests while safeguarding democratic values and global stability. The resolution, whatever it may be, will set a significant precedent for how future collaborations, or confrontations, between Silicon Valley and the Pentagon will unfold.