Runway, best known for its AI video generation models, is expanding beyond model development. The company has announced a $10 million venture fund for early-stage companies working at the intersection of AI, media, and world simulation. It is also launching a "Builders" program that offers API credits to early- to mid-stage startups, part of a deliberate effort to build an ecosystem around what it calls "video intelligence." Together, the two initiatives position Runway not merely as a technology provider but as a platform for the next generation of AI-driven applications.

From AI Video Pioneer to Platform Enabler

Runway has carved out a strong niche in generative AI and is best known for its video creation tools. Its suite of products, including models like Gen-1 and Gen-2, has become a staple for professionals across the film industry, advertising agencies, and marketing departments, enabling them to generate and manipulate video content quickly. The company’s trajectory mirrors the broader evolution of generative AI, which progressed rapidly from text-based models to image synthesis and, most recently, to complex video generation. This progression reached a new milestone with Runway’s introduction of "general world models" last December, a development that extended its work beyond creative tooling into broader applications. World models mark a shift toward AI systems that can understand and simulate complex environments rather than just isolated media elements.

The strategic rationale behind Runway’s latest initiatives is multifaceted. As Alejandro Matamala Ortiz, Runway’s co-founder and Chief Design Officer, put it, the company believes that advancing from video generation to "video intelligence" will open up a much wider range of use cases across industries. No single company can pursue all of them, even one valued at around $5.3 billion post-money that has raised nearly $860 million from investors such as Nvidia and the Qatar Investment Authority, so Runway sees engaging external innovators as essential. The strategy lets Runway explore and support applications it cannot pursue directly, effectively crowdsourcing innovation on top of its core research and infrastructure.

The Strategic Imperative of Investment

The $10 million venture fund signals Runway’s intent to actively shape the AI landscape. Its investment thesis covers three areas, or "buckets": companies advancing AI primitives, those building innovative media applications, and firms developing technologies for world simulation. The initial announcement did not fully elaborate on each bucket, but the categories broadly correspond to the foundational technologies, creative applications, and immersive experiences that Runway envisions for the future. The fund plans to write checks of up to $500,000 for pre-seed and seed-stage startups, providing early capital to promising ventures.

Runway is not a newcomer to early-stage backing; for the past eighteen months, it has quietly supported a select group of founders and companies. This includes LanceDB, a firm specializing in databases optimized for AI applications, and Tamarind Bio, which leverages AI for novel protein design in drug discovery. Another notable investment is Cartesia, a company focused on real-time audio generation, whose products are seen as complementary to Runway’s video capabilities. Chang She, co-founder and CEO of LanceDB, emphasized the strategic alignment, stating that the "next generation of AI models will be built on multimodal data — video, audio, images, text together," and that Runway stands out as an investor who deeply understands this critical evolution.

This trend of leading AI developers establishing their own venture arms is gaining momentum across the industry. OpenAI pioneered this model with its Startup Fund, followed by AI search innovator Perplexity, which launched a $50 million seed and pre-seed fund last year. Similarly, CoreWeave, a cloud computing provider specializing in AI workloads, introduced CoreWeave Ventures to back AI companies. This pattern suggests a strategic shift among core AI infrastructure and model developers. By investing in nascent companies, these larger players aim to foster an ecosystem that utilizes their technologies, discover new use cases, and potentially identify future acquisition targets or strategic partners. It’s a mechanism for distributed innovation, allowing relatively small teams, like Runway’s 150 employees, to influence a broader technological frontier.

Pioneering "Video Intelligence" and Immersive Worlds

The conceptual leap from "AI video models" to "video intelligence" signifies a move beyond mere generation to understanding, interaction, and simulation. Video intelligence implies systems that can not only create visual narratives but also interpret complex visual data, respond dynamically, and contribute to the creation of interactive, living digital environments. This vision aligns with the broader industry pursuit of multimodal AI, where models can seamlessly process and generate information across various data types – text, image, audio, and video – to create more holistic and intelligent systems.

The underlying technology driving this vision is Runway’s family of "general world models." These models are designed to go beyond generating isolated video clips, aiming instead to simulate consistent, coherent digital environments. This capability is crucial for developing interactive experiences, where AI agents can maintain context, respond logically to user input, and inhabit virtual worlds with a degree of autonomy. The implications for industries like gaming, virtual reality, and advanced simulation are profound, potentially enabling entirely new forms of entertainment, training, and exploration.

The ‘Builders’ Program: Fostering Innovation

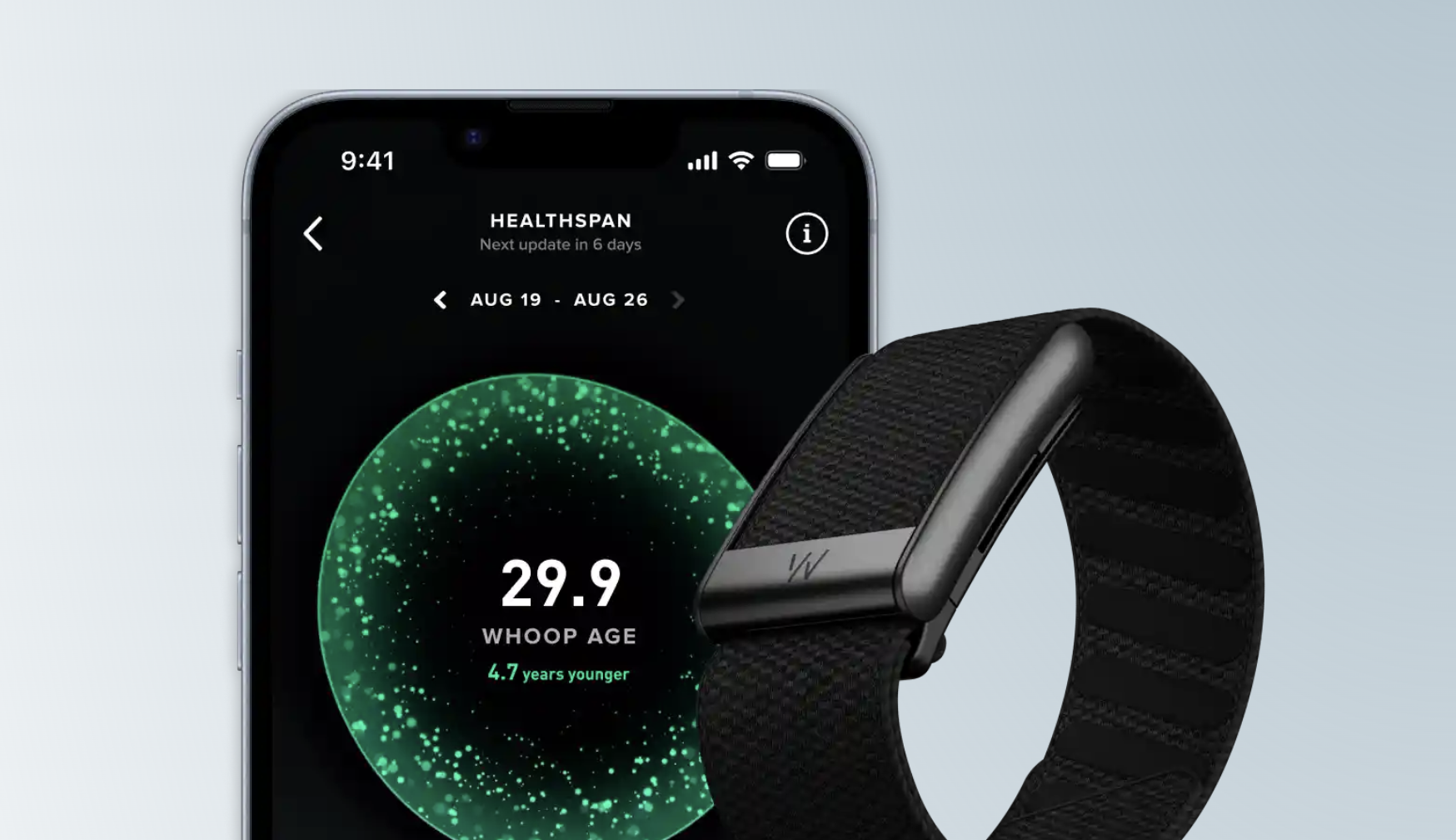

Complementing its direct investment strategy, Runway’s "Builders" program is designed to empower a wider array of startups by providing critical resources. Eligible seed to Series C startups can apply for 500,000 API credits, offering them significant access to Runway’s advanced capabilities without prohibitive upfront costs. A cornerstone of this program is access to "Characters," Runway’s recently unveiled real-time video agent API. Powered by the new general world models, Characters enables users to interact with generative AI agents that can manifest with varying visual styles, from animated figures to photorealistic representations, and possess a voice.

The Builders program is explicitly designed as an incubator for innovation, allowing Runway to observe firsthand the diverse applications that startups will develop using this technology. As Ortiz noted, the ability to converse with a real-time video agent is a relatively new possibility, and the company is eager to see how innovative teams harness its potential for positive impact. The inaugural cohort of the Builders program already showcases the breadth of anticipated applications, including companies like Cartesia, MSCHF, Oasys Health, Spara, Subject, and Supersonik. These startups are exploring use cases spanning AI customer support agents, interactive brand characters, personalized onboarding experiences, real-time sales assistants, and sophisticated synthetic media tools.

The potential societal and economic impact of such technology is vast. Ortiz expressed particular enthusiasm for its applications in telemedicine, where AI characters could provide interactive health information or support, and in education, where personalized AI tutors could revolutionize learning. Given Runway’s roots in creative fields, the integration of Characters into gaming and new forms of interactive entertainment is also a highly anticipated development. The vision extends to a future where these combined technologies allow for the generation and simulation of entire interactive environments, enabling users to participate in and hold conversations with characters within these meticulously crafted digital worlds.

A Glimpse into the Future of Interaction

The market for interactive AI characters is becoming increasingly competitive, with several companies already making significant strides. Firms like Inworld and Charisma are developing AI platforms for creating non-player characters (NPCs) in games and enhancing digital storytelling. StoReel is experimenting with AI-generated micro-dramas that users can engage with directly, while Character AI has gained substantial popularity for its conversational AI characters. Runway’s Characters enters this crowded field and is distinguished from these rivals by its foundation in advanced video generation and world modeling.

The long-term vision articulated by Runway’s leadership suggests a fundamental transformation of the internet itself. Ortiz envisions a "new kind of internet" that is more personalized, immersive, and interactive in real time. This perspective aligns with broader industry discussions around the metaverse, spatial computing, and the increasing convergence of digital and physical realities. As AI models become more sophisticated in understanding and generating complex, multimodal data, truly immersive digital experiences, in which users interact naturally with AI-driven characters and dynamic environments, move closer to realization. Runway’s dual strategy of investment and developer enablement is a calculated move to secure its position at the forefront of this shift and to shape the tools and platforms that will define the next era of human-computer interaction.