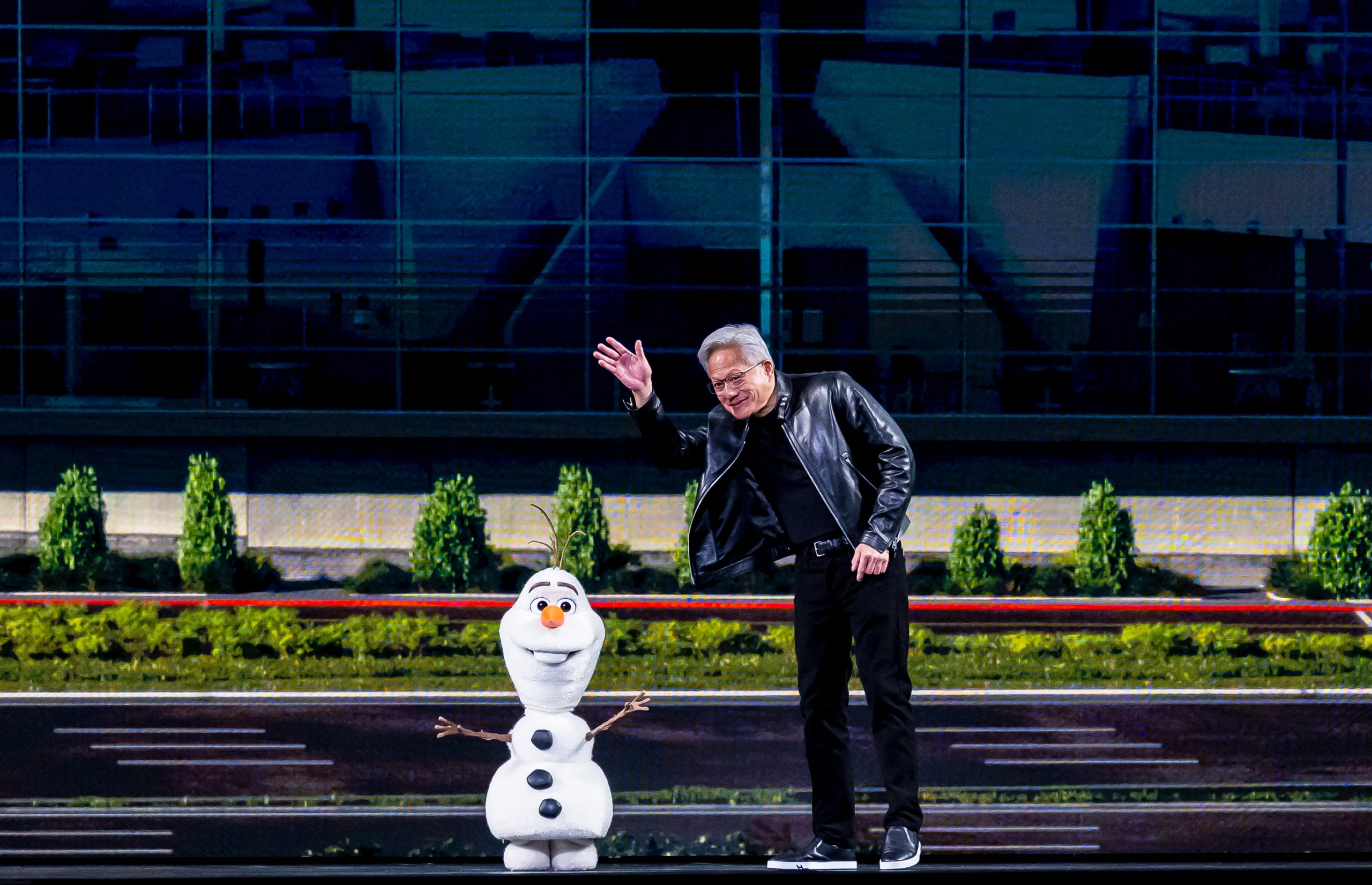

The annual GPU Technology Conference (GTC), Nvidia’s flagship event, recently served as a forceful declaration of intent from the semiconductor giant and reinforced its position at the forefront of the artificial intelligence revolution. CEO Jensen Huang, in his characteristic leather jacket, held the stage for a two-and-a-half-hour keynote that laid out an ambitious future, including a projection of $1 trillion in AI chip sales by 2027. Alongside that forecast, Huang urged every organization to adopt an "OpenClaw strategy," underscoring Nvidia’s vision of AI infrastructure that reaches into every business. The keynote closed with a memorable, if unconventional, appearance by a rambling Olaf robot, a reminder of the diverse and sometimes whimsical applications emerging from the AI landscape. The overarching message was clear: Nvidia aims to be the foundational technology provider for nearly every area of modern innovation, from AI model training and autonomous vehicle systems to immersive experiences in entertainment venues such as Disney parks.

A Trillion-Dollar Vision: Redefining the AI Economy

Nvidia’s projection of $1 trillion in AI chip sales by 2027 is more than a financial target; it signals a reorientation of the global technology economy. To grasp the scale of this forecast, consider the current landscape. In recent years, the explosion of generative AI, large language models (LLMs), and advanced machine learning applications has created unprecedented demand for specialized computing hardware. Nvidia’s Graphics Processing Units (GPUs) were originally designed to render complex graphics in video games. They turned out to be well suited to the parallel processing demands of AI workloads. That fortunate alignment transformed the company from a graphics-card specialist into the leading supplier of AI infrastructure.

The $1 trillion figure reflects not only anticipated demand for high-performance AI accelerators but also Nvidia’s expansion into a broader ecosystem of software, networking, and services. It assumes that AI investment will keep escalating across sectors, from cloud hyperscalers building vast AI factories to enterprises integrating AI into their core operations. Analysts widely regard the projection as aggressive. Even so, many acknowledge the market forces behind it: competitive pressure to adopt AI, increasingly sophisticated models, and the spread of AI tools to a wider range of users are driving growth that few other industries can match. If the milestone is reached, it would reshape the semiconductor industry, with significant ripple effects across supply chains, manufacturing, and global technological development. It would also further cement AI’s status as a primary driver of economic growth.

The "OpenClaw" Imperative: Democratizing AI Development

Central to Huang’s address was the call for an "OpenClaw strategy," presented as a way to streamline and secure the development and deployment of AI applications. Beyond the technical details discussed at the conference, the core principle is a modular, scalable, and secure environment for AI innovation. The strategy targets a critical problem in the AI space: the complexity and fragmentation of the tools, data, and infrastructure needed to build and manage sophisticated AI systems.

"OpenClaw" is envisioned as a comprehensive platform offering standardized interfaces, robust security protocols, and efficient resource management for AI projects. For startups, this could lower the barrier to entry. They could use advanced AI capabilities without the prohibitive cost and expertise traditionally required to build bespoke infrastructure. For large enterprises, it promises stronger security, compliance, and interoperability across AI initiatives, allowing AI to be woven more deeply into operational workflows. The push fits Nvidia’s broader objective of making AI accessible and manageable for more developers and businesses. It also builds on the success of CUDA, Nvidia’s parallel computing platform. CUDA has historically kept developers inside Nvidia’s ecosystem by pairing high performance with a rich software library. An "OpenClaw strategy" could extend that lock-in by offering an integrated solution for the entire AI lifecycle, from data preparation and model training to deployment and continuous optimization. Nvidia presents the initiative as a step toward democratizing AI: broadening who can harness the technology while maintaining security and efficiency standards.

From Data Centers to Theme Parks: Nvidia’s Ubiquitous Ambition

Nvidia’s ambition extends far beyond merely selling chips; it aims to embed its technology into the very fabric of industries, creating a ubiquitous AI presence. The keynote highlighted several key areas demonstrating this expansive vision, including AI training, autonomous vehicles, and even novel applications in entertainment, such as Disney parks.

In AI training, Nvidia’s Hopper-generation H100 and newer Blackwell-series GPUs form the backbone of modern data centers, powering the development of ever-larger and more complex AI models. Huang calls these facilities "AI factories": not just server farms but computational systems built for continuous AI development. For autonomous vehicles, Nvidia’s DRIVE platform provides a full hardware and software stack for self-driving capabilities. The company supplies more than chips. It also offers simulation environments built on Omniverse, where autonomous systems can be tested and refined in virtual worlds before they are deployed in the physical one. This simulation-first approach speeds development, reduces costs, and improves safety.

Perhaps most illustrative of Nvidia’s diverse reach is its engagement with entertainment giants like Disney. The application here involves everything from powering advanced robotics and interactive experiences within theme parks to creating digital twins of entire operational environments. These digital twins, built within Omniverse, allow designers, engineers, and operators to simulate, plan, and optimize physical spaces and experiences in a virtual realm. This level of integration transforms how entertainment is created and consumed, blurring the lines between the physical and digital. These examples underscore Nvidia’s strategic goal: to be foundational to any endeavor that requires intelligent automation, sophisticated simulation, or high-performance computing, thus extending its influence across an ever-widening array of industries.

Historical Context: Nvidia’s Evolution from Gaming to AI Dominance

Nvidia’s journey to its current position as an AI powerhouse is a testament to strategic foresight and adaptability. Founded in 1993, the company initially made its mark in the nascent market for 3D graphics cards, primarily serving the burgeoning PC gaming industry. Its early success was built on delivering increasingly powerful GPUs that could render complex visual environments with unprecedented realism. However, a pivotal moment arrived in 2006 with the introduction of CUDA (Compute Unified Device Architecture). CUDA transformed Nvidia’s GPUs from specialized graphics processors into general-purpose parallel computing engines. This opened the door for researchers and developers to harness the immense parallel processing capabilities of GPUs for scientific computing, data analysis, and, crucially, artificial intelligence.

The true inflection point came with the deep learning revolution of the early 2010s. Researchers found that GPUs were exceptionally well suited to accelerating the massive matrix multiplications required to train deep neural networks. A landmark example was AlexNet, which was trained on two Nvidia GTX 580 GPUs and won the 2012 ImageNet image-classification challenge by a wide margin. Having spent years building the CUDA ecosystem, Nvidia was well positioned to capitalize on this shift. While rivals remained focused on traditional CPUs, Nvidia poured resources into AI-specific hardware and software libraries. That early, sustained commitment gave it a commanding lead in the AI hardware market, a vast developer community, and a formidable technological moat that still underpins its dominance. Its evolution from a gaming hardware vendor to a full-stack AI platform provider is a striking story of technological transformation and market leadership.

Market Dynamics and Competitive Landscape

Nvidia’s commanding position in the AI chip market is undeniable, yet competition is intensifying. Nvidia’s data-center GPUs, from the H100 through the Blackwell generation, remain the gold standard for AI training, but other players are aggressively pursuing market share. Competitors include traditional chipmakers such as AMD, with its Instinct MI300X accelerators, and Intel, with its Gaudi series designed for AI workloads. Major cloud providers are also developing custom AI silicon to optimize their own infrastructure and reduce reliance on external vendors. These include Google with its Tensor Processing Units (TPUs), Amazon with Inferentia and Trainium, and Microsoft with Maia.

Nvidia’s primary competitive advantage lies not just in its hardware performance but also in its comprehensive software ecosystem, particularly CUDA. This platform has cultivated a massive developer community, offering a vast array of libraries, tools, and frameworks optimized for Nvidia GPUs. This "software moat" creates significant switching costs for developers and organizations, making it challenging for competitors to gain traction even with comparable hardware performance. However, challenges persist, including potential supply chain constraints for advanced packaging technologies, geopolitical tensions impacting global chip manufacturing, and the escalating costs of developing and deploying cutting-edge AI hardware. Despite these hurdles, the relentless growth of the AI market continues to present immense opportunities for Nvidia to expand its influence across new applications and enterprise segments.

Societal and Economic Implications of Pervasive AI

The expansion of AI, heavily underpinned by companies like Nvidia, carries profound societal and economic implications. On the economic front, pervasive AI promises to drive unprecedented productivity gains across industries, automating routine tasks, optimizing complex processes, and fostering innovation in areas ranging from drug discovery to personalized education. This could lead to significant economic growth, creating new markets and job categories focused on AI development, deployment, and oversight.

However, the rapid acceleration of AI also raises critical questions. Concerns about job displacement in sectors amenable to automation are prevalent, necessitating discussions about workforce retraining and new social safety nets. Ethical considerations surrounding AI bias, data privacy, and algorithmic transparency are paramount. As AI systems become more autonomous and integrated into critical infrastructure, ensuring their safety, reliability, and fairness becomes a societal imperative. Furthermore, the immense computational power required for advanced AI training has significant environmental implications, particularly regarding energy consumption. The drive for more efficient AI hardware and greener data center operations will become increasingly important. Nvidia’s role in democratizing access to AI tools also means a wider range of actors will wield this powerful technology, necessitating robust frameworks for responsible AI development and governance. The industry, led by companies like Nvidia, is not just building technology; it is actively shaping the future of work, society, and human interaction.

The Future of AI: Beyond the Keynote

Nvidia’s GTC keynote was a strong indicator of where artificial intelligence is heading. It underscored the company’s long-term strategy to be a full-stack AI provider, offering not just chips but the entire software and platform ecosystem needed to build, deploy, and scale AI solutions. The projection of $1 trillion in AI chip sales by 2027 shows how much transformative and economic potential industry leaders see in AI.

The presence of the "rambling Olaf robot" at the close of the keynote, while perhaps a lighter moment, can be seen as symbolic of AI’s diverse and sometimes experimental frontiers. It represents the creative, human-centric applications that AI can enable, extending beyond purely computational tasks into interactive and experiential domains. This blend of formidable financial projections, strategic technological frameworks, and imaginative applications paints a vivid picture of a future deeply intertwined with artificial intelligence. Nvidia, through its relentless innovation and expansive vision, continues to position itself as a pivotal architect of this intelligent future, setting the pace for global technological advancement and shaping the next era of computing.